By Jeff Bier

Founder

Embedded Vision Alliance

Co-Founder and President

BDTI

This article was originally published on Altera's Technology Center. It is reprinted here with the permission of Altera.

With the emergence of increasingly capable processors, image sensors, memories and other semiconductor devices, along with associated algorithms, it's becoming practical to incorporate computer vision capabilities into a wide range of embedded systems, enabling them to analyze their environments via video inputs. Products like Microsoft's Kinect game controller and Mobileye's driver assistance systems are raising awareness of the incredible potential of embedded vision technology. As a result, many embedded system designers are beginning to think about implementing embedded vision capabilities. This article explores the opportunity for embedded vision, compares various processor options for implementing it, and introduces an industry alliance created to help engineers incorporate vision capabilities into their designs.

The term “embedded vision” refers to the use of computer vision technology in embedded systems. Stated another way, “embedded vision” refers to embedded systems that extract meaning from visual inputs. Similar to the way that wireless communication has become pervasive over the past 10 years, embedded vision technology is poised to be widely deployed in the next 10 years.

It’s clear that embedded vision technology can bring huge value to a vast range of applications (Figure 1). Two examples are Mobileye’s vision-based driver assistance systems, intended to help prevent motor vehicle accidents, and MG International’s swimming pool safety system, which helps prevent swimmers from drowning. And for sheer geek appeal, it’s hard to beat Intellectual Ventures’ laser mosquito zapper, designed to prevent people from contracting malaria.

Figure 1. Embedded vision got its start as computer vision in applications such as assembly-line inspection, optical character recognition, robotics, surveillance, and military systems. In recent years, however, decreasing costs and increasing capabilities have broadened and accelerated its penetration into numerous other markets.

Just as high-speed wireless connectivity began as an exotic, costly technology, so far embedded vision technology typically has been found in complex, expensive systems, such as a surgical robot for hair transplantation and quality-control inspection systems for manufacturing.

Advances in digital integrated circuits were critical in enabling high-speed wireless technology to evolve from exotic to mainstream. When chips got fast enough, inexpensive enough, and energy efficient enough, high-speed wireless became a mass-market technology. Today one can buy a broadband wireless modem for under $100.

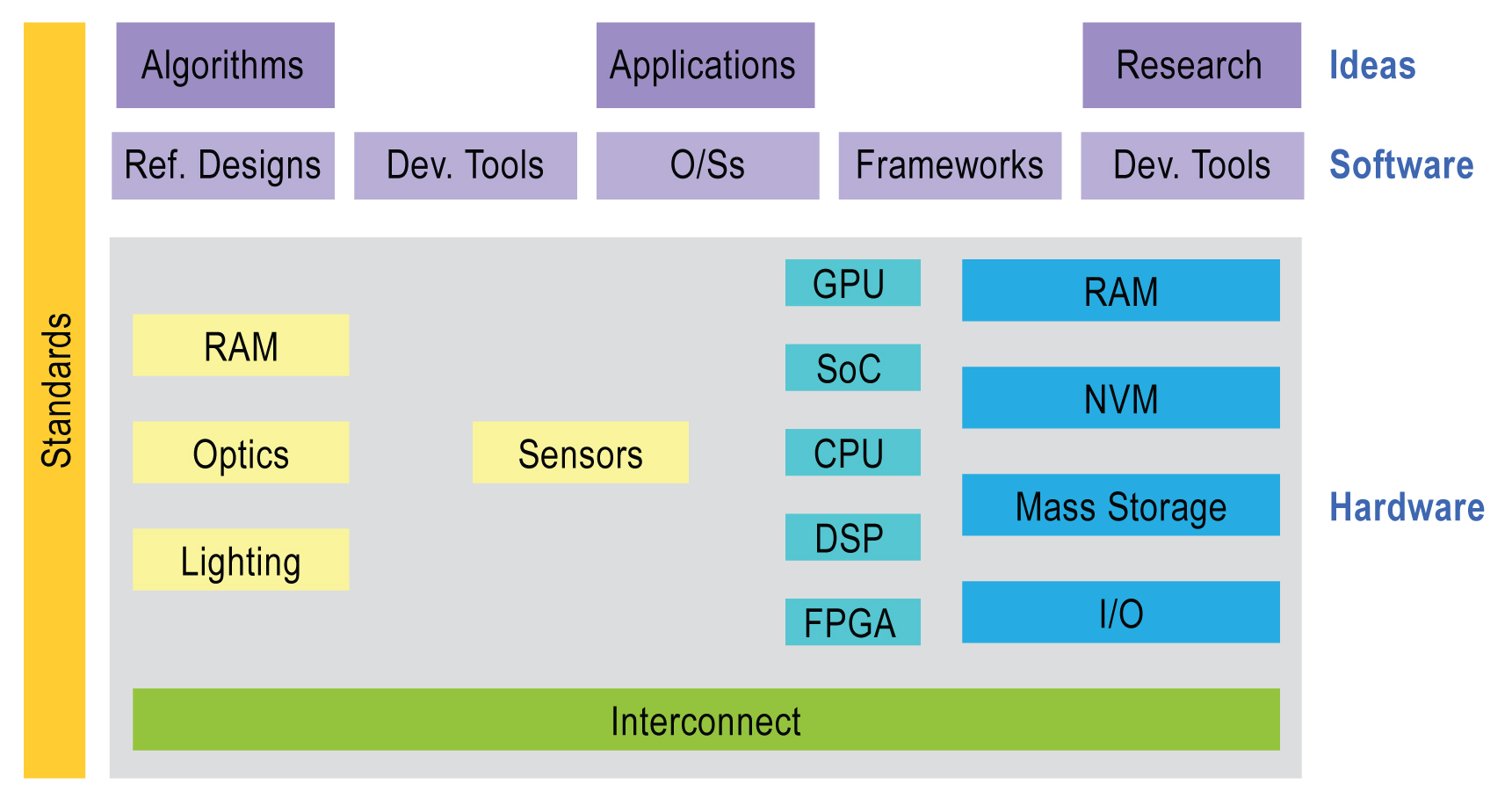

Similarly, advances in digital chips are now paving the way for the proliferation of embedded vision into high-volume applications (Figure 2). Like wireless communication, embedded vision requires lots of processing power—particularly as applications increasingly adopt high-resolution cameras and make use of multiple cameras. Providing that processing power at a cost low enough to enable mass adoption is a big challenge. This challenge is multiplied by the fact that embedded vision applications require a high degree of programmability. In contrast to wireless applications where standards mean that, for example, baseband algorithms don’t vary dramatically from one cell phone handset to another, in embedded vision applications there are great opportunities to get better results—and enable valuable features—through unique algorithms.

Figure 2. The embedded vision ecosystem spans hardware, semiconductor, and software component suppliers, subsystem developers, systems integrators, and end users, along with the fundamental research that provides ongoing breakthroughs. This article focuses on the embedded vision algorithm processing options shown in the center of the figure.

With embedded vision, the industry is entering a “virtuous circle” of the sort that has characterized many other digital signal processing (DSP) application domains. Although there are few chips dedicated to embedded vision applications today, these applications are increasingly adopting high-performance, cost-effective processing chips developed for other applications, including digital signal processors, CPUs, FPGAs, and GPUs. As these chips continue to deliver more programmable performance per dollar and per watt, they will enable the creation of more high-volume embedded vision products. Those high-volume applications, in turn, will attract more attention from silicon providers, who will deliver even better performance, efficiency, and programmability.

Processing Candidates

As previously mentioned, vision algorithms typically require high compute performance. And, of course, embedded systems of all kinds are usually required to fit into tight cost and power consumption envelopes. In other DSP application domains, such as digital wireless communications, chip designers achieve this challenging combination of high performance, low cost, and low power by using specialized coprocessors and accelerators to implement the most demanding processing tasks in the application. These coprocessors and accelerators are typically not programmable by the chip user, however.

This tradeoff is often acceptable in wireless applications, where standards mean that there is strong commonality among algorithms used by different equipment designers. In vision applications, however, there are no standards constraining the choice of algorithms. On the contrary, there are often many approaches to choose from to solve a particular vision problem. Therefore, vision algorithms are very diverse, and tend to change fairly rapidly over time. As a result, the use of non-programmable accelerators and coprocessors is less attractive for vision applications than for applications like digital wireless and compression-centric consumer video equipment.

Achieving the combination of high performance, low cost, low power, and programmability is challenging. Special-purpose hardware typically achieves high performance at low cost, but with little programmability. General-purpose CPUs provide programmability, but with weak performance, poor cost-effectiveness, and/or low energy efficiency. Demanding embedded vision applications most often use a combination of processing elements, which might include, for example:

- A general-purpose CPU for heuristics, complex decision-making, network access, user interface, storage management, and overall control

- A high-performance digital signal processor for real-time, moderate-rate processing with moderately complex algorithms

- One or more highly parallel engines for pixel-rate processing with simple algorithms
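The division of labor in the list above can be sketched as a toy three-stage pipeline. This is only an illustration in plain Python, not a description of any particular product: the function names (`threshold`, `centroid`, `decide`), the tiny 4x4 frame, and the threshold values are all hypothetical. In a real system each stage would typically run on a different processing element.

```python
def threshold(frame, level):
    """Pixel-rate stage: one simple, independent operation per pixel.

    This kind of work maps naturally onto a highly parallel engine.
    """
    return [[1 if p > level else 0 for p in row] for row in frame]


def centroid(mask):
    """Moderate-rate stage: reduce the mask to a few numbers per frame.

    This kind of work is a plausible fit for a digital signal processor.
    """
    count = sx = sy = 0
    for y, row in enumerate(mask):
        for x, v in enumerate(row):
            if v:
                count += 1
                sx += x
                sy += y
    if count == 0:
        return 0, None
    return count, (sx / count, sy / count)


def decide(count, min_pixels):
    """Control stage: per-frame decision logic, typical of a general-purpose CPU."""
    return "object detected" if count >= min_pixels else "nothing detected"


# Hypothetical 4x4 grayscale frame with a bright 2x2 blob in the middle.
frame = [
    [10, 12, 11, 10],
    [10, 200, 210, 10],
    [11, 205, 220, 12],
    [10, 11, 10, 10],
]
mask = threshold(frame, 128)
count, center = centroid(mask)
print(count, center, decide(count, min_pixels=3))  # 4 (1.5, 1.5) object detected
```

Note how the data volume shrinks at each step: every pixel is touched in the first stage, a handful of numbers come out of the second, and the third produces a single decision per frame.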

While any processor can in theory be used for embedded vision, the most promising types today are:

- High-performance embedded CPU

- Application-specific standard product (ASSP) in combination with a CPU

- Graphics processing unit (GPU) with a CPU

- Digital signal processor with accelerator(s) and a CPU

- Mobile “application processor”

- Field programmable gate array (FPGA) with a CPU

Subsequent sections of this article will briefly introduce each of these processor types, along with some of their key strengths and weaknesses for embedded vision applications.

High-Performance Embedded CPU

In many cases, embedded CPUs cannot provide enough performance—or cannot do so at acceptable price or power consumption levels—to implement demanding vision algorithms. Often, memory bandwidth is a key performance bottleneck, since vision algorithms typically use large amounts of data, and don’t tend to repeatedly access the same data. The memory systems of embedded CPUs are not designed for these kinds of data flows. However, like most types of processors, embedded CPUs become more powerful over time, and in some cases can provide adequate performance.
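To give a rough sense of scale for the memory bandwidth concern, the following back-of-the-envelope sketch computes the raw pixel traffic of an uncompressed video stream. The resolution, frame rate, bytes per pixel, and number of passes over the data are illustrative assumptions, not figures from any specific product, and the function name is hypothetical.

```python
def raw_pixel_rate_bytes_per_s(width, height, fps,
                               bytes_per_pixel=1, cameras=1, passes=1):
    """Bytes per second moved if every pixel is touched `passes` times per frame."""
    return width * height * fps * bytes_per_pixel * cameras * passes


# Hypothetical example: one 1920x1080 grayscale camera at 30 frames/s,
# with each frame read once and written once (passes=2).
rate = raw_pixel_rate_bytes_per_s(1920, 1080, 30, bytes_per_pixel=1, passes=2)
print(f"{rate / 1e6:.1f} MB/s")  # 124.4 MB/s
```

Because each pixel is typically used only briefly before the algorithm moves on, little of this traffic benefits from caching, and the figure scales linearly with resolution, frame rate, camera count, and the number of processing passes.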

Compelling reasons exist to run vision algorithms on a CPU when possible. First, most embedded systems need a CPU for a variety of functions. If the required vision functionality can be implemented using that CPU, then the complexity of the system is reduced relative to a multiprocessor solution. In addition, most vision algorithms are initially developed on PCs using general-purpose CPUs and their associated software development tools. Similarities between PC CPUs and embedded CPUs (and their associated tools) mean that it is typically easier to create embedded implementations of vision algorithms on embedded CPUs than on other kinds of embedded vision processors. Finally, embedded CPUs are typically the easiest embedded vision processors to use, due to their relatively straightforward architectures, sophisticated tools, and other application development infrastructure, such as operating systems.

ASSP in Combination with a CPU

ASSPs are specialized, highly integrated chips tailored for specific applications or application sets. ASSPs may incorporate a CPU, or use a separate CPU chip. By virtue of specialization, ASSPs typically deliver superior cost- and energy-efficiency compared with other types of processing solutions. Among other techniques, ASSPs deliver this efficiency through the use of specialized coprocessors and accelerators. And, because ASSPs are by definition focused on a specific application, they are usually delivered with extensive application software.

The specialization that enables ASSPs to achieve strong efficiency, however, also leads to their key limitation: lack of flexibility. An ASSP designed for one application is typically not suitable for another application, even one that is related to the target application. ASSPs use unique architectures, and this can make programming them more difficult than with other kinds of processors. Indeed, some ASSPs are not user-programmable. Another consideration is risk. ASSPs often are delivered by small suppliers, and this may increase the risk that there will be difficulty in supplying the chip, or in delivering successor products that enable system designers to upgrade their designs without having to start from scratch.

GPU with a CPU

GPUs, intended mainly for 3D graphics, are increasingly capable of being used for other functions, including vision applications. The GPUs used in personal computers today are explicitly intended to be programmable to perform functions other than 3D graphics. Such GPUs are termed “general-purpose GPUs” or “GPGPUs.” GPUs have massive parallel processing horsepower. They are ubiquitous in personal computers. GPU software development tools are readily and freely available, and getting started with GPGPU programming is not terribly complex. For these reasons, GPUs are often the parallel processing engines of first resort for computer vision algorithm developers who develop their algorithms on PCs, and then may need to accelerate execution of their algorithms for simulation or prototyping purposes.

GPUs are tightly integrated with general-purpose CPUs, sometimes on the same chip. However, one of the limitations of GPU chips is the limited variety of CPUs with which they are currently integrated, and the limited number of CPU operating systems that support that integration. Today, low-cost, low-power GPUs exist, designed for products like smartphones and tablets. However, these GPUs are often not GPGPUs, and therefore using them for applications other than 3D graphics is very challenging.

Digital Signal Processor with Accelerator(s) and a CPU

Digital signal processors are microprocessors specialized for signal processing algorithms and applications. This specialization typically makes digital signal processors more efficient than general-purpose CPUs for the kinds of signal processing tasks that are at the heart of vision applications. In addition, digital signal processors are relatively mature and easy to use compared to other kinds of parallel processors.

Unfortunately, while digital signal processors do deliver higher performance and efficiency than general-purpose CPUs on vision algorithms, they often fail to deliver sufficient performance for demanding algorithms. For this reason, digital signal processors are often supplemented with one or more coprocessors. A typical digital signal processor chip for vision applications therefore comprises a CPU, a digital signal processor, and multiple coprocessors. This heterogeneous combination can yield excellent performance and efficiency, but can also be difficult to program. Indeed, digital signal processor vendors typically do not enable users to program the coprocessors; rather, the coprocessors run software function libraries developed by the chip supplier.

Mobile “Application Processor”

A mobile “application processor” is a highly integrated system-on-chip, typically designed primarily for smartphones but used for other applications. Application processors typically comprise a high-performance CPU core and a constellation of specialized coprocessors, which may include a digital signal processor, a GPU, a video processing unit (VPU), a 2D graphics processor, an image acquisition processor, etc.

These chips are specifically designed for battery-powered applications, and therefore place a premium on energy efficiency. In addition, because of the growing importance of and activity surrounding smartphone and tablet applications, mobile application processors often have strong software development infrastructure, including low-cost development boards, Linux and Android ports, etc. However, as with the digital signal processors discussed in the previous section, the specialized coprocessors found in application processors are usually not user-programmable, which limits their utility for vision applications.

FPGA with a CPU

FPGAs are flexible logic chips that can be reconfigured at the gate and block levels. This flexibility enables the user to craft computation structures that are tailored to the application at hand. It also allows selection of I/O interfaces and on-chip peripherals matched to the application requirements. The ability to customize compute structures, coupled with the massive amount of resources available in modern FPGAs, yields high performance coupled with good cost- and energy-efficiency.

Using FPGAs, however, is essentially a hardware design function, rather than a software development activity. FPGA design is typically performed using hardware description languages (Verilog or VHDL) at the register transfer level (RTL), a very low level of abstraction. This makes FPGA design time-consuming and expensive, compared to using the other types of processors discussed in this article.

With that said, using FPGAs is getting easier, due to several factors. First, so-called “IP block” libraries (libraries of reusable FPGA design components) are becoming increasingly capable. In some cases, these libraries directly address vision algorithms. In other cases, they enable supporting functionality, such as video I/O ports or line buffers. Also, FPGA suppliers and their partners increasingly offer reference designs: reusable system designs incorporating FPGAs and targeting specific applications. Finally, high-level synthesis tools, which enable designers to implement vision and other algorithms in FPGAs using high-level languages, are increasingly effective. Users can implement relatively low-performance CPUs in the FPGA. And, in a few cases, FPGA manufacturers are integrating high-performance CPUs within their devices.
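For readers unfamiliar with the line buffers mentioned above, the following is a minimal behavioral sketch in plain Python of how a streaming 3x3 filter can work while storing only two previous image rows. It assumes pixels arrive one at a time in raster order. The function name `stream_box3x3` is hypothetical, and an actual FPGA implementation would be written in a hardware description language or generated by a high-level synthesis tool rather than run as Python.

```python
def stream_box3x3(pixels, width):
    """Consume pixels in raster order and yield the sum of each full 3x3 window.

    Only two line buffers (the previous two rows) and a 3x3 window of
    registers are kept, so the whole frame never needs to be stored.
    """
    line1 = [0] * width  # row y-1, indexed by column
    line2 = [0] * width  # row y-2, indexed by column
    window = [[0, 0, 0] for _ in range(3)]  # rows y-2, y-1, y

    for i, p in enumerate(pixels):
        x, y = i % width, i // width

        # Read the two pixels directly above the incoming one,
        # then update the line buffers for use on the next row.
        above1, above2 = line1[x], line2[x]
        line2[x] = above1
        line1[x] = p

        # Shift the window one column to the left and insert the new column.
        for row, new in zip(window, (above2, above1, p)):
            row[0], row[1], row[2] = row[1], row[2], new

        # Emit a result once the window lies fully inside the image.
        if x >= 2 and y >= 2:
            yield sum(sum(row) for row in window)


# Hypothetical 4-pixel-wide, 3-row image holding the values 1..12.
print(list(stream_box3x3(range(1, 13), width=4)))  # [54, 63]
```

The storage cost is two rows plus nine values regardless of image height, which is why line buffers are a common building block for pixel-rate processing in hardware.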

In Conclusion

With embedded vision, the industry is entering a “virtuous circle” of the sort that has characterized many other DSP application domains. Although there are few chips dedicated to embedded vision applications today, these applications are increasingly adopting high-performance, cost-effective processing chips developed for other applications, including digital signal processors, CPUs, FPGAs, and GPUs. As these chips continue to deliver more programmable performance per dollar and per watt, they will enable the creation of more high-volume embedded vision products. Those high-volume applications, in turn, will attract more attention from silicon providers, who will deliver even better performance, efficiency, and programmability. And the Embedded Vision Alliance will help empower engineers to harness these chips to create a wide variety of amazing new products.

For more information, please visit www.Embedded-Vision.com. Contact the Embedded Vision Alliance at [email protected] or +1-510-451-1800.

Sidebar: The Embedded Vision Alliance

As discussed earlier, embedded vision technology has the potential to enable a wide range of electronic products that are more intelligent and responsive than before, and thus more valuable to users. It can add helpful features to existing products. And it can provide significant new markets for hardware, software and semiconductor manufacturers. The Embedded Vision Alliance, a worldwide organization of technology developers and providers, is working to empower engineers to transform this potential into reality in a rapid and efficient manner.

More specifically, the mission of the Alliance is to provide engineers with practical education, information, and insights to help them incorporate embedded vision capabilities into products. To execute this mission, the Alliance has developed a full-featured website, freely accessible to all and including (among other things) articles, videos, a daily news portal and a discussion forum staffed by a diversity of technology experts. Registered website users can receive the Embedded Vision Alliance's e-mail newsletter; they also gain access to the Embedded Vision Academy, containing numerous training videos, technical papers and file downloads, intended to enable those new to the embedded vision application space to rapidly ramp up their expertise.

A few examples of compelling content on the Embedded Vision Alliance website include:

- "Introduction To Computer Vision Using OpenCV", the combination of a descriptive article, tutorial video, and executable software demo.

- A three-part video interview with Jitendra Malik, Arthur J. Chick Professor of EECS at the University of California at Berkeley and a computer vision academic pioneer.

- Information on the definition and development of Cernium's Archerfish, a consumer-targeted and embedded vision-based surveillance system, in both article and video interview forms.