Resources

In-depth information about edge AI and vision applications, technologies, products, markets and trends.

The content in this section of the website comes from Edge AI and Vision Alliance members and other industry luminaries.

All Resources

“Enabling “GenAI Everywhere”: Flexible Model Compatibility and Insight-Driven Workflows,” a Presentation from Nota AI

Tae-Ho Kim, Co-Founder and CTO at Nota AI, presents “Enabling “GenAI Everywhere”: Flexible Model Compatibility and Insight-Driven Workflows” at the May 2026 Embedded Vision Summit. As generative AI models rapidly evolve, with increasingly dynamic transformer architectures

Akida Pico: The Tiny Brain Making “Always-On” AI a Reality

This blog post was originally published at BrainChip’s website. It is reprinted here with the permission of BrainChip. In the world of Edge AI, there’s always been a tradeoff: high intelligence, always-on capability, or long battery

Macnica Americas Becomes Authorized Distributor for NAMUGA Vision Connectivity

New relationship brings NAMUGA’s advanced camera modules and 3D sensing technologies to customers developing next-generation intelligent systems Solana Beach, California — June 1, 2026 — Macnica Americas announced that it is now an authorized

“Roads to Robots: How Generative AI Is Redefining Memory and Storage for Embodied AI,” a Presentation from Micron

Saideep Tiku, Principal System Architect at Micron, presents “Roads to Robots: How Generative AI Is Redefining Memory and Storage for Embodied AI” at the May 2026 Embedded Vision Summit. Generative AI has accelerated the automation

Four Generations of the Rapidflare Agent Harness – Spark, Flame, Blaze and Forge

How the Rapidflare agent harness has evolved across four generations — from a simple RAG pipeline in 2023 to a long-running, broader, deeper harness in 2026. This blog post was originally published at Rapidflare’s website.

“Speeding Time to Market with Production-Ready Edge AI Solutions: From Wake Word Detection to Face Recognition,” a Presentation from Microchip Technology

Nick De Rosa, Kannan Srinivasagam, Edge AI Marketing Manager at Microchip Technology presents “Speeding Time to Market with Production-Ready Edge AI Solutions: From Wake Word Detection to Face Recognition” at the May 2026 Embedded Vision

Sony IMX412 vs Sony IMX676: A detailed comparison of Sony sensors for Embedded Vision Solutions

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. Key Takeaways: What Sony STARVIS 2 technology is and how it improves upon the original STARVIS

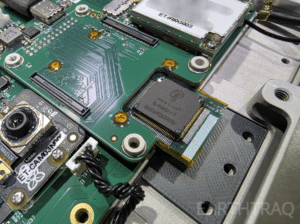

Mentium Technologies’ Luna-R1 AI Chip Selected for ET-01 Constellation Mission, First Multi-Satellite Deployment of Mentium Hardware

Launching on SpaceX Transporter-17 to Enable Autonomous Intelligence Across LEO Satellite Networks Goleta, CA — May 28, 2026 — Mentium Technologies today announced that its next-generation AI processing chip, Luna-R1, has been selected for deployment on the ET-01

Axelera AI and Andes Technology Partner to Power Next-Generation “Europa” AI Platform with High-Performance RISC-V AX65 Cores

EINDHOVEN, Netherlands & HSINCHU, Taiwan — June 1, 2026 — Axelera AI, the leading provider of high-performance, ultra-efficient edge AI solutions, and Andes Technology (TWSE: 6533), a premier supplier of high-efficiency 32/64-bit RISC-V processor cores, today announced

Technologies

“Enabling “GenAI Everywhere”: Flexible Model Compatibility and Insight-Driven Workflows,” a Presentation from Nota AI

Tae-Ho Kim, Co-Founder and CTO at Nota AI, presents “Enabling “GenAI Everywhere”: Flexible Model Compatibility and Insight-Driven Workflows” at the May 2026 Embedded Vision Summit. As generative AI models rapidly evolve, with increasingly dynamic transformer architectures and diverse framework variations, the challenge of bringing these models to the edge has grown dramatically.…

Akida Pico: The Tiny Brain Making “Always-On” AI a Reality

This blog post was originally published at BrainChip’s website. It is reprinted here with the permission of BrainChip. In the world of Edge AI, there’s always been a tradeoff: high intelligence, always-on capability, or long battery life. If you wanted a device to listen for a voice command or monitor something 24/7, you usually had to

Macnica Americas Becomes Authorized Distributor for NAMUGA Vision Connectivity

New relationship brings NAMUGA’s advanced camera modules and 3D sensing technologies to customers developing next-generation intelligent systems Solana Beach, California — June 1, 2026 — Macnica Americas announced that it is now an authorized distributor for NAMUGA Vision Connectivity, expanding access to NAMUGA’s advanced camera modules and 3D sensing technologies for developers of intelligent

Applications

Akida Pico: The Tiny Brain Making “Always-On” AI a Reality

This blog post was originally published at BrainChip’s website. It is reprinted here with the permission of BrainChip. In the world of Edge AI, there’s always been a tradeoff: high intelligence, always-on capability, or long battery life. If you wanted a device to listen for a voice command or monitor something 24/7, you usually had to

“Roads to Robots: How Generative AI Is Redefining Memory and Storage for Embodied AI,” a Presentation from Micron

Saideep Tiku, Principal System Architect at Micron, presents “Roads to Robots: How Generative AI Is Redefining Memory and Storage for Embodied AI” at the May 2026 Embedded Vision Summit. Generative AI has accelerated the automation of complex digital tasks, and now multimodal perception and reasoning make embodied AI the next…

“Speeding Time to Market with Production-Ready Edge AI Solutions: From Wake Word Detection to Face Recognition,” a Presentation from Microchip Technology

Nick De Rosa, Kannan Srinivasagam, Edge AI Marketing Manager at Microchip Technology presents “Speeding Time to Market with Production-Ready Edge AI Solutions: From Wake Word Detection to Face Recognition” at the May 2026 Embedded Vision Summit. Design teams are moving from edge AI evaluation to deployment and need production-ready, system-level…

Functions

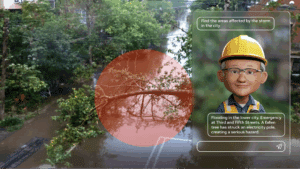

AI On: 3 Ways to Bring Agentic AI to Computer Vision Applications

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Learn how to integrate vision language models into video analytics applications, from AI-powered search to fully automated video analysis. Today’s computer vision systems excel at identifying what happens in physical spaces and processes, but lack the abilities to explain the

SAM3: A New Era for Open‑Vocabulary Segmentation and Edge AI

Quality training data – especially segmented visual data – is a cornerstone of building robust vision models. Meta’s recently announced Segment Anything Model 3 (SAM3) arrives as a potential game-changer in this domain. SAM3 is a unified model that can detect, segment, and even track objects in images and videos using both text and visual

TLens vs VCM Autofocus Technology

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. In this blog, we’ll walk you through how TLens technology differs from traditional VCM autofocus, how TLens combined with e-con Systems’ Tinte ISP enhances camera performance, key advantages of TLens over mechanical autofocus systems, and applications