Resources

In-depth information about edge AI and vision applications, technologies, products, markets, and trends.

The content in this section of the website comes from Edge AI and Vision Alliance members and other industry luminaries.

All Resources

Upcoming Webinar on Agentic Memory Systems

On April 16, 2026, at 1:00 pm EDT (10:00 am PDT), Boston.AI will deliver a webinar, “Remembering to Forget: Agentic Memory Systems and Context Constraints.” From the event page: As AI agents evolve from stateless

From hardware to intelligence: The Qualcomm AI camera platform for scalable security solutions

At ISC West 2026, Qualcomm Technologies showcases its vision for the future of smart camera and security. Key Takeaways: End-to-end AI development tools and the Qualcomm Insight Platform to enable customers to develop AI features

Lightweight Keyword Spotting Solution from Microchip

Microchip presents a customizable, target-agnostic solution to program wake words and voice commands. The ML model, generated and tested using a custom application, has low latency and a minimal memory footprint, making it ideal for

Accelerating Multi-Die Innovation: How Synopsys and Samsung are Shaping Chip Design

This blog post was originally published at Synopsys’s website. It is reprinted here with the permission of Synopsys. With the semiconductor industry shifting from monolithic chip designs to multi-die architectures — which leverage chiplets for improved flexibility, scalability,

2026: The Year Intelligence Gets Physical

This article was originally published at Analog Devices’ website. It is reprinted here with the permission of Analog Devices. Artificial intelligence is entering a new phase where models interpret contextual data whilst interacting with the physical

Why Night HDR Is More Challenging Than Daytime HDR

This blog post was originally published at Visidon’s website. It is reprinted here with the permission of Visidon. High Dynamic Range (HDR) imaging has become a standard feature in modern cameras, from smartphones to automotive and

AI-Assisted Coding: The Next Step in Abstraction

I’ve been using AI-assisted coding for my work a lot recently, and I’ll admit, I wasn’t sure how I felt about it. Was I cheating? How do I know it’s right? Do I admit to

Restar Framos Demo: Sony IMX927 105 MP Global Shutter Image Sensor

Prashant Mehta from Restar Framos presents the Sony IMX927, a high-performance 105-megapixel global shutter image sensor. The demonstration highlights the sensor’s extreme resolution and speed, showing its ability to capture live, detailed images of

A Smarter Vision for AI at ISC West 2026

Ambarella will be hosting an invitation-only exhibition at ISC West 2026, taking place March 25 – 27 at the Venetian Expo in Las Vegas, where we’ll demonstrate how edge AI is enabling the next generation

Technologies

Upcoming Webinar on Agentic Memory Systems

On April 16, 2026, at 1:00 pm EDT (10:00 am PDT), Boston.AI will deliver a webinar, “Remembering to Forget: Agentic Memory Systems and Context Constraints.” From the event page: As AI agents evolve from stateless responders into persistent, goal-directed systems, memory has become a central design challenge. The question is no longer just what agents

From hardware to intelligence: The Qualcomm AI camera platform for scalable security solutions

At ISC West 2026, Qualcomm Technologies showcases its vision for the future of smart camera and security. Key Takeaways: End-to-end AI development tools and the Qualcomm Insight Platform to enable customers to develop AI features once and scale across their entire product portfolio. Qualcomm Technologies’ camera solutions span video security, law enforcement and enterprise body

Lightweight Keyword Spotting Solution from Microchip

Microchip presents a customizable, target-agnostic solution to program wake words and voice commands. The ML model, generated and tested using a custom application, has low latency and a minimal memory footprint, making it ideal for resource-constrained embedded systems. The ML model can be integrated into voice-based applications running on any 32-bit microcontroller or microprocessor running

Applications

2026: The Year Intelligence Gets Physical

This article was originally published at Analog Devices’ website. It is reprinted here with the permission of Analog Devices. Artificial intelligence is entering a new phase where models interpret contextual data whilst interacting with the physical world in real time. At Analog Devices, Inc. (ADI), we call this Physical Intelligence: intelligent systems that can perceive, reason

From Warehouse to Wallet: New State of AI in Retail and CPG Survey Uncovers How AI Is Rewiring Supply Chains and Customer Experiences

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. The third annual NVIDIA State of AI in Retail and CPG survey shows why nine in 10 retailers will increase AI budgets in 2026, focusing on open-source models and software, as well as agentic and physical AI. Highlights

STMicroelectronics and Leopard Imaging Accelerate Robotics Vision with NVIDIA Jetson-ready Multi-sensor Module

Key Takeaways: Multimodal module combining 2D imaging, 3D depth sensing, and human-like motion perception; NVIDIA Holoscan Sensor Bridge ensuring multi-gigabit plug-and-play connectivity with Jetson platforms; fully supported by the NVIDIA Isaac open robot development platform. STMicroelectronics and Leopard Imaging® have introduced an all-in-one multimodal vision module for humanoid and other advanced robotics systems. Combining

Functions

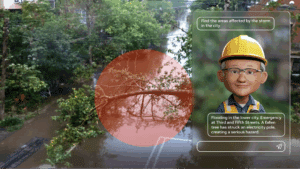

AI On: 3 Ways to Bring Agentic AI to Computer Vision Applications

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Learn how to integrate vision language models into video analytics applications, from AI-powered search to fully automated video analysis. Today’s computer vision systems excel at identifying what happens in physical spaces and processes, but lack the abilities to explain the

SAM3: A New Era for Open‑Vocabulary Segmentation and Edge AI

Quality training data – especially segmented visual data – is a cornerstone of building robust vision models. Meta’s recently announced Segment Anything Model 3 (SAM3) arrives as a potential game-changer in this domain. SAM3 is a unified model that can detect, segment, and even track objects in images and videos using both text and visual

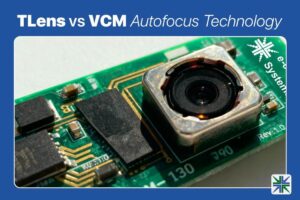

TLens vs VCM Autofocus Technology

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. In this blog, we’ll walk you through how TLens technology differs from traditional VCM autofocus, how TLens combined with e-con Systems’ Tinte ISP enhances camera performance, key advantages of TLens over mechanical autofocus systems, and applications