Resources

In-depth information about edge AI and vision applications, technologies, products, markets and trends.

The content in this section of the website comes from Edge AI and Vision Alliance members and other industry luminaries.

All Resources

“Invertible Light Technology: A Paradigm Shift for Depth Sensing,” a Presentation from MagikEye

Takeo Miyazawa, Founder and CEO at MagikEye, presents “Invertible Light Technology: A Paradigm Shift for Depth Sensing” at the May 2026 Embedded Vision Summit. Invertible Light Technology (ILT) is a physics- and geometry-based depth-sensing approach

Introducing Infineon’s Live Lab

This blog post was originally published at Infineon’s website. It is reprinted here with the permission of Infineon. You have just decided to evaluate one of Infineon’s PSOC™ family of MCUs, be it PSOC™ Edge

“Efficient Computer Vision at the Far Edge: Design and Training Under Constraints,” a Presentation from Lattice Semiconductor

Nicolas Widynski, AI Fellow at Lattice Semiconductor, presents “Efficient Computer Vision at the Far Edge: Design and Training Under Constraints” at the May 2026 Embedded Vision Summit. This session explores practical strategies for deploying computer

JetPack 7.2: The Production Moment for Physical AI

NVIDIA just shipped the most important Jetson release in years. Here’s what it means — and where Avocado OS fits in. This blog was originally published at Peridio’s website. It is reprinted here with

GlobalFoundries completes acquisition of Synopsys’ Processor IP Solutions Business, delivering a holistic technology platform for Physical AI

Combines GF’s Physical AI portfolio with MIPS’ RISC-V and software-to-silicon expertise to accelerate custom, software-first products for automotive, industrial and agentic edge platforms MALTA, N.Y., June 2, 2026 – GlobalFoundries (Nasdaq: GFS) (GF) today

“Why Your Next AI Accelerator Should Be an FPGA,” a Presentation from Efinix

Mark Oliver, VP of Marketing and Business Development at Efinix, presents “Why Your Next AI Accelerator Should Be an FPGA” at the May 2026 Embedded Vision Summit. Edge AI system developers often assume that AI

Running BitNet on Qualcomm Hexagon with custom 1.58 kernels

This blog post was originally published at ENERZAi’s website. It is reprinted here with the permission of ENERZAi. Today, we are excited to share a milestone that our team has been working toward for some

GSOC Solutions and Andes Technology Announce Strategic Partnership to Expand RISC-V CPU Options for Configurable SoC and Chiplet Platforms

SAN JOSE, Calif. – May 26, 2026 – GSOC Solutions, a premier ASIC development center located in Tel Aviv, Israel, and Andes Technology (TWSE: 6533), a leading supplier of high-performance, low-power RISC-V processor cores, announced a

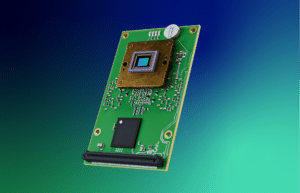

Vision Components at CVPR: VC EvoCam with Onboard Edge AI Processor and new MIPI Cameras with up to 24.5 Megapixels

Ettlingen, May 29, 2026. Following its successful US premiere at the Embedded Vision Summit, Vision Components is set to showcase its all-in-one intelligent board-level camera VC EvoCam at the Conference on Computer Vision and Pattern

Technologies

Macnica Americas Becomes Authorized Distributor for NAMUGA Vision Connectivity

New relationship brings NAMUGA’s advanced camera modules and 3D sensing technologies to customers developing next-generation intelligent systems Solana Beach, California — June 1, 2026 — Macnica Americas announced that it is now an authorized distributor for NAMUGA Vision Connectivity, expanding access to NAMUGA’s advanced camera modules and 3D sensing technologies for developers of intelligent

“Roads to Robots: How Generative AI Is Redefining Memory and Storage for Embodied AI,” a Presentation from Micron

Saideep Tiku, Principal System Architect at Micron, presents “Roads to Robots: How Generative AI Is Redefining Memory and Storage for Embodied AI” at the May 2026 Embedded Vision Summit. Generative AI has accelerated the automation of complex digital tasks, and now multimodal perception and reasoning make embodied AI the next…

Four Generations of the Rapidflare Agent Harness – Spark, Flame, Blaze and Forge

How the Rapidflare agent harness has evolved across four generations — from a simple RAG pipeline in 2023 to a long-running, broader, deeper harness in 2026. This blog post was originally published at Rapidflare’s website. It is reprinted here with the permission of Rapidflare. Since the start of Rapidflare, we have shipped four distinct

Applications

“Roads to Robots: How Generative AI Is Redefining Memory and Storage for Embodied AI,” a Presentation from Micron

Saideep Tiku, Principal System Architect at Micron, presents “Roads to Robots: How Generative AI Is Redefining Memory and Storage for Embodied AI” at the May 2026 Embedded Vision Summit. Generative AI has accelerated the automation of complex digital tasks, and now multimodal perception and reasoning make embodied AI the next…

“Speeding Time to Market with Production-Ready Edge AI Solutions: From Wake Word Detection to Face Recognition,” a Presentation from Microchip Technology

Nick De Rosa, Kannan Srinivasagam, Edge AI Marketing Manager at Microchip Technology, presents “Speeding Time to Market with Production-Ready Edge AI Solutions: From Wake Word Detection to Face Recognition” at the May 2026 Embedded Vision Summit. Design teams are moving from edge AI evaluation to deployment and need production-ready, system-level…

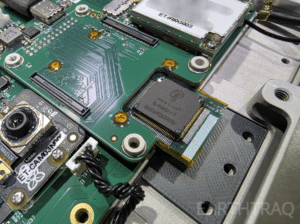

Mentium Technologies’ Luna-R1 AI Chip Selected for ET-01 Constellation Mission, First Multi-Satellite Deployment of Mentium Hardware

Launching on SpaceX Transporter-17 to Enable Autonomous Intelligence Across LEO Satellite Networks Goleta, CA — May 28, 2026 — Mentium Technologies today announced that its next-generation AI processing chip, Luna-R1, has been selected for deployment on the ET-01 mission, a constellation of satellites operating in Low Earth Orbit (LEO). The constellation is being developed by EarthTraq, previously operating in

Functions

AI On: 3 Ways to Bring Agentic AI to Computer Vision Applications

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Learn how to integrate vision language models into video analytics applications, from AI-powered search to fully automated video analysis. Today’s computer vision systems excel at identifying what happens in physical spaces and processes, but lack the abilities to explain the

SAM3: A New Era for Open‑Vocabulary Segmentation and Edge AI

Quality training data – especially segmented visual data – is a cornerstone of building robust vision models. Meta’s recently announced Segment Anything Model 3 (SAM3) arrives as a potential game-changer in this domain. SAM3 is a unified model that can detect, segment, and even track objects in images and videos using both text and visual

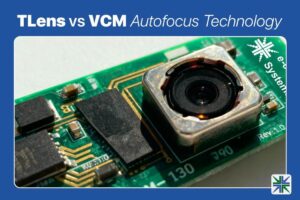

TLens vs VCM Autofocus Technology

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. In this blog, we’ll walk you through how TLens technology differs from traditional VCM autofocus, how TLens combined with e-con Systems’ Tinte ISP enhances camera performance, key advantages of TLens over mechanical autofocus systems, and applications