New SDK helps teams add real-time vision capabilities to robotics, industrial automation, security and edge AI systems with faster deployment, flexible integration and continuous model improvement

REDMOND, Wash. — May 11, 2026 — Synetic, Inc., a provider of computer vision and synthetic data solutions for real-world AI systems, today announced the debut of LYNX, a new computer vision SDK designed to help product teams build, adapt and deploy vision capabilities more quickly across real-world environments.

LYNX was introduced at the 2026 Embedded Vision Summit in Santa Clara, California, where Synetic demonstrated the SDK to engineers and product teams working across robotics, industrial automation, security and edge deployment.

LYNX brings together detection, segmentation, pose estimation, tracking, behavior recognition, monocular depth, 3D RGB outputs, OCR, zone analytics, line crossing and multi-stream management in a single SDK. The platform is designed to give developers computer vision outputs they can use directly in production systems, rather than requiring teams to stitch together multiple models, tools and vendor-specific pipelines.

LYNX is designed around Synetic’s physics-based synthetic data pipeline, which enables the company to generate edge cases, environmental variation and labeled training data without relying solely on expensive real-world data collection and manual annotation. When a model misses an important scenario, users can submit the failure case through the SDK; Synetic can then generate synthetic variations of that scenario and incorporate improvements into future model updates.

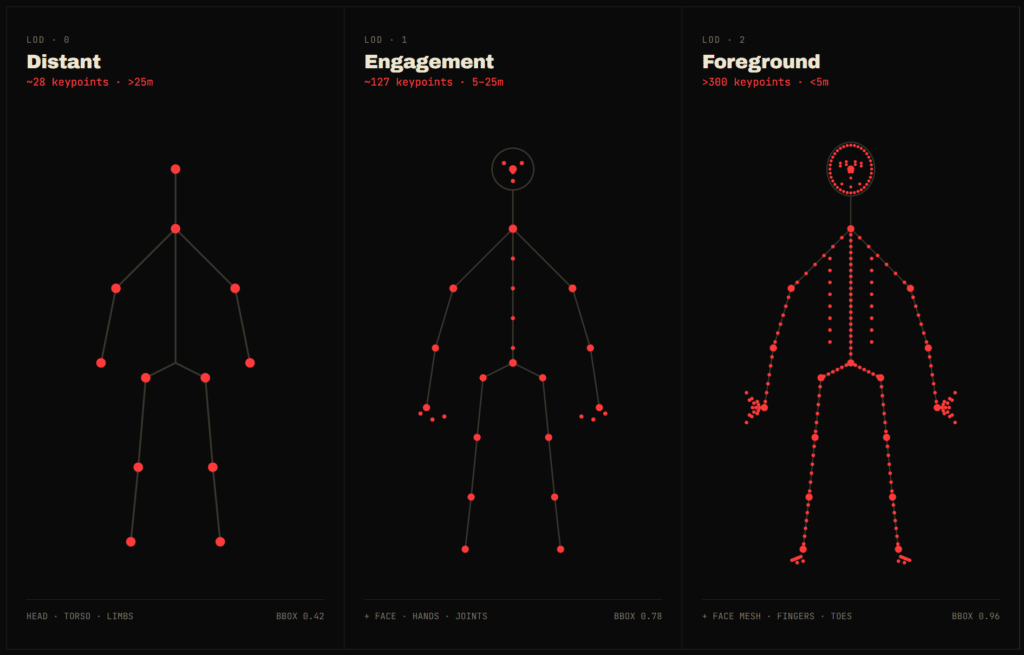

The SDK supports level-of-detail keypoints whose granularity adjusts automatically to the subject’s distance from the camera. For example, LYNX can provide skeletal anchors for distant subjects, more detailed joints and limbs at intermediate distances, and higher-resolution landmarks such as fingertips, facial points and eyelid landmarks for close-range subjects. This allows developers to use a single SDK across scenes where subject distance and camera viewpoint vary significantly.
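As an illustration only, the distance-based selection described above could be sketched as follows in Python. This is not the LYNX API: the names `KeypointTier`, `LodThresholds` and `select_tier`, and the 3 m and 12 m cut-offs, are assumptions made for the example, since the announcement does not publish thresholds or interfaces.

```python
from dataclasses import dataclass
from enum import Enum


class KeypointTier(Enum):
    """Three keypoint tiers mirroring the examples in the announcement."""
    ANCHORS = "skeletal anchors"       # distant subjects
    JOINTS = "joints and limbs"        # intermediate distances
    LANDMARKS = "fine landmarks"       # close-range subjects


@dataclass(frozen=True)
class LodThresholds:
    # Hypothetical cut-offs in metres; not values published by Synetic.
    near_m: float = 3.0
    far_m: float = 12.0


def select_tier(distance_m: float,
                thresholds: LodThresholds = LodThresholds()) -> KeypointTier:
    """Pick a keypoint tier from the subject's estimated camera distance."""
    if distance_m < 0:
        raise ValueError("distance_m must be non-negative")
    if distance_m < thresholds.near_m:
        return KeypointTier.LANDMARKS
    if distance_m < thresholds.far_m:
        return KeypointTier.JOINTS
    return KeypointTier.ANCHORS


if __name__ == "__main__":
    for d in (1.5, 6.0, 25.0):
        print(f"{d:5.1f} m -> {select_tier(d).value}")
```

A production system would more likely key the decision on the subject's apparent size in pixels than on metric distance, but the tiering logic would look similar.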

LYNX also includes behavior outputs as first-class SDK signals. In addition to identifying objects and people, the SDK can return behavior states such as walking, running, limping, falling, concealing and loitering, along with confidence and timing information. These capabilities are intended to support applications such as industrial safety, security monitoring, retail analytics, sports and fitness analysis, and robotics perception.
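To make the idea of behavior signals with confidence and timing concrete, the following Python sketch shows one way an application might filter such outputs into alerts. The `BehaviorEvent` record, its field names and the `select_alerts` helper are hypothetical and do not reflect the actual LYNX interface; only the behavior state names are taken from the announcement.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set


@dataclass(frozen=True)
class BehaviorEvent:
    """Hypothetical record for one behavior signal (not the LYNX schema)."""
    track_id: int        # which tracked subject the event belongs to
    state: str           # e.g. "walking", "falling", "loitering"
    confidence: float    # assumed to lie in [0.0, 1.0]
    start_s: float       # event start, seconds from stream start
    end_s: float         # event end, seconds from stream start

    @property
    def duration_s(self) -> float:
        return self.end_s - self.start_s


def select_alerts(events: Iterable[BehaviorEvent],
                  states: Set[str],
                  min_confidence: float = 0.8,
                  min_duration_s: float = 0.0) -> List[BehaviorEvent]:
    """Keep events in the watched states that are confident and long enough."""
    return [
        e for e in events
        if e.state in states
        and e.confidence >= min_confidence
        and e.duration_s >= min_duration_s
    ]


if __name__ == "__main__":
    stream = [
        BehaviorEvent(1, "walking", 0.95, 0.0, 4.0),
        BehaviorEvent(2, "loitering", 0.90, 10.0, 75.0),
        BehaviorEvent(3, "loitering", 0.60, 12.0, 90.0),
        BehaviorEvent(4, "falling", 0.88, 30.0, 31.0),
    ]
    for e in select_alerts(stream, {"loitering"}, min_duration_s=60.0):
        print(e.track_id, e.state, e.duration_s)
```

Combining a confidence threshold with a minimum duration is a common way to suppress brief or uncertain detections for states such as loitering, while a short-lived state such as falling would typically be watched with no duration requirement.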

For robotics applications, LYNX combines detection, segmentation, monocular depth and 3D RGB outputs to support tasks such as pick-and-place and grasp planning from a single RGB camera. For industrial and security use cases, the SDK combines detection, zone analytics, pose and behavior to help teams distinguish between simple object presence and more meaningful activity patterns.

LYNX is being developed for deployment across six platform targets: iOS, Android, Linux, Windows, macOS and NVIDIA Jetson. The SDK is built with a C-first architecture and is designed to support native bindings for languages including Python, Rust, Java and C#.

Synetic is now accepting requests for beta access to LYNX. More information is available at https://golynx.ai/.

About LYNX

LYNX is a computer vision SDK from Synetic designed to help teams add real-time perception capabilities to real-world products. The SDK combines detection, segmentation, pose, tracking, behavior recognition, depth, OCR, analytics and multi-stream management in one platform. Built using Synetic’s physics-based synthetic data pipeline, LYNX is designed to help teams deploy computer vision faster, adapt to edge cases and support production use cases across robotics, industrial automation, security, retail analytics and edge AI.