This blog post was originally published at Cadence's website. It is reprinted here with the permission of Cadence.

Image and vision processing require a tremendous amount of processing power, not to mention a large amount of memory bandwidth. And when you consider the mobile and wearable devices that utilize these functions, it’s clear why low energy consumption is also a priority. Cadence just announced the new Tensilica Vision P5 Imaging/Vision DSP that can help you meet your performance/energy consumption targets. Himanshu Sanghavi, a design engineering group director at Cadence, explains how.

What are some types of processing that are driving the need for a new image/vision processing DSP?

There are two key types of processing. The first is post-processing to make an image better, through techniques like color correction, sharpening, and noise reduction. The second is analytics: What is the image? Is it a dog or a human being? A male or a female? While it's clear that all of this involves a huge amount of processing power, we also can't disregard memory bandwidth. In fact, in many applications, memory bandwidth becomes the limiting factor.

What kinds of use cases can the Tensilica Vision P5 address?

Key application domains include drones and robots, security, mobile, and advanced driver assistance systems (ADAS). Computer vision, enabled by the Vision P5 DSP, gives these products intelligence.

Remote-controlled drones, for example, can capture 360-degree visuals of your child’s soccer game and send the live feed to your phone. Manufacturers are also putting cameras on drones so they can sense their surroundings for collision avoidance. In robotics, we’re seeing industrial applications where vision technology is used to detect and recognize objects and respond to them.

For security, low-cost cameras are being deployed everywhere. Early on, it was about capturing and storing information cheaply. Now, these cameras are generating terabytes of information, so the next set of enhancements is to add analytics. Then, the camera can capture all the time and mark the points when something interesting is happening, like when there’s motion. For example, today you can buy door lights with cameras and analytics: when someone is within some distance of your door, the system alerts you on your phone, but the analytics inside prevent a passing car from triggering an alert.

In mobile devices like phones, we’re seeing more analytics paired with the camera. In next-generation phones, face recognition to unlock the phone could become standard. It’s doable today in some very high-end phones.

As for ADAS, all of the smarts in cars these days are from electronics. The research lies in making cars safer and more efficient. Over the next few years, features like lane departure warning and collision avoidance will become standard.

How are these use cases impacting performance and power requirements?

The sheer amount of data you need to process in these applications is large. For drone or ADAS applications, you might have two to three different cameras at high definition. The techniques required for analytics are also compute-intensive. For example, detecting objects, recognizing faces (even when the person is not wearing their glasses or has had a haircut), and recognizing cars, with all of their different models and colors and shapes, takes a tremendous amount of processing.
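To put a rough number on "large," here is a back-of-the-envelope sketch of the raw pixel data such a camera setup produces. The resolution (1920x1080), frame rate (30 fps), and 2 bytes per pixel are illustrative assumptions, not figures from the interview, and the function name is hypothetical.

```python
def raw_video_bytes_per_second(cameras, width, height, fps, bytes_per_pixel):
    """Raw (uncompressed) pixel data produced per second by all cameras."""
    return cameras * width * height * fps * bytes_per_pixel


# Assumed figures (not from the interview): 1080p, 30 fps, 2 bytes per pixel.
for cameras in (2, 3):
    rate = raw_video_bytes_per_second(cameras, 1920, 1080, 30, 2)
    print(f"{cameras} cameras: {rate / 1e6:.1f} MB/s of raw pixels")
```

Under these assumptions, that is hundreds of megabytes per second before any algorithm has touched the data, and every extra pass over a frame multiplies the memory traffic. This is one way to see why memory bandwidth can become the limiting factor.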

As computer vision techniques get deployed in mobile devices, power efficiency in performing these computations becomes important to preserve battery life. Even in server-based applications, power is important because of the large number of computations being performed on the vast amounts of data stored in the cloud. For example, the new version of Google Photos is constantly running analytics on photos that you upload, allowing you to make searches such as “show me pictures with a dog” from your photo collection.

Why would the Vision P5 DSP be a compelling alternative to using a CPU for image/vision processing?

A general-purpose CPU can take the same amount of area on the silicon die as the Vision P5 DSP, but the Vision P5 DSP provides significantly higher performance: 10-20X higher, based on the number of video frames processed for people detection, for instance. If you compare a multi-core CPU with eight cores and lots of memory against the Vision P5 DSP, the CPU consumes significantly more power to do the same task. As an embedded processor, the Vision P5 DSP needs to be small and efficient.

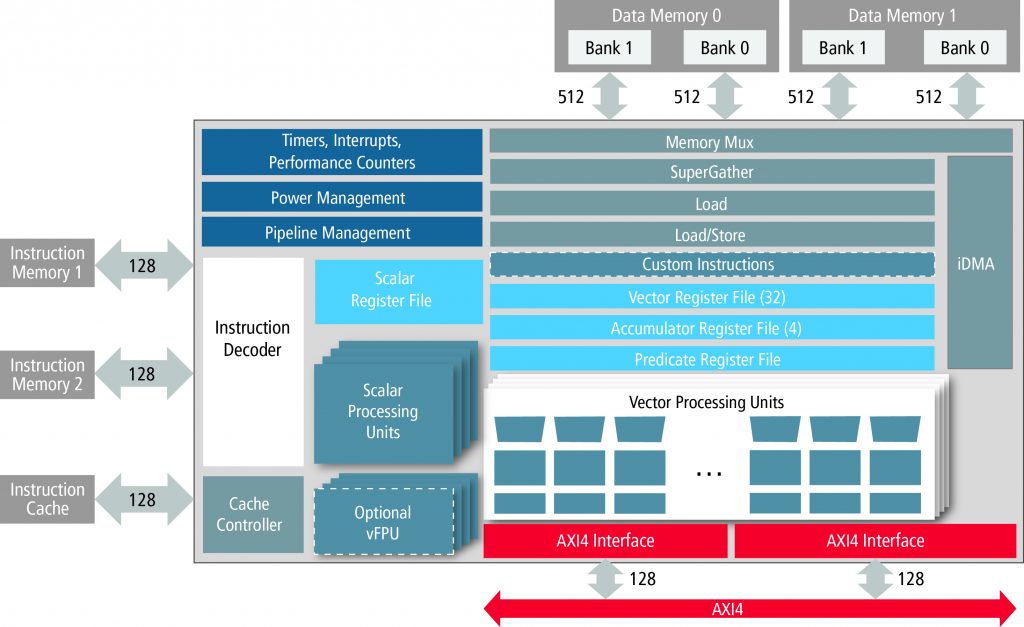

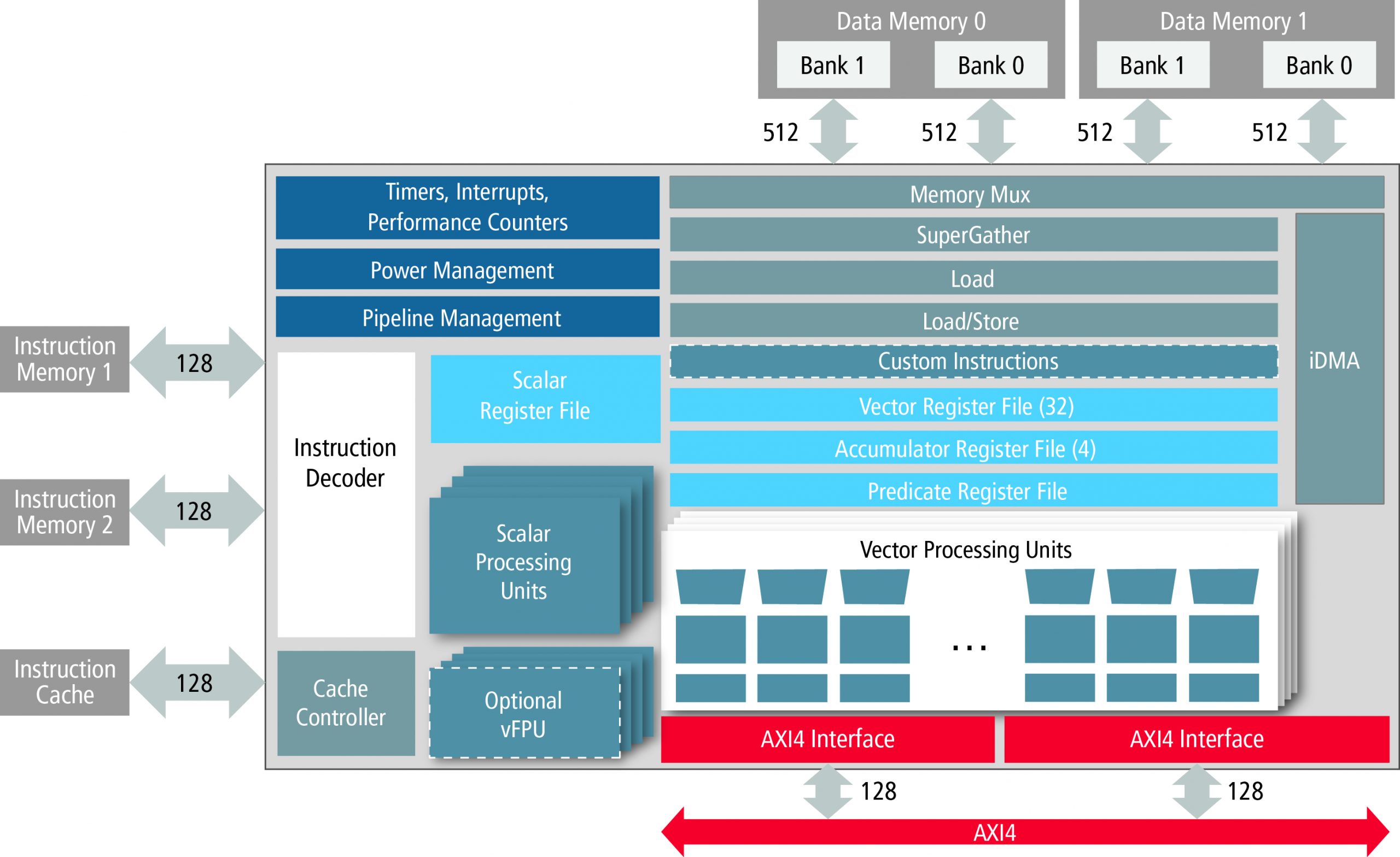

Tensilica Vision P5 Architecture

How is the Vision P5 DSP designed to support hundreds of pixel operations per cycle per core?

With its VLIW architecture, the Vision P5 DSP can do multiple operations at the same time, providing parallelism in the tasks. A typical scalar processor, by comparison, does one operation at a time. The Vision P5 DSP's SIMD engine can do the same operation on multiple pieces of data at the same time, providing parallelism in terms of data processing. The Vision P5 DSP is a wide DSP that can operate on 32 data elements in a single operation, with 4 to 5 operations happening in parallel. Also, its instruction set is specifically designed for image and vision processing algorithms.
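The two kinds of parallelism multiply. The sketch below is an idealized model, not a description of the actual pipeline: it takes the 32 data elements and 4 to 5 parallel operations quoted above, and assumes every slot is filled each cycle and that memory never stalls. The function names and the 1920-pixel row are hypothetical.

```python
def peak_element_ops_per_cycle(simd_lanes, vliw_slots):
    """Idealized peak: every VLIW slot issues one SIMD op across all lanes."""
    return simd_lanes * vliw_slots


def cycles_for_elementwise_op(num_elements, simd_lanes):
    """Cycles to apply one operation to every element, one SIMD op per cycle."""
    return -(-num_elements // simd_lanes)  # ceiling division


# 32 lanes and 4 to 5 parallel operations are the figures from the interview.
for slots in (4, 5):
    print(f"{slots} slots: {peak_element_ops_per_cycle(32, slots)} element ops/cycle")

# Hypothetical example: one operation over a 1920-pixel image row.
row = 1920
print("scalar cycles:", cycles_for_elementwise_op(row, 1))
print("32-lane SIMD cycles:", cycles_for_elementwise_op(row, 32))
```

In this simplified model, 32 lanes times 4 to 5 operations gives 128 to 160 element operations per cycle, and a single pass over the example row drops from 1920 cycles to 60.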

What makes the Vision P5 DSP energy efficient?

Algorithmic-level optimization makes it energy efficient. By designing an instruction set and datapath that’s tuned toward processing imaging algorithms, you can operate on that data more efficiently as compared to a general-purpose CPU.

We also took extensive care to apply design techniques for low power, such as using appropriate types of clock gating and sharing hardware as much as possible to minimize the implementation area for the task that needs to be done. We did extensive analysis of the instruction set, covering cycle-count performance through code profiling as well as the area/power characteristics of the physical implementation using Cadence EDA tools, to obtain a very efficient hardware implementation. We gave up on certain instructions that might provide fast execution but cause inordinately high energy consumption. We made the right tradeoffs for good performance at an acceptable power level.

How can designers use a Tensilica Instruction Extension (TIE) to further enhance performance of the Vision P5 DSP?

The Vision P5 design is already well optimized for the typical image processing and computer vision tasks a customer may want to do on this DSP. But a customer may still need to run a proprietary, specialized piece of code at a high level of performance. This is where the Tensilica Instruction Extension (TIE) capability can be used to add specialized instructions and hardware to make the processor better suited to run that application.

As an example, consider histogram calculation. The Vision P5 DSP is very good at doing histogram calculation as is. But for customers wanting to have very-high-performance histogram calculations, we’ve created a histogram acceleration package that performs histogram calculations about 10X faster for a modest increase in the hardware. This was done using the same TIE technology that is available to all our customers. The TIE capability is very flexible and customers are able to use this technology to add their own custom extensions to obtain similar performance increases for their specialized applications.
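For readers unfamiliar with why a histogram is a natural target for acceleration, here is a minimal sketch of the computation itself. It is plain Python, not TIE code and not the histogram acceleration package; the function names are hypothetical. The key point is that each update is a read-modify-write at an address chosen by the data, so two pixels processed in parallel may hit the same bin. The second function shows one generic software workaround (a private histogram per lane, merged at the end), which is not necessarily what the package does.

```python
def histogram_scalar(pixels, num_bins=256):
    """Count how many pixels fall into each intensity bin, one at a time."""
    counts = [0] * num_bins
    for value in pixels:
        counts[value] += 1  # data-dependent read-modify-write
    return counts


def histogram_banked(pixels, num_bins=256, lanes=32):
    """Same result using one private histogram per lane, merged at the end.

    Lanes never update the same counter, so their updates could run in
    parallel without conflicts, at the cost of `lanes` times the storage.
    """
    banks = [[0] * num_bins for _ in range(lanes)]
    for i, value in enumerate(pixels):
        banks[i % lanes][value] += 1
    return [sum(bank[b] for bank in banks) for b in range(num_bins)]


# Hypothetical 8-bit pixel data for illustration.
pixels = [0, 0, 17, 255, 17, 17, 3, 255]
assert histogram_scalar(pixels) == histogram_banked(pixels)
print(histogram_scalar(pixels)[17])
```

Dedicated instructions and hardware can handle this kind of conflicting update pattern more directly than general-purpose code, which is the sort of specialization the interview describes.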