LETTER FROM THE EDITOR

Dear Colleague,

The Embedded Vision Summit is coming soon: May 11-13, Santa Clara, California. As a subscriber, you can use code 26EVSUM-NL for 10% off your pass when you register before May 10.

The Summit is a fantastic opportunity to keep up with the latest trends in edge AI topics, such as…vision-language models. VLMs are advancing fast, but it’s not always clear where they add value. Many teams struggle to turn demos into dependable products. This year, the Summit will host a plenary panel session to cut through the hype and focus on where VLMs make sense and the barriers to deploying them in real systems. Moderated by Phil Lapsley, the panel will feature Rakshit Agrawal of Microsoft, Vaibhav Ghadiok of Hayden AI, David Selinger of Deep Sentinel and Tushar Sheth of Superfocus.ai.

Learn more about the panel here

Another fantastic resource is our incredible lineup of exhibitors and sponsors at the forefront of AI and computer vision. It’s a unique opportunity to explore the latest processors, cameras, algorithms, libraries and tools: see them yourself, learn how they work and get your questions answered by the designers themselves!

See the 2026 Sponsor and Exhibitor Directory

Lastly, the Edge AI and Vision Product of the Year Awards celebrate the innovation and achievement of the industry’s leading companies that are enabling and developing products incorporating edge AI and computer vision technologies. This year’s award winners were announced last Monday, and an awards ceremony will take place on-stage during the Summit. Congratulations to the winners:

Watch the winners accept their awards at the Summit: use code 26EVSUM-NL for 10% off your pass when you register online!

Whether you can attend the Summit or not, this newsletter will continue to be your source for insightful technical and business content on edge AI and vision technology. This week, we’ll look at a novel use of channel state information that turns existing Wi-Fi devices into powerful sensors with very low compute requirements. We’ll also hear from a quartet of Alliance Members bringing a radar-camera sensor fusion stack to fruition. Lastly, we’ll get two takes on edge compute: one on neuromorphic computing, the other on trends in the semiconductor industry.

Without further ado, let’s get to the content.

Erik Peters

BUILDING AND DEPLOYING REAL-WORLD ROBOTS

LOW-COST SENSING, RICHER PERCEPTION

Non-Contact Vital Sign Monitoring Using Low-Cost WiFi Devices

Non-contact vital sign monitoring is moving toward commodity infrastructure. Katia Obraczka, Professor of Computer Science and Engineering at the University of California, Santa Cruz, along with students Nayan Bhatia and Pranay Kocheta, details how standard Wi-Fi devices can measure heart rate from across a room by analyzing channel state information (CSI). Subtle changes in RF signals caused by physiological motion (e.g., heartbeat) are extracted and processed using lightweight machine learning models. The system runs on low-cost hardware such as ESP32 and Raspberry Pi-class devices, achieving clinically relevant accuracy with minimal compute and no specialized sensors. CSI-based techniques have been explored for years, but advances in ML are making them more practical and robust for real-world environments.


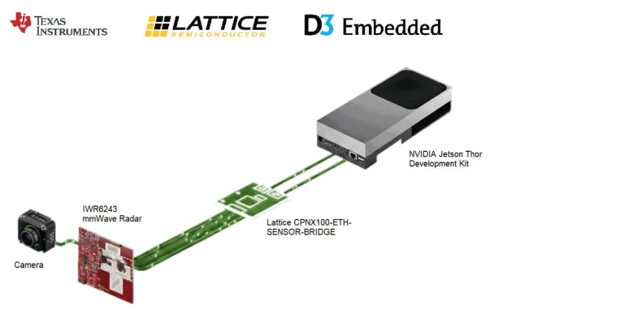

Texas Instruments outlines a robotics perception pipeline that combines TI mmWave radar, camera input, a Lattice sensor bridge, NVIDIA Holoscan and D3 integration software. It explains how synchronized radar and camera data are moved into GPU-accessible memory with low CPU overhead, then processed for radar point-cloud generation, vision inference and multimodal fusion. The setup is aimed at cases where cameras alone are less reliable, using radar to add range, velocity and resilience in low-visibility conditions or around hard-to-detect obstacles. The result is a reference architecture for building low-latency sensor-fusion systems for humanoids and other autonomous robots.

RETHINKING COMPUTE AT THE EDGE

Neuromorphic Computing Enables Ultra-low Power Edge Devices

Helbling explains why neuromorphic computing is drawing attention for ultra-low-power edge systems. The piece centers on two architectural advantages: reducing the energy and latency cost of moving data between memory and compute, and processing sparse, event-driven inputs only when the signal changes. It also notes that end-to-end efficiency depends on more than the compute core, since sensor interfaces, encoding and output handling can erode power savings if they are not designed carefully. Helbling suggests neuromorphic approaches are promising for always-on workloads such as wearables, implants and monitoring devices, though toolchains, hardware availability and engineering maturity still limit broader adoption.

Key Trends Shaping the Semiconductor Industry in 2026

HTEC argues that the semiconductor industry is moving into a phase where software ecosystems, inference efficiency and power constraints matter as much as raw silicon performance. It looks at several linked trends, including the growing importance of compiler toolchains and workload portability, the challenge of fragmented edge NPU software stacks, the rise of chiplet-based architectures and a shift toward evaluating systems by cost, latency and energy per inference rather than peak FLOPS alone. It also highlights data center power availability as an emerging constraint and connects that pressure to greater interest in edge deployment and physical AI applications.

UPCOMING INDUSTRY EVENTS

Embedded Vision Summit: May 11-13, Santa Clara, California
Newsletter subscribers may use the code 26EVSUM-NL for 10% off the price of registration until May 10.

High-Speed and High-Resolution Vision with the Sony IMX925/935 and SLVS-EC – RESTAR FRAMOS Webinar: May 12, 10:00 am CEST

– Intel Webinar: May 27, 10:00 am PT

FEATURED NEWS

The Edge AI and Vision Alliance has unveiled the 2026 Product of the Year Award Winners

Lattice will collaborate with Texas Instruments to accelerate edge AI for robotics and industrial applications

Intel has launched its Core Series 3 processors with advanced features for commercial and edge devices

Image Quality Labs has released the IQL/poLight/Sunex MLens® EVK System for embedded vision and machine vision development