Entertainment Applications for Embedded Vision

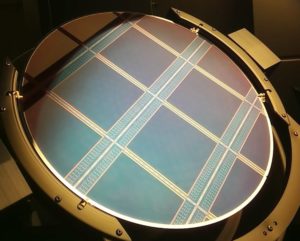

STMicroelectronics and Sphere Studios Reveal New Details On the World’s Largest Cinema Image Sensor

Sensor custom created for Big Sky – the world’s most advanced camera system – and is used to capture ultra-high-resolution content for Sphere in Las Vegas. Burbank, CA, and Geneva, Switzerland – January 11, 2024 – Sphere Entertainment Co. (NYSE: SPHR) today revealed new details on its work with STMicroelectronics (NYSE: STM) (“ST”), a global

Qualcomm Accelerates New Wave of Mixed Reality Experiences with Snapdragon XR2+ Gen 2

Highlights: The Snapdragon XR2+ Gen 2 supports 4.3K per eye resolution and 12 or more concurrent cameras to deliver crisp, immersive mixed reality (MR) and virtual reality (VR) experiences. Samsung and Google to provide leading XR experiences by utilizing Snapdragon XR2+ Gen 2. Jan 4, 2024 | San Diego | Qualcomm Technologies, Inc. today announced

Extended Reality Optics Industry to Reach US$ 5.1 Billion in 2034

For more information, visit https://www.idtechex.com/en/research-report/optics-for-virtual-augmented-and-mixed-reality-2024-2034-technologies-players-and-markets/969. AR combiners including diffractive (surface relief/holographic) and reflective (geometric) waveguides, birdbath and freespace holographic combiners. Lenses for VR including pancake and geometric phase lenses, and AR prescription correction. This report characterizes the optics industry, analyzing markets, technologies and players. It provides in-depth coverage of 13 individual optics technologies and forecasts

Qualcomm and Meta are Expanding Your Reality: Here’s How

This blog post was originally published at Qualcomm’s website. It is reprinted here with the permission of Qualcomm. New Snapdragon XR processors power Meta Quest 3 and the Ray-Ban Meta smart glasses collection to revolutionize your reality. Qualcomm Technologies and Meta have a long history of driving innovation in the extended reality (XR) industry. Our

A Tale of Two Realities: Mapping Spatial Computing’s Next Decade

Spatial computing promises to transform the way we interact with our devices as computing goes truly 3D, with early signs of change already underway. While Apple’s upcoming Vision Pro is bringing new excitement to this space, gaming-focused VR (Virtual Reality) headsets from companies including Meta, Sony, and Pico have sold millions of units, and AR

“Computer Vision in Sports: Scalable Solutions for Downmarkets,” a Presentation from Sportlogiq

Mehrsan Javan, Co-founder and CTO of Sportlogiq, presents the “Computer Vision in Sports: Scalable Solutions for Downmarket Leagues” tutorial at the May 2023 Embedded Vision Summit. Sports analytics is about observing, understanding and describing the game in an intelligent manner. In practice, this requires a fully automated, robust end-to-end pipeline, spanning from visual input, to

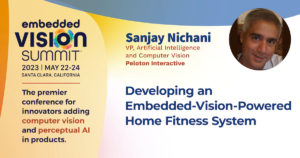

“Developing an Embedded Vision AI-powered Fitness System,” a Presentation from Peloton Interactive

Sanjay Nichani, Vice President for Artificial Intelligence and Computer Vision at Peloton Interactive, presents the “Developing an Embedded Vision AI-powered Fitness System” tutorial at the May 2023 Embedded Vision Summit. The Guide is Peloton’s first strength-training product that runs on a physical device and also the first that uses AI technology. It turns any TV

What is Sports Analytics, and How is Embedded Vision Reimagining It?

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. Embedded vision and sports analytics go hand-in-hand as the collected image and video data can help analyze player speed/performance, ball position, etc. Find out how these two technologies have been changing the world of sports

Buying an Autonomous Electric Car Will Feel More Like Buying a Laptop Than a Car

Computer chips have been part of cars for a long time, but no one really cares about them until they stop working or they are late to the production line, grinding manufacturing to an industry-shaking halt. However, the research within IDTechEx’s “Semiconductors for Autonomous and Electric Vehicles 2023-2033” report shows that trends within the automotive

“Develop Next-gen Camera Apps Using Snapdragon Computer Vision Technologies,” a Presentation from Qualcomm

Judd Heape, VP of Product Management for Camera, Computer Vision and Video Technology at Qualcomm Technologies, presents the “Develop Next-gen Camera Apps Using Snapdragon Computer Vision Technologies” tutorial at the May 2023 Embedded Vision Summit. The Qualcomm Snapdragon mobile platform powers the world’s best smartphones, XR headsets, PCs, wearables, automobiles and IoT products. These devices

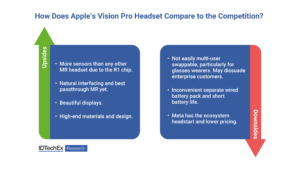

What Apple’s Vision Pro Mixed Reality Headset Does Differently

On June 5th, Apple ended years of speculation and finally announced a mixed reality (MR) headset. The US$3499 Vision Pro headset confirmed some expectations and confounded others. IDTechEx has been tracking the mixed, augmented and virtual reality markets since 2015, with reports on headsets and accessories and optics available now and a report on displays

Teledyne Cameras Enable VR Play at TOCA Social

Popular restaurant chain selects Teledyne’s Genie Nano cameras for its sports-themed VR restaurants. Waterloo, CANADA – December 8, 2022 – Teledyne DALSA is pleased to announce that TOCA Social, the world’s first soccer and dining experience, has chosen Teledyne DALSA Genie Nano cameras to enable the capture of play in its interactive soccer-based competitive socializing

“A New AI Platform Architecture for the Smart Toys of the Future,” a Presentation from Xperi

Gabriel Costache, Senior R&D Director at Xperi, presents the “A New AI Platform Architecture for the Smart Toys of the Future” tutorial at the May 2022 Embedded Vision Summit. From a parent’s perspective, toys should be safe, private, entertaining and educational, with the ability to adapt and grow with the child. For natural interaction, a toy

May 2022 Embedded Vision Summit Slides

The Embedded Vision Summit was held on May 16-19, 2022 in Santa Clara, California, as an educational forum for product creators interested in incorporating visual intelligence into electronic systems and software. The presentations delivered at the Summit are listed below. All of the slides from these presentations are included in…

Vision-Based Sports Analytics, Soccer Training and Player Assessment Enabled by Teledyne FLIR Machine Vision Cameras

This blog post was originally published at Teledyne FLIR’s website. It is reprinted here with the permission of Teledyne FLIR. Football (or soccer) is the world’s favorite pastime as well as a huge industry. Top clubs cannot afford to make the wrong personnel decisions – and key players need to recover as quickly as possible

May 2021 Embedded Vision Summit Slides

The Embedded Vision Summit was held online on May 25-28, 2021, as an educational forum for product creators interested in incorporating visual intelligence into electronic systems and software. The presentations delivered at the Summit are listed below. All of the slides from these presentations are included in PDF form. To…

MediaTek’s MT9638 4K Smart TV Chip Ushers in a New Era of AI-Enabled Interactive Multimedia Experiences

New chip pairs innovative AI-powered features with the latest connectivity and display technologies. HSINCHU, Taiwan – March 3, 2021 – MediaTek today announced its new 4K smart TV chip, the MT9638, with an integrated high-performance AI processing unit (APU). MT9638 supports cutting-edge AI-enhancement technologies such as AI super resolution, AI picture quality and AI voice assistants,

September 2020 Embedded Vision Summit Slides

The Embedded Vision Summit was held online on September 15-25, 2020, as an educational forum for product creators interested in incorporating visual intelligence into electronic systems and software. The presentations delivered at the Summit are listed below. All of the slides from these presentations are included in PDF form. To…