Object Tracking Functions

Sony Semiconductor Demonstration of On-sensor YOLO Inference with the Sony IMX500 and Raspberry Pi

Amir Servi, Edge AI Product Manager at Sony Semiconductors, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Servi demonstrates the IMX500 — the first vision sensor with integrated edge AI processing capabilities. Using the Raspberry Pi AI Camera and Ultralytics YOLOv11n models, Servi showcases real-time

Namuga Vision Connectivity Demonstration of Compact Solid-state LiDAR for Automotive and Robotics Applications

Min Lee, Business Development Team Leader at Namuga Vision Connectivity, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Lee demonstrates a compact solid-state LiDAR solution tailored for automotive and robotics industries. This solid-state LiDAR features high precision, fast response time, and no moving parts—ideal for

How Driver Monitoring Cameras Improve Driving Safety and Their Key Features

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. Driver monitoring cameras have become widely accepted as a force in improving road safety. They go a long way to address the risks associated with driver inattention and fatigue by helping continuously observe driver behavior.

Namuga Vision Connectivity Demonstration of an AI-powered Total Camera System for an Automotive Bus Solution

Min Lee, Business Development Team Leader at Namuga Vision Connectivity, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Lee demonstrates his company’s AI-powered total camera system. The system is designed for integration into public transportation, especially buses, enhancing safety and automation. It includes front-view, side-view,

Namuga Vision Connectivity Demonstration of a Real-time Eye-tracking Camera Solution with a Glasses-free 3D Display

Min Lee, Business Development Team Leader at Namuga Vision Connectivity, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Lee demonstrates a real-time eye-tracking camera solution that accurately detects the viewer’s eye position and angle. This data enables a glasses-free 3D display experience using an advanced

Teledyne FLIR Demonstration of an Advanced Thermal Imaging Camera Enabling Automotive Safety Improvements

Ethan Franz, Senior Software Engineer at Teledyne FLIR, demonstrates the company’s latest edge AI and vision technologies and products in Lattice Semiconductor’s booth at the 2025 Embedded Vision Summit. Specifically, Franz demonstrates a state-of-the-art thermal imaging camera for automotive safety applications, designed using Lattice FPGAs. This next-generation camera, also incorporating Teledyne FLIR’s advanced sensing technology,

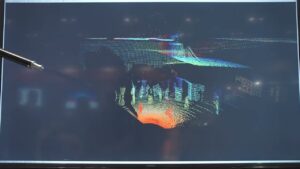

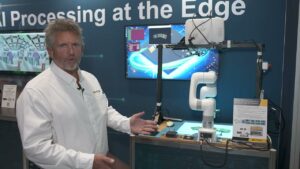

Lattice Semiconductor Demonstration of Random Bin Picking Based on Structured-Light 3D Scanning

Mark Hoopes, Senior Director of Industrial and Automotive at Lattice Semiconductor, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Hoopes demonstrates how Lattice FPGAs increase performance, reduce latency and jitter, and reduce overall power for a random bin picking robot. The Lattice FPGA offloads the

Stereo ace for Precise 3D Images Even with Challenging Surfaces

The new high-resolution Basler Stereo ace complements Basler’s 3D product range with an easy-to-integrate series of active stereo cameras that are particularly suitable for logistics and factory automation. Ahrensburg, July 10, 2025 – Basler AG introduces the new active 3D stereo camera series Basler Stereo ace consisting of 6 camera models and thus strengthens its position as

Cadence Demonstration of Waveguide 4D Radar Central Computing on a Tensilica Vision DSP-based Platform

Sriram Kalluri, Product Marketing Manager for Cadence Tensilica DSPs, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Kalluri demonstrates the use of the Tensilica Vision 130 (P6) DSP for advanced 4D radar computing for perception sensing used in ADAS applications. The Vision 130 DSP is

Why HDR and LED Flicker Mitigation Are Game-changers for Forward-facing Cameras in ADAS

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. In ADAS, forward-facing cameras capture traffic signs, signals, and pedestrians at farther distances using a narrow field of view (FOV). This narrower angle enables the camera to focus on distant objects with greater accuracy, making

Achieving High-speed Automatic Emergency Braking with AI-driven 4D Imaging Radar

This blog post was originally published at Ambarella’s website. It is reprinted here with the permission of Ambarella. Across the globe, regulators are accelerating efforts to make roads safer through the widespread adoption of Automatic Emergency Braking (AEB). In the United States, the National Highway Traffic Safety Administration (NHTSA) implemented a sweeping regulation that requires

Network Optix Demonstration of How the Company is Powering Scalable Data-driven Video Infrastructure

Tagir Gadelshin, Director of Product at Network Optix, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Gadelshin demonstrates how the company’s latest release, Gen 6 Enterprise, is enabling cloud-powered, event-driven video infrastructure for enterprise organizations at scale. Built on Nx EVOS, Gen 6 Enterprise supports

Network Optix Demonstration of Seamless AI Model Integration with Nx AI Manager

Robert van Emdem, Senior Director of Data Science at Network Optix, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Van Emdem demonstrates how Nx AI Manager enables seamless deployment of AI models across a wide variety of hardware, including GPU, VPU, and CPU environments. van

Network Optix Demonstration of Extracting AI Model Data with AI Manager

Marcel Wouters, Senior Backend Engineer at Network Optix, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Wouters demonstrates how Nx AI Manager simplifies the extraction and use of data from AI models. Wouters showcases a live model detecting helmets and vests on a construction site and

Network Optix Overview of the Company’s Technologies, Products and Capabilities

Bradley Milligan, North America Sales Coordinator at Network Optix, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Milligan shares how Network Optix is enabling scalable, intelligent video solutions for organizations and industries around the world, including by using Nx EVOS and Nx AI Manager. Learn

How Does a Forward-facing Camera Work, and What Are Its Use Cases in ADAS?

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. Forward-facing cameras are the proverbial eyes of Advanced Driver Assistance Systems (ADAS). They collect real-time visual data from the vehicle’s surroundings and monitor the road, contributing to the system’s overall situational awareness. They capture key

“Computer Vision at Sea: Automated Fish Tracking for Sustainable Fishing,” a Presentation from Tryolabs and the Nature Conservancy

Alicia Schandy Wood, Machine Learning Engineer at Tryolabs, and Vienna Saccomanno, Senior Scientist at The Nature Conservancy, co-present the “Computer Vision at Sea: Automated Fish Tracking for Sustainable Fishing” tutorial at the May 2025 Embedded Vision Summit. What occurs between the moment a commercial fishing vessel departs from shore and its return? How sustainable is

“Beyond the Demo: Turning Computer Vision Prototypes into Scalable, Cost-effective Solutions,” a Presentation from Plainsight Technologies

Kit Merker, CEO of Plainsight Technologies, presents the “Beyond the Demo: Turning Computer Vision Prototypes into Scalable, Cost-effective Solutions” tutorial at the May 2025 Embedded Vision Summit. Many computer vision projects reach proof of concept but stall before production due to high costs, deployment challenges and infrastructure complexity. This presentation explores the path from prototype