Cameras and Sensors for Embedded Vision


While analog cameras are still used in many vision systems, this section focuses on digital image sensors—usually either a CCD or CMOS sensor array that operates with visible light. However, this definition shouldn’t constrain the technology analysis, since many vision systems can also sense other types of energy (IR, sonar, etc.).

The camera housing has become the entire chassis for a vision system, leading to the emergence of “smart cameras” with all of the electronics integrated. By most definitions, a smart camera supports computer vision, since the camera is capable of extracting application-specific information. However, as both wired and wireless networks get faster and cheaper, there may still be reasons to transmit pixel data to a central location for storage or extra processing.
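To put rough numbers on the choice between processing in the camera and transmitting pixel data, the sketch below estimates the uncompressed data rate of a raw sensor stream. The 10 MP resolution, 12-bit depth, 30 fps frame rate and 1 Gbit/s link are hypothetical values chosen for illustration, and the calculation ignores blanking intervals and protocol overhead.

```python
def raw_data_rate_bps(width_px: int, height_px: int, bits_per_pixel: int, fps: float) -> float:
    """Uncompressed pixel data rate in bits per second.

    Ignores blanking intervals, packet headers and other protocol overhead,
    so a real link needs some headroom beyond this figure.
    """
    return width_px * height_px * bits_per_pixel * fps


def min_compression_ratio(data_rate_bps: float, link_bps: float) -> float:
    """Smallest compression ratio that would fit the stream into the link."""
    return data_rate_bps / link_bps


# Hypothetical 10 MP sensor (4000 x 2500), 12-bit raw output, 30 frames per second.
rate = raw_data_rate_bps(4000, 2500, 12, 30)
# Hypothetical 1 Gbit/s network link.
ratio = min_compression_ratio(rate, 1e9)

print(f"raw stream: {rate / 1e9:.1f} Gbit/s")       # raw stream: 3.6 Gbit/s
print(f"needs at least {ratio:.1f}:1 compression")  # needs at least 3.6:1 compression
```

A smart camera that sends extracted results (object positions, counts, alerts) instead of every frame avoids most of this bandwidth, which is one reason in-camera processing stays attractive even as networks improve.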

A classic example is cloud computing using the camera on a smartphone. The smartphone could be considered a “smart camera” as well, but sending data to a cloud-based computer may reduce the processing performance required on the mobile device, lowering cost, power, weight, etc. For a dedicated smart camera, some vendors have created chips that integrate all of the required features.

Cameras

Until recently, many people would have imagined a camera for computer vision as the outdoor security camera shown in this picture. There are countless vendors supplying these products, and many more supplying indoor cameras for industrial applications. Don’t forget about simple USB cameras for PCs. And don’t overlook the billion or so cameras embedded in the mobile phones of the world. These cameras’ speed and quality have risen dramatically, with support for 10+ megapixel sensors and sophisticated image processing hardware.

Consider, too, another important factor for cameras: the rapid adoption of 3D imaging using stereo optics, time-of-flight and structured light technologies. Trendsetting cell phones now even offer this technology, as do latest-generation game consoles. Look again at the picture of the outdoor camera and consider how much change is about to happen to computer vision markets as new camera technologies become pervasive.
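Stereo and time-of-flight sensing recover depth from different physical quantities. The sketch below assumes a rectified stereo pair with the focal length expressed in pixels, and a direct (pulsed) time-of-flight measurement; many commercial ToF sensors instead infer the round-trip time indirectly from the phase shift of modulated light. Structured light is also triangulation-based, with a projector standing in for the second camera. The focal length, baseline, disparity and timing values are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def stereo_depth_m(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth from a rectified stereo pair by triangulation: Z = f * B / d.

    Depth is inversely proportional to disparity, so distant points
    (small disparities) are measured with less precision.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive; zero disparity means a point at infinity")
    return focal_length_px * baseline_m / disparity_px


def tof_distance_m(round_trip_s: float) -> float:
    """Distance from a direct time-of-flight measurement.

    The light pulse travels to the target and back, hence the division by 2.
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


# Hypothetical stereo rig: 700 px focal length, 6 cm baseline, 21 px disparity.
print(f"stereo depth: {stereo_depth_m(700.0, 0.06, 21.0):.2f} m")  # stereo depth: 2.00 m
# Hypothetical 10 ns round trip.
print(f"ToF distance: {tof_distance_m(10e-9):.2f} m")             # ToF distance: 1.50 m
```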

Sensors

Charge-coupled device (CCD) sensors have some advantages over CMOS image sensors, mainly because the electronic shutter of CCDs traditionally offers better image quality with higher dynamic range and resolution. However, CMOS sensors now account for more than 90% of the market, heavily influenced by camera phones and driven by the technology’s lower cost, better integration and speed.
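Dynamic range is commonly quoted in decibels as the ratio between the largest signal a pixel can hold (its full-well capacity) and its noise floor (read noise), both measured in electrons. The sketch below uses that common definition; the full-well and read-noise figures are hypothetical rather than taken from any specific CCD or CMOS part, and datasheets may define the noise floor differently.

```python
import math


def dynamic_range_db(full_well_e: float, read_noise_e: float) -> float:
    """Pixel dynamic range in dB: 20 * log10(full-well capacity / read noise)."""
    if full_well_e <= 0 or read_noise_e <= 0:
        raise ValueError("full-well capacity and read noise must be positive")
    return 20.0 * math.log10(full_well_e / read_noise_e)


def dynamic_range_stops(full_well_e: float, read_noise_e: float) -> float:
    """The same ratio expressed in photographic stops (powers of two)."""
    return math.log2(full_well_e / read_noise_e)


# Hypothetical pixel: 10,000 e- full well, 2 e- read noise.
print(f"{dynamic_range_db(10_000, 2):.1f} dB")        # 74.0 dB
print(f"{dynamic_range_stops(10_000, 2):.1f} stops")  # 12.3 stops
```

Under this definition, doubling the full-well capacity or halving the read noise adds about 6 dB, or one stop.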

Physical AI: From ST Sensors to a Robotics Platform, How Innovation Can Only Happen Through Collaboration

This blog post was originally published at STMicroelectronics’s website. It is reprinted here with the permission of STMicroelectronics. As technology aims to enable Physical AI, ST is sharing today how collaboration brought our sensors into a Holoscan Sensor Bridge module from Leopard Imaging, enabling developers to feed multi-modal sensing data to the NVIDIA Jetson Thor or

Lattice Collaborates with TI to Accelerate Edge AI for Robotics and Industrial Applications

HILLSBORO, Ore. – Apr. 20, 2026 – Lattice Semiconductor (NASDAQ: LSCC), the low power programmable leader, today announced that the company is collaborating with Texas Instruments (TI) to simplify sensor integration and to scale real-time edge AI systems. The combination of TI’s sensing technologies and the Lattice Holoscan Sensor Bridge solution, based on Lattice low power FPGA technology, will

Upcoming Webinar on Sony’s IMX925/935 Sensor Series and High Performance SLVS-EC Interface

On May 12, 2026, at 10:00 am CEST, RESTAR FRAMOS will deliver a webinar “Reaching High-Speed and High-Resolution Architecture with IMX925/935 and SLVS-EC.” From the event page: From sensor architecture to real-world integration: join the engineers behind the technology. High-speed and high-resolution machine vision systems are pushing the limits of data throughput, latency, and

Image Quality Labs Launches IQL/poLight/Sunex MLens EVK System for Embedded Vision and Machine Vision Development

DURHAM, N.C., April 16, 2026 — Image Quality Labs, Inc. today announced the launch of the IQL/poLight ASA/Sunex, Inc. MLens® EVK System, a new evaluation platform designed to help engineers assess electronically tunable focus for embedded vision, machine vision, robotics and AI imaging applications. The new development kit combines poLight’s MLens® tunable lens technology, Sunex

Neuromorphic Computing Enables Ultra-low Power Edge Devices

This blog post was originally published at Helbling’s website. It is reprinted here with the permission of Helbling. Over the last five years, neuromorphic computing has rapidly advanced through the development of state-of-the-art hardware and software technologies that mimic the information processing dynamics of animal brains. This development provides ultra-low power computation capabilities, especially for edge

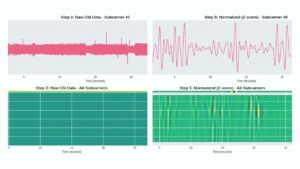

“Non-Contact Vital Sign Monitoring Using Low-Cost WiFi Devices,” a Presentation from the University of California, Santa Cruz

Katia Obraczka, Professor of Computer Science and Engineering, University of California, Santa Cruz, Pranay Kocheta, Lexington High School, and Nayan Bhatia, University of California, Santa Cruz present the “Non-Contact Vital Sign Monitoring Using Low-Cost WiFi Devices” tutorial at the December 2025 Edge AI and Vision Innovation Forum.

Texas Instruments, D3 Embedded, Lattice and NVIDIA Show a Practical Radar-Camera Fusion Stack for Robotics

TI’s new application brief and companion demo outline how mmWave radar, camera input, FPGA-based sensor bridging and NVIDIA Holoscan can be combined into a low-latency perception pipeline for humanoids and other autonomous machines. Texas Instruments, D3 Embedded, Lattice Semiconductor and NVIDIA are outlining a concrete radar-camera fusion stack for robotics rather than just talking

Upcoming Webinar on Akida Radar Reference Platform

On April 20, 2026, at 8:00 am PDT (11:00 am EDT), BrainChip will deliver a webinar “Akida Radar Reference Platform: See the Evolution of Radar Intelligence with AI-Powered Object Classification.” From the event page: Join us on 20 April at 8:00 AM PT for an exclusive deep dive into BrainChip’s Radar Reference Platform, bringing

BrainChip Unveils Radar Reference Platform to Bridge the ‘Identification Gap’ in Edge AI

LAGUNA HILLS, Calif. — April 6, 2026 — BrainChip Holdings Ltd (ASX: BRN, OTCQX: BRCHF, BCHPY), the world’s first commercial producer of ultra-low-power, neuromorphic AI technology, today announced the launch of its Radar Reference Platform. This fully validated hardware and AI stack is designed to provide real-time object classification at the edge, solving the critical “identification gap” that limits traditional radar

e-con Systems Launches STURDeCAM57: A 5MP Global Shutter RGB-IR Camera for In-cabin Monitoring Systems

California & Chennai (March 31, 2026): e-con Systems®, a global leader in embedded vision solutions, launches STURDeCAM57, a 5MP global shutter RGB-IR GMSL2 camera designed to deliver reliable, context-rich vision from day to night for applications such as Driver Monitoring Systems (DMS) and Occupant Monitoring Systems (OMS). This new RGB-IR camera is based on the