
Dear Colleague,

The Embedded Vision Summit is the preeminent conference on practical computer vision, covering applications from the edge to the cloud. It attracts a global audience of over one thousand product creators, entrepreneurs and business decision-makers who are creating and using computer vision technology. The Embedded Vision Summit has experienced exciting growth over the last few years, with 98% of 2017 Summit attendees reporting that they’d recommend the event to a colleague. The next Summit will take place May 22-24, 2018 in Santa Clara, California. The deadline to submit presentation proposals is November 10, 2017. For detailed proposal requirements and to submit proposals, please visit https://www.embedded-vision.com/summit/2018/call-proposals. For questions or more information, please email [email protected].

On October 24, 2017 at 11 am ET (8 am PT), Embedded Vision Alliance Founder Jeff Bier will conduct a free one-hour Q&A webcast, "Embedded and Mobile Vision Systems – Developments and Benefits," in partnership with Vision Systems Design. Bier will answer your questions on enabling technologies such as embedded computers, compact vision processors, camera modules and software development tools, as well as on applications such as autonomous vehicles, drones, robots and toys. For more information and to register, see the event page.

Brian Dipert

Editor-In-Chief, Embedded Vision Alliance


1,000X in Three Years: How Embedded Vision is Transitioning from Exotic to Everyday

Just a few years ago, it was inconceivable that everyday devices would incorporate visual intelligence. Now it’s clear that visual intelligence will be ubiquitous soon. How soon? Faster than you might think, thanks to three key accelerating factors. In the next few years, we'll see roughly a 10x improvement in cost-performance and energy efficiency at each of three layers: algorithms, software techniques, and processor architecture. Combined, this means that we can expect roughly a 1,000x improvement. So, tasks that today require hundreds of watts of power and hundreds of dollars' worth of silicon will soon require less than a watt of power and less than a dollar's worth of silicon. This will be world-changing, enabling even very cost-sensitive devices, like toys, to incorporate sophisticated visual perception. In this talk, Jeff Bier, Founder of the Embedded Vision Alliance and Co-founder and President of BDTI, explains how innovators across the industry are delivering this 1,000X improvement very rapidly. He also highlights end-products that are showing us what’s possible in this new era, and important challenges that remain.
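The arithmetic behind the 1,000X figure can be checked in a few lines. This is only an illustrative sketch: the 10x factors come from the talk summary above, while the 300 W and $300 starting points are assumed stand-ins for "hundreds of watts" and "hundreds of dollars", not figures from the talk.

```python
# Gains at each layer, as stated in the talk summary (roughly 10x each).
layer_gains = {
    "algorithms": 10,
    "software techniques": 10,
    "processor architecture": 10,
}

# Improvements at separate layers compound multiplicatively.
combined_gain = 1
for gain in layer_gains.values():
    combined_gain *= gain

# Assumed starting points, chosen only to represent "hundreds".
power_watts_today = 300.0
silicon_cost_today = 300.0

power_watts_future = power_watts_today / combined_gain
silicon_cost_future = silicon_cost_today / combined_gain

print(combined_gain, power_watts_future, silicon_cost_future)
```

With these assumed inputs, the combined gain is 1,000 and both the power and cost figures fall to 0.3, which is consistent with "less than a watt" and "less than a dollar".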

How to Start an Embedded Vision Company

This talk from Chris Rowen, CEO of Cognite Ventures, outlines the essential opportunity and daunting challenges of building significant new companies based on deep learning technology, specifically in vision systems. It draws on Rowen's long experience as a successful founder, CEO, CTO and investor in Silicon Valley, and lays out some of the key factors in selecting a target market, leadership team, technology foundation, and product strategy. It highlights the particular leverage, as well as some of the pitfalls, of the deep learning revolution as a trigger for fundable vision breakthroughs. The talk helps company founders think about how their priorities and skills are best leveraged in a start-up environment. It drills down into the particular issues of embedded deep learning and vision, focusing particularly on the essential role for access to relevant training data, the range of workable business models spanning device and cloud, and the stages of deep learning competence.


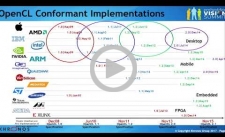

The Vision Acceleration API Landscape: Options and Trade-offs

The landscape of APIs for accelerating vision and neural network software using specialized processors continues to rapidly evolve. Many industry-standard APIs, such as OpenCL and OpenVX, are being upgraded to increasingly focus on deep learning, and the industry is rapidly adopting the new generation of low-level, explicit GPU APIs, such as Vulkan, that tightly integrate graphics and compute. Some of these APIs, like OpenVX and OpenCV, are vision-specific, while others, like OpenCL and Vulkan, are general-purpose. Some, like CUDA and TensorRT, are vendor-specific, while others are open standards that any supplier can adopt. Which ones should you use for your project? In this presentation, Neil Trevett, President of the Khronos Group and Vice President at NVIDIA, sorts out the options and helps you make an optimum selection for your particular design situation.

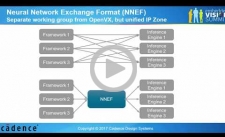

The OpenVX Computer Vision Library Standard for Portable, Efficient Code

OpenVX is an industry-standard computer vision API designed for efficient implementation on a variety of embedded platforms. The API incorporates the concept of a dataflow graph, which enables implementers to apply a range of optimizations appropriate to their architectures, such as image tiling and kernel fusion. Application developers can use this API to create high-performance computer vision applications quickly, without having to perform complex device-specific optimizations for data management and kernel execution, since these optimizations are handled by the OpenVX implementation provided by the processor vendor. This talk from Frank Brill, Design Engineering Director at Cadence and Chairperson of the Khronos Group's OpenVX Working Group, describes the current status of OpenVX, with particular focus on the most recent enhancements to it, including a neural network extension. The talk concludes with a summary of the currently available implementations and an overview of the roadmap for the OpenVX API and its implementations.
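To make the dataflow-graph idea more concrete, here is a toy sketch in Python. It is not the OpenVX API (which is a C API), and every name in it (`Graph`, `add_node`, `verify`, `run_unfused`, `run_fused`) is hypothetical. It only illustrates why declaring the whole pipeline up front helps: once all the nodes are known, an implementation is free to fuse per-pixel kernels into a single pass instead of writing an intermediate image after each step.

```python
from typing import Callable, List

PixelOp = Callable[[int], int]
Image = List[List[int]]


class Graph:
    """Toy deferred-execution graph of per-pixel operations (hypothetical)."""

    def __init__(self) -> None:
        self.nodes: List[PixelOp] = []

    def add_node(self, op: PixelOp) -> "Graph":
        # Only record the operation; nothing is executed yet.
        self.nodes.append(op)
        return self

    def verify(self) -> PixelOp:
        # With the full graph known, fuse all per-pixel ops into one function.
        ops = tuple(self.nodes)

        def fused(pixel: int) -> int:
            for op in ops:
                pixel = op(pixel)
            return pixel

        return fused


def run_unfused(graph: Graph, image: Image) -> Image:
    # Naive execution: one full pass and one intermediate image per node.
    for op in graph.nodes:
        image = [[op(p) for p in row] for row in image]
    return image


def run_fused(graph: Graph, image: Image) -> Image:
    # Fused execution: a single pass over the image, no intermediates.
    fused = graph.verify()
    return [[fused(p) for p in row] for row in image]


graph = Graph()
graph.add_node(lambda p: min(p + 40, 255))       # brighten, clamped to 8 bits
graph.add_node(lambda p: 255 - p)                # invert
graph.add_node(lambda p: 255 if p >= 128 else 0) # threshold

image = [[0, 100, 200], [50, 150, 250]]
result = run_fused(graph, image)
print(result)
```

Both execution strategies produce the same output image; the fused version simply touches each pixel once. A real implementation makes this kind of decision internally, which is why the application code does not need device-specific tuning.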


Vision Systems Design Webinar – Embedded and Mobile Vision Systems – Developments and Benefits: October 24, 2017, 8:00 am PT

Consumer Electronics Show: January 9-12, 2018, Las Vegas, Nevada

Embedded Vision Summit: May 22-24, 2018, Santa Clara, California

