This blog post was originally published at Cadence's website. It is reprinted here with the permission of Cadence.

When I was at embedded world in Nuremberg recently, I ran into Jeff Bier, the head of the Embedded Vision Alliance, and the organizer of the Embedded Vision Conference. He gave me a copy of a survey that they had run on tools and processors for computer vision.

The focus of the survey is people actually doing designs, as opposed to companies like Cadence that supply technology but don't design end products. As it says in the executive summary:

This white paper provides selected results from our most recent survey, conducted in November 2017. We received responses from 706 computer vision developers across a wide range of industries, organizations, geographical locations, and job types. We have focused our analysis on the 323 respondents whose organizations are developing end products for consumers, businesses, or governments (vs. organizations that are providing services, or providing components, subsystems, or software for incorporation into new products).

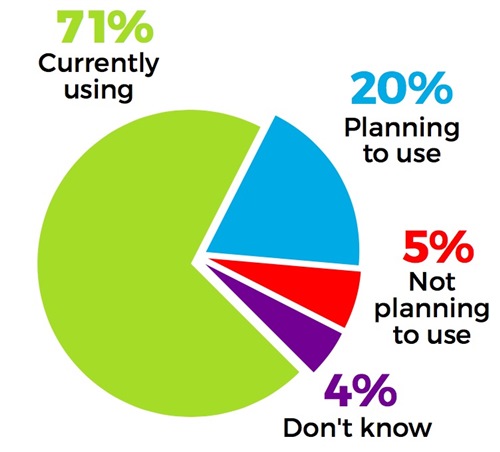

Using or Planning to Use Vision

The first question is whether you are using or planning to use computer vision in products. Unless you have been in some sort of suspended animation for the last couple of years, you can't have avoided noticing the increase in the importance of computer vision. ADAS and autonomous driving, of course. But lots of other applications. As Chris Rowen regularly points out, to a first approximation, all sensor data is vision (at least if measured by the bandwidth used).

This shows up in the numbers. Over 90% of respondents are using or planning to use computer vision in their products, and half of the rest are undecided. This might just reflect the population surveyed, of course, who are computer vision developers as opposed to, say, modem developers.

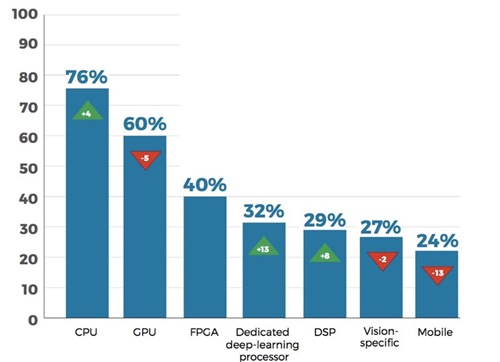

Types of Processors

Cadence has a broad portfolio of vision processors, so the question about what types of processors are being used is especially interesting. Since the various processors in the Tensilica portfolio might be described (by users who are not being precise) as any of a deep-learning processor, a DSP, or a vision-specific processor, I'm not sure there is any deep insight. Users ranked the top 3 (so the totals are almost 300%).

The trends are not dramatic, but GPU use is down a little from last year, use of dedicated deep-learning processors (which means neural network processors) is up 13%, and DSP use is up 8%. I don't want to draw too many conclusions from a small number of datapoints, but it looks as though general-purpose solutions like GPUs are losing ground to special-purpose processors that are more optimized for the specific task.

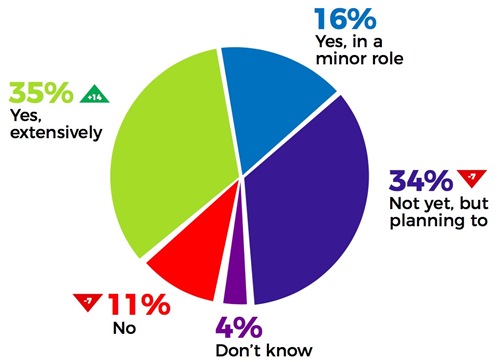

Neural Networks

Another interesting question was about the use of neural networks. The percentage of people planning to use neural networks and the percentage not planning to use them are both down a little, presumably because more people have now started to use them. The percentage using them extensively is up quite a bit. The result is that nearly 90% of people are already using them or planning to do so.

See the Whole Survey

The survey is officially called the Fall 2017 Computer Vision Developer Survey from the Embedded Vision Alliance and you can see the summary from that link. If you are a member of the Embedded Vision Alliance, then you can have the full survey results by emailing.

The Embedded Vision Summit

If you are interested in embedded vision, then the must-attend event is the annual Embedded Vision Summit. This will take place Tuesday and Wednesday, May 22 – 23, at the Santa Clara Convention Center. There is also a full-day training course on TensorFlow available the Monday before, and various workshops on the Thursday following. There are two keynotes at the summit from:

- Dean Kamen, DEKA Research & Development, but best known as the inventor of the Segway (and so, indirectly, all those hoverboards): From Mobility to Medicine: Vision Enables the Next Generation of Innovation

- Takeo Kanade, Carnegie Mellon University (CMU): Think Like an Amateur, Do As an Expert: Lessons from a Career in Computer Vision

Registration is already open.

Paul McLellan

Editor of Breakfast Bytes, Cadence