| LETTER FROM THE EDITOR |

|

Dear Colleague,

Deep Learning for Computer Vision with TensorFlow 2.0 is the Embedded Vision Alliance's in-person, hands-on technical training class. The next session will take place November 1 in Fremont, California, hosted by Alliance Member company Mentor. This one-day overview will give you the critical knowledge you need to develop deep learning computer vision applications with TensorFlow. Details, including online registration, can be found here.

Renesas Electronics America will deliver the free webinar "Renesas' Dynamically Reconfigurable Processor (DRP) Technology Enables a Hybrid Approach for Embedded Vision Solutions" on November 13 at 10 am PT, in partnership with the Embedded Vision Alliance. DRP technology, built into select Arm® Cortex-based RZ Family MPUs, accelerates image processing algorithms with runtime-reconfigurable hardware that delivers the acceleration benefits of dedicated circuitry while avoiding the cost and power penalties associated with embedded FPGA-based approaches. In this webinar, Renesas will explain the DRP architecture and its operation, present benchmarks and design examples demonstrating more than 10x the performance of traditional CPU-only solutions, and introduce resources for developing DRP-based embedded vision systems with the RZ/A2M MPU. For more information and to register, please see the event page.

The Alliance is looking for an experienced, talented, and energetic Marketing Manager to drive its marketing program, both for the Alliance as a whole and for the various events that it runs. The ideal candidate has 5-10 years of experience devising and implementing marketing programs, either for high-tech companies or for events and conferences targeted at engineers, product developers and executives working at technology companies. Both full-time and part-time roles are available; for more information and to submit an application, please see the job posting page on the Alliance website for the role you're interested in.

Brian Dipert

Editor-In-Chief, Embedded Vision Alliance

|

| VISUAL AI INNOVATIONS, TRENDS AND OPPORTUNITIES |

|

Making the Invisible Visible: Within Our Bodies, the World Around Us and Beyond

The invention of X-ray imaging enabled us to see inside our bodies. The invention of thermal infrared imaging enabled us to depict heat. So, over the last few centuries, the key to making the invisible visible was recording with new slices of the electromagnetic spectrum. But the impossible photos of tomorrow won’t be recorded; they’ll be computed. Ramesh Raskar, Associate Professor in the MIT Media Lab, and his group have pioneered the field of femto-photography, which uses a high-speed camera to visualize the world at nearly a trillion frames per second, making it possible to create slow-motion movies of light in flight. These techniques enable the seemingly impossible: seeing around corners, seeing through fog as if it were a sunny day and detecting circulating tumor cells with a device resembling a blood-pressure cuff. Raskar and his colleagues in the Camera Culture Group have advanced fundamental techniques and have pioneered new imaging and computer vision applications. Their work centers on the co-design of novel imaging hardware and machine learning algorithms, including techniques for the automated design of deep neural networks. Many of Raskar’s projects address healthcare, such as EyeNetra, a start-up that extends the capabilities of smartphones to enable low-cost eye exams. In his Embedded Vision Summit keynote presentation, Raskar shares highlights of his group’s work and his unique perspective on the future of imaging, machine learning and computer vision.

The Reality of Spatial Computing: What’s Working in 2019 (And Where It Goes From Here)

This presentation from Tim Merel, Managing Director at Digi-Capital, gives you hard data and lessons learned on what is and isn’t working in augmented reality (AR) and virtual reality (VR) today, as well as a clear view of where the market is headed over the next five years. With computer vision underpinning the entire market, Merel explores the biggest opportunities for computer vision companies in AR and VR going forward.

|

| RESOURCE-EFFICIENT DEEP LEARNING |

|

Using TensorFlow Lite to Deploy Deep Learning on Cortex-M Microcontrollers

Is it possible to deploy deep learning models on low-cost, low-power microcontrollers? While it may be surprising, the answer is a definite “yes”! In this talk, Pete Warden, Staff Research Engineer and TensorFlow Lite development lead at Google, explains how the new TensorFlow Lite framework makes it possible to create very lightweight DNN implementations suitable for execution on microcontrollers. He illustrates how this works using an example of a 20-Kbyte DNN model that performs speech wake-word detection, and discusses how this generalizes to image-based use cases. Warden introduces TensorFlow Lite and explores the key steps in implementing lightweight DNNs, including model design, data gathering, hardware platform choice, software implementation and optimization.

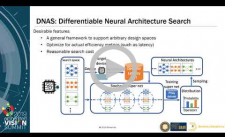

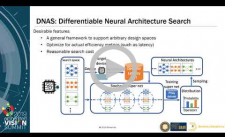

Enabling Automated Design of Computationally Efficient Deep Neural Networks

Efficient deep neural networks are increasingly important in the age of AIoT (AI + IoT), in which people hope to deploy intelligent sensors and systems at scale. However, optimizing neural networks to achieve both high accuracy and efficient resource use on different target devices is difficult, since each device has its own idiosyncrasies. In this talk, Bichen Wu, Graduate Student Researcher in the EECS Department at the University of California, Berkeley, introduces differentiable neural architecture search (DNAS), an approach for hardware-aware neural network architecture search. He shows that, using DNAS, the computation cost of the search itself is two orders of magnitude lower than that of previous approaches, while the models found by DNAS are optimized for target devices and surpass the previous state of the art in efficiency and accuracy. Wu also explains how he used DNAS to find a new family of efficient neural networks called FBNets.
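
For readers curious how an architecture search can be made differentiable and hardware-aware at all, here is a minimal Python sketch of the general idea. It is an illustration under stated assumptions, not a description of Wu's implementation: the softmax relaxation of the per-layer operator choice, the function names and the latency figures are all hypothetical. Each layer holds a learnable score for every candidate operator. Because the scores are turned into smooth weights, the expected latency of the whole network becomes a smooth function of them, so a gradient-based optimizer could in principle trade it off against accuracy.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of floats."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def expected_latency(arch_logits, op_latencies_ms, temperature=1.0):
    """Expected latency of a network whose layers each mix candidate operators.

    arch_logits[i][j]     : learnable preference for operator j in layer i
    op_latencies_ms[i][j] : latency of operator j in layer i on the target
                            device (hypothetical values in this sketch)
    """
    total = 0.0
    for logits, latencies in zip(arch_logits, op_latencies_ms):
        weights = softmax(logits, temperature)
        total += sum(w * lat for w, lat in zip(weights, latencies))
    return total


def pick_architecture(arch_logits):
    """After the search, keep the highest-scoring operator in every layer."""
    return [max(range(len(logits)), key=logits.__getitem__)
            for logits in arch_logits]


# Two layers with three hypothetical candidate operators each.
latencies = [[1.0, 2.0, 4.0], [0.5, 1.5, 3.0]]
logits = [[0.0, 0.0, 0.0], [2.0, 0.0, -1.0]]

print(expected_latency(logits, latencies))
print(pick_architecture(logits))
```

In a real search, the scores would be updated by gradient descent on a loss that combines task accuracy with a latency term like this one, using latencies measured on the actual target device.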

|

| UPCOMING INDUSTRY EVENTS |

|

Embedded Vision Alliance Webinar – Key Trends in the Deployment of Visual AI: October 16, 2019, 9:00 am PT and 6:00 pm PT

Technical Training Class – Deep Learning for Computer Vision with TensorFlow 2.0: November 1, 2019, Fremont, California

Renesas Webinar – Renesas' Dynamically Reconfigurable Processor (DRP) Technology Enables a Hybrid Approach for Embedded Vision Solutions: November 13, 2019, 10:00 am PT

Embedded AI Summit: December 6-8, 2019, Shenzhen, China

|

| VISION PRODUCT OF THE YEAR SHOWCASE |

|

Infineon Technologies IRS2381C 3D Image Sensor (Best Camera or Sensor)

Infineon Technologies' IRS2381C 3D image sensor is the 2019 Vision Product of the Year Award Winner in the Cameras and Sensors category. The IRS2381C is a novel member of the REAL3 product family, Infineon’s portfolio of highly integrated 3D time-of-flight (ToF) image sensors. Building upon the combined expertise of Infineon and pmdtechnologies in algorithms for processed 3D point clouds, the innovative sensor delivers a new level of front camera capabilities in smartphones. Applications include secure user authentication, such as face or hand recognition for device unlock and payment, as well as augmented reality, morphing, photography improvement, room scanning and many others.

Please see here for more information on Infineon Technologies and its IRS2381C 3D image sensor product. The Vision Product of the Year Awards are open to Member companies of the Embedded Vision Alliance and celebrate the innovation of the industry's leading companies that are developing and enabling the next generation of computer vision products. Winning a Vision Product of the Year award recognizes leadership in computer vision as evaluated by independent industry experts.

|

| FEATURED NEWS |

|

Upcoming Synopsys Conference Details the Latest Technologies in Trendsetting Applications and Markets

OmniVision Announces Image Sensor With Industry’s Smallest BSI Global Shutter Pixel

Intel Launches First 10th Gen Intel Core Processors: Redefining the Next Era of Laptop Experiences

Now Available – Design-in Samples of the Basler boost Camera and Basler CXP-12 Interface Card

Khronos Releases OpenXR 1.0 Specification Establishing a Foundation for the AR and VR Ecosystem

|