This blog post was originally published at Qualcomm’s website. It is reprinted here with the permission of Qualcomm.

Qualcomm AI Research demonstrates 1280×704 HD video being decoded in real time at 30+ frames per second on Snapdragon 888

It used to be that most people wondered about the value of artificial intelligence (AI) and what it could do. Fast forward to today, and the question has become: what can’t AI do? At Qualcomm, we’ve long envisioned that AI will become ubiquitous, enabling devices to perceive, reason, and take intelligent actions based on awareness of the situation. It will improve just about any experience, augment or replace conventional algorithms, and even solve problems considered unsolvable. I’m very pleased to share that Qualcomm AI Research is showcasing our latest advancement, and what is possible with AI technology, by demonstrating the world’s first HD neural video decoder running in real time on a commercial smartphone. Let me explain the significance of this achievement.

The scale of video being created and consumed is massive

Video technology has revolutionized how we create and consume media. Advancements in video compression, which provide enhanced video quality with fewer bits, have led to broad video adoption across a wide range of devices and services. In fact, it’s expected that 82% of Internet traffic will be video by 2022¹. With this explosive growth of video traffic, video coding technology enhancements are crucial for providing entertainment, enhancing collaboration, and transforming industries in the coming years.

AI is enabling future generation video codecs

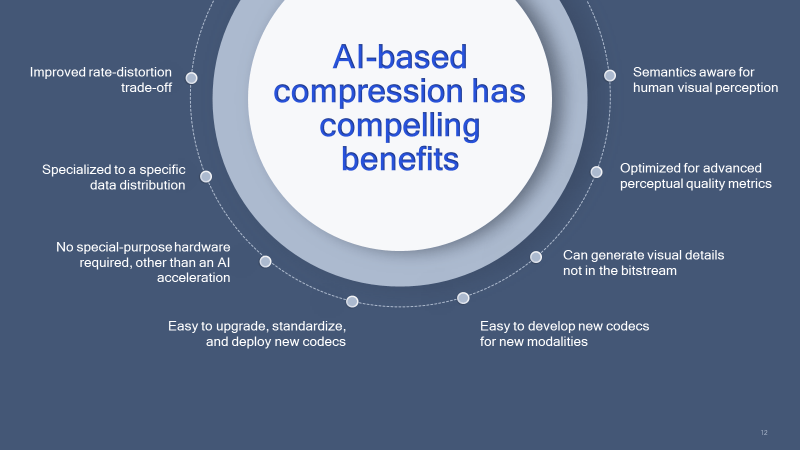

To meet the rising demand of video consumption, we envision that future video codecs will have the following features:

- Direct optimization of bitrate and perceptual quality metrics

- Simplified codec development

- Intrinsic massive parallelism

- Efficient execution and ability to update on deployed hardware

- Downloadable codec updates

Neural network video codecs have the potential to provide all these desired features. Specifically, they can run on AI hardware accelerators developed for other AI applications and can also enable much more efficient parallelization of entropy coding. Driven by this potential, there has been active research on neural video codecs over the past few years, showing impressive compression performance and closing the gap to conventional codecs.

Making neural video codecs possible on mobile devices

Taking AI research from the lab to real-life scenarios is often not easy, and in this case practical deployment of neural video codecs is challenging. Most existing AI research studies use wall-powered high-end GPUs with floating-point computation, and the neural network models are often not optimized for fast inference. Running real-time inference on these types of neural decoder models is not practical or feasible on mobile devices with fixed compute, power, and thermal constraints.

With our expertise in power-efficient AI, our goal was to achieve real-time intra-frame neural video coding on a commercial smartphone. We made several optimizations, such as redesigning the network architecture for reduced complexity, quantizing the network for optimal performance on the AI acceleration processor, exploiting parallel entropy coding, and utilizing Qualcomm’s technology innovations. For quantization, we used Qualcomm Innovation Center’s open-source AI Model Efficiency Toolkit (AIMET). The result is the world’s first demo that showcases real-time HD neural video decoding on a mobile device.
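To make the quantization step more concrete, here is a minimal sketch of simulated 8-bit uniform quantization, the general idea behind running a network in fixed-point rather than floating-point arithmetic. It is an illustration only: it does not use AIMET or reproduce the actual pipeline from the demo, and the function name `quantize_dequantize` and its parameters are hypothetical.

```python
def quantize_dequantize(values, num_bits=8):
    """Simulate uniform affine quantization on a list of floats.

    Illustrative sketch only; this is NOT AIMET's API.
    Returns the dequantized values and the quantization step (scale).
    """
    # Extend the range to include zero so that 0.0 is exactly representable.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    levels = 2 ** num_bits - 1  # 255 integer steps for 8 bits

    # Step size between adjacent quantization levels.
    scale = (hi - lo) / levels if hi > lo else 1.0
    # Integer level that represents the real value 0.0.
    zero_point = round(-lo / scale)

    dequantized = []
    for v in values:
        q = round(v / scale) + zero_point   # map float to integer grid
        q = max(0, min(levels, q))          # clamp to the valid integer range
        dequantized.append((q - zero_point) * scale)  # map back to float
    return dequantized, scale


weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
approx, step = quantize_dequantize(weights)
for original, restored in zip(weights, approx):
    print(f"{original:+.4f} -> {restored:+.4f}")
```

Each value is snapped to one of 256 levels, so the rounding error stays on the order of one quantization step. Integer arithmetic of this kind is typically what dedicated AI accelerators execute most efficiently, which is why quantization matters for on-device inference.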

In our demo, a 1280×704 video, which is very close to HD 720p, is decoded at 30+ frames per second on a commercial smartphone powered by the Qualcomm Snapdragon 888 processor. Specifically, the parallel entropy decoding runs on the CPU and the decoder network is accelerated on the 6th generation Qualcomm AI Engine. Take a close look at the embedded video to see that the rich visual structures in challenging nature scenes are accurately preserved by the neural decoder, resulting in excellent scene reproduction.
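For a sense of scale, the figures quoted above translate into a per-frame time budget and a pixel throughput. The short calculation below uses only the resolution and frame rate stated in the post; the helper name `frame_stats` is hypothetical, and 30 fps is taken as the lower bound of "30+".

```python
def frame_stats(width, height, fps):
    """Derive simple throughput figures from a resolution and frame rate."""
    pixels_per_frame = width * height
    return {
        "pixels_per_frame": pixels_per_frame,
        "pixels_per_second": pixels_per_frame * fps,
        # Time available to decode one frame, entropy decoding included.
        "frame_budget_ms": 1000 / fps,
        # Fraction of a full 1280x720 (720p) frame that is covered.
        "fraction_of_720p": pixels_per_frame / (1280 * 720),
    }


stats = frame_stats(1280, 704, 30)
print(f"{stats['pixels_per_frame']:,} pixels per frame")
print(f"{stats['pixels_per_second']:,} pixels per second")
print(f"{stats['frame_budget_ms']:.1f} ms per frame")
print(f"{stats['fraction_of_720p']:.1%} of a 720p frame")
```

In other words, the entropy decoder and the decoder network together have roughly 33 ms to reconstruct about 0.9 million pixels, frame after frame.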

We’re very excited about this paradigm-shifting achievement and how it will affect future video codecs. Stay tuned for future work extending this to include inter-frame decoding running in real time on a mobile device.

¹ Cisco Annual Internet Report, 2018–2023

Dr. Jilei Hou

Vice President, Engineering, Qualcomm Technologies