This blog post was originally published at Edge Impulse’s website. It is reprinted here with the permission of Edge Impulse.

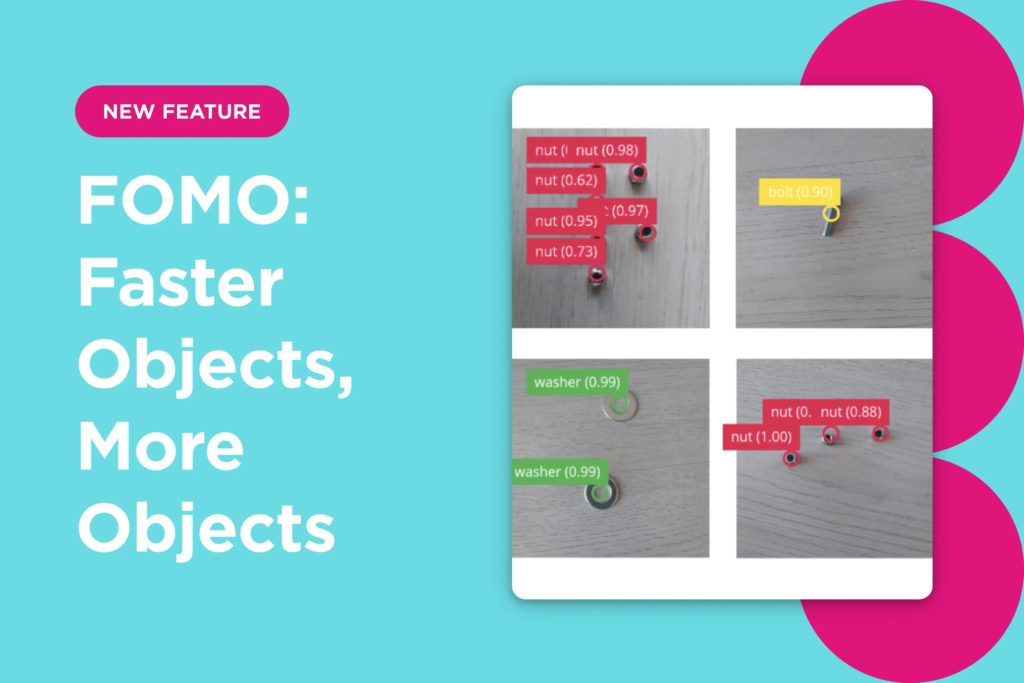

Today, we are super excited to announce the official release of a brand new approach to run object detection models on constrained devices: Faster Objects, More Objects (FOMO).

FOMO is a ground-breaking algorithm that brings real-time object detection, tracking and counting to microcontrollers for the first time. FOMO is 30x faster than MobileNet SSD and runs in <200K of RAM.

To give you an idea, we have seen results around 30 fps on the Arduino Nicla Vision (Cortex-M7 MCU) using 245K RAM.

Image processing approaches

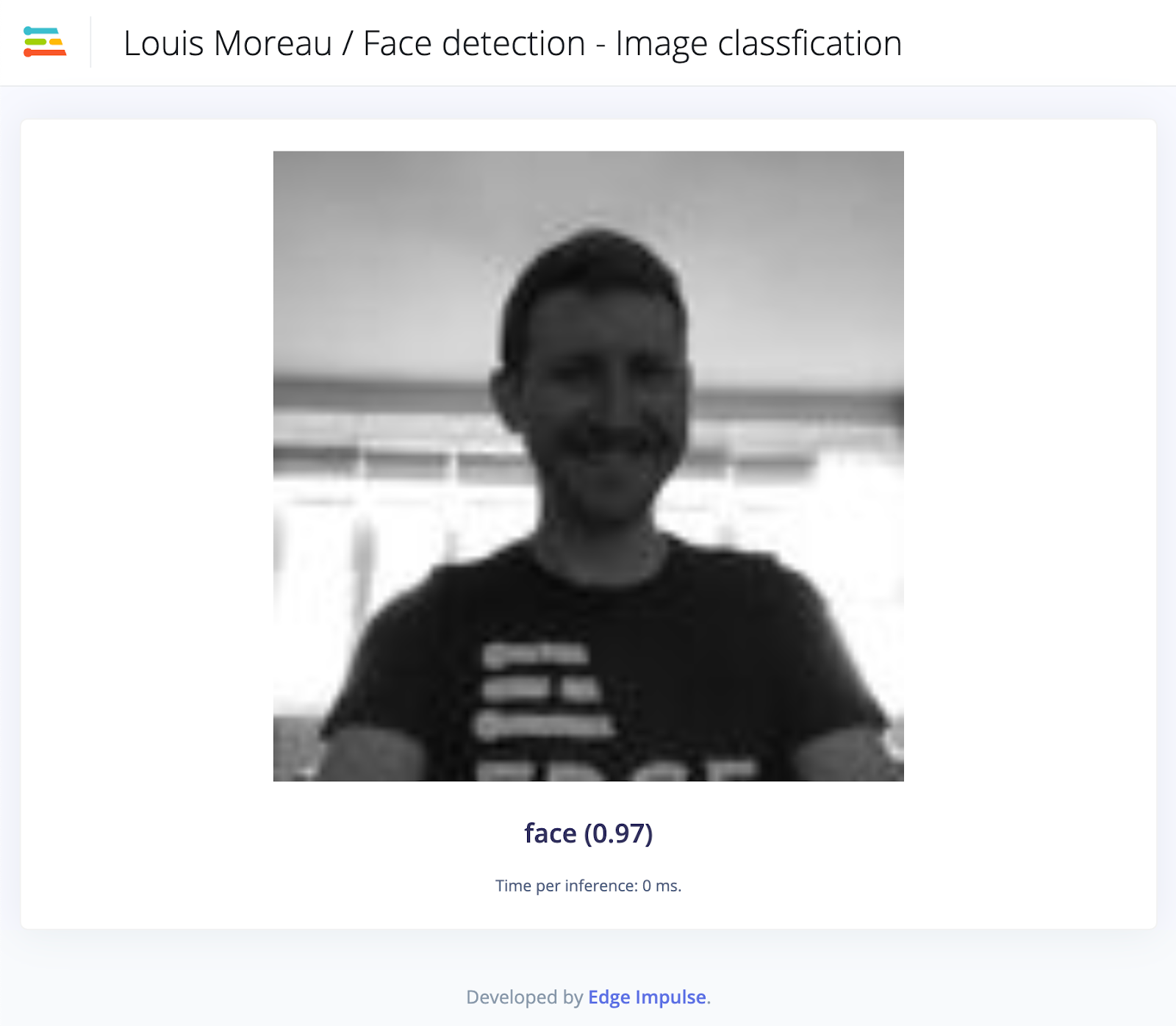

The two most common image processing problems are image classification and object detection.

Image classification takes an image as an input and outputs what type of object is in the image. This technique works great, even on microcontrollers, as long as we only need to detect a single object in the image.

The question the model is trying to answer is: “Is there a face or not in the image?”

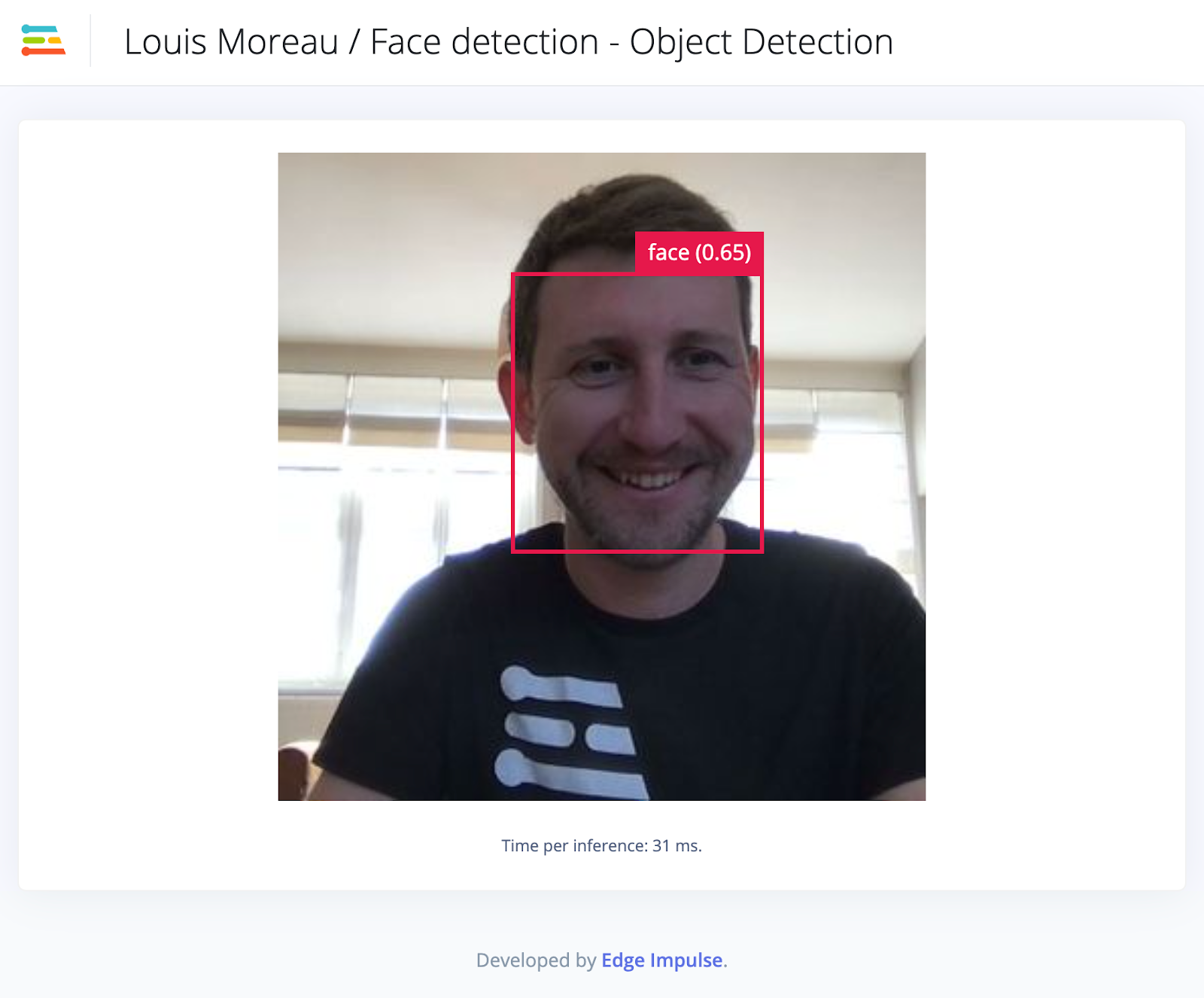

Object detection takes an image and outputs information about the class and number of objects, position, and size in the image.

The question the model is trying to answer is: “Are there faces in the image, where and what size are they?”

Since object detection models are making a more complex decision than object classification models they are often larger (in parameters) and require more data to train. This is why we hardly see any of these models running on microcontrollers.

The FOMO model provides a variant in between; a simplified version of object detection that is suitable for many use cases where the position of the objects in the image is needed but when a large or complex model cannot be used due to resource constraints on the device.

The question the model is trying to answer is: “Are there faces in the image, where are they?”

Design choices

Trained on centroid

The main design decisions for FOMO are based on the idea that a lot of object detection problems don’t actually need the size of the objects but rather the number of objects and their position in the frame.

Based on this observation, classical bounding boxes are no longer needed. Instead, a visualization based on centroids corresponds much better to the need.

Note that, to keep the interoperability with other models, your training image input still uses bounding boxes.

Designed to be flexible

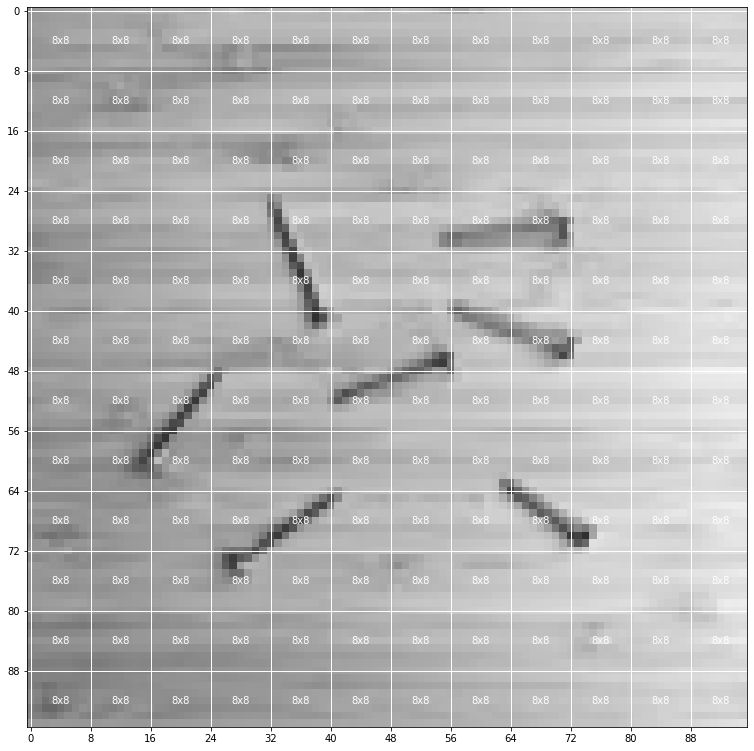

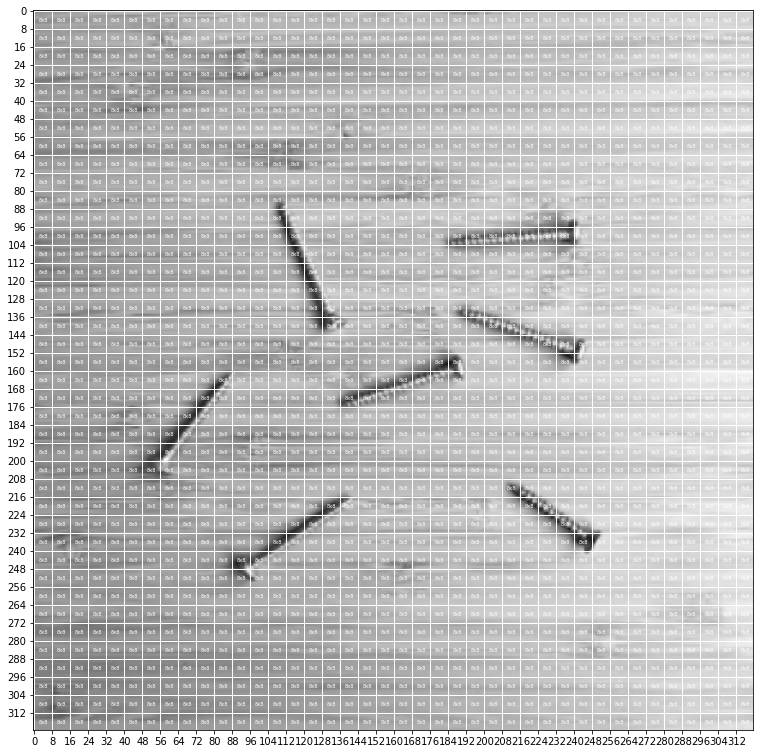

Because of the underneath technique used by FOMO, when we run the model on an input image, we split this image into grids (or heat maps) and we then run an image classification-like technique to classify the grids independently. By default the grid size is 8×8 pixels, which means for a 96×96 image, the heat map will be 12×12. For a 320×320 image, the heat map will be 40×40.

96×96 image split into a 12×12 heat map

320×320 image split into a 40×40 heat map

The resolution can be adjusted depending on the use case. You can even train on smaller patches, and then scale up during inference.

Another beauty of FOMO is its fully convolutional nature, which means that just the ratio is set and you can use it as an add-on to any convolutional image network (including transfer learning models).

Finally, FOMO also performs much better on small objects than YOLOv5 or MobileNet SSD.

Designed to be small and amazingly fast

One of the first goals when we started to design FOMO was to run object detection on microcontrollers where flash and RAM are most of the time very limited. The smallest version of FOMO (96×96 grayscale input, MobileNetV2 0.05 alpha) runs in <100KB RAM and ~10 fps on a Cortex-M4F at 80MHz.

Limitations

Works better if the objects have a similar size

Because we do not use bounding boxes, the size of the object is not available with FOMO.

When FOMO models are trained, it makes the assumption that the objects share an arbitrary size.

Objects shouldn’t be too close to each other.

If your classes are “screw,” “nail,” “bolt,” and “background” each cell (or grid) will be either “screw,” “nail,” “bolt,” or “background.” It’s thus not possible to detect objects with overlapping centroids. It is possible, though, to increase the resolution of the image (or to decrease the heat map factor) to reduce this limitation.

In short, if we make the following two constraining assumptions:

- All bounding boxes are square and have a fixed size

- The objects occupy a grid over the input

Then we can vastly reduce the complexity, and hence the size and speed of our model. In that case, FOMO will work in its optimal condition.

Going further

To start using FOMO: https://studio.edgeimpulse.com

FOMO documentation page: https://docs.edgeimpulse.com/docs/fomo-object-detection-for-constrained-devices

Happy discovery!

Louis Moreau

Senior Developer Relations Engineer, Edge Impulse

Mat Kelcey

Principal Machine Learning Engineer, Edge Impulse