This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems.

CCD and CMOS sensors each come with their own benefits. The main difference between them lies in how they recreate images from electric signals. See why CMOS is already ahead of CCD, and what the future holds for both types of sensors in embedded vision.

The invention of image sensors dates back to the 1960s. Sensor technology has come a long way since then, from the design of the MOS (Metal Oxide Semiconductor) sensor architecture in the early 1960s and the invention of the CCD in 1969 to the latest SPAD (Single Photon Avalanche Diode) sensors from Canon. Despite these developments, CCD and CMOS sensors have remained two of the most popular sensor technologies in the imaging space for decades.

Although both types of sensors have their own advantages, CMOS sensors have become more popular in recent years, especially in the embedded vision space. While most discussions comparing the two technologies have revolved around mobile phone cameras and machine vision systems, little has been said about the topic in the context of embedded vision.

In this article, we briefly look at some of the key differences between CMOS and CCD sensors, why CMOS is catching up with (or has already overtaken) CCD in embedded vision, and what the future holds for both in the imaging world.

What are CCD and CMOS sensors? What are the key differences between the two?

Both CCD and CMOS technologies use the photoelectric effect to convert photons (packets of light) into electric signals. Both sensors are also made up of pixel wells that collect the charge generated by these incoming photons. The fundamental difference between the two lies in how they recreate an image from these electric signals.
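As a minimal sketch of the pixel-well idea, assuming an idealized pixel: the mean charge collected is the photon count multiplied by the quantum efficiency (QE, the fraction of photons that become electrons), capped at the well's full-well capacity. The function name and all parameter values below are hypothetical, and the model deliberately ignores photon shot noise, dark current, and read noise.

```python
def photons_to_electrons(photons: float, qe: float, full_well: float) -> float:
    """Mean charge (in electrons) held by an idealized pixel well after exposure.

    qe        -- quantum efficiency, fraction of photons converted (0..1)
    full_well -- hypothetical maximum charge the well can hold
    """
    if not 0.0 <= qe <= 1.0:
        raise ValueError("qe must be between 0 and 1")
    electrons = photons * qe
    # The well cannot hold more than its capacity; extra charge is lost (saturation).
    return min(electrons, full_well)


# Hypothetical pixel: 60 % QE and a 10,000 e- full well.
for photons in (1_000, 10_000, 50_000):
    print(photons, "photons ->", photons_to_electrons(photons, qe=0.6, full_well=10_000), "e-")
```

In the last case the well saturates: once it is full, more light no longer changes the output, which is why very bright regions clip.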

Let us now look at each of these technologies in detail to learn more about their similarities and differences.

CCD (Charge Coupled Device) sensors

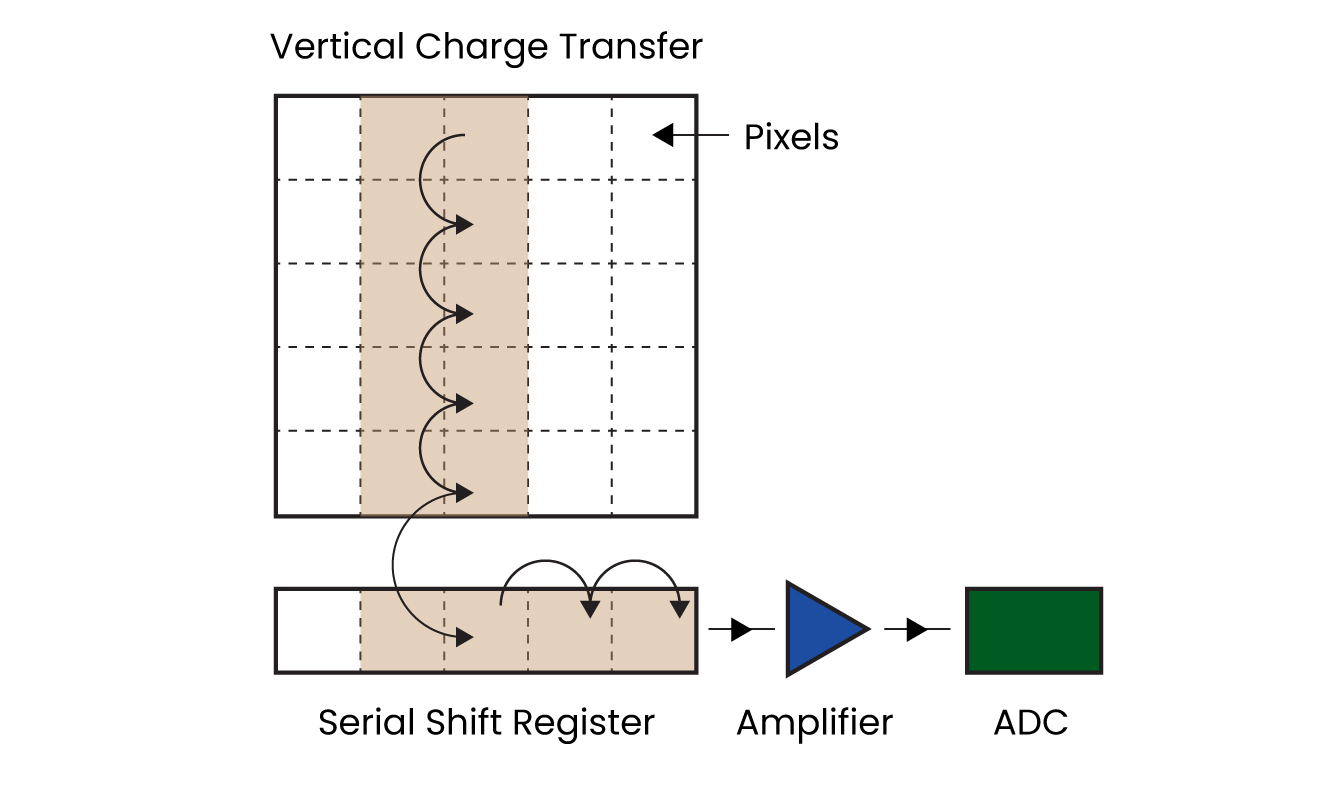

A CCD sensor is an analog device. Below the CCD layer (the pixel array) lies the SSR (Serial Shift Register), whose output end feeds an amplifier followed by an ADC (Analog to Digital Converter). The charge collected in the CCD layer is shifted row by row into the SSR, and from there one pixel at a time through the amplifier and the ADC. Reading out the charge from every pixel site this way recreates the image. Figure 1 illustrates the whole process:

Figure 1: Charge transfer process in CCD sensors
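The charge transfer in Figure 1 can be sketched in a few lines of Python. This is an illustrative model, not any vendor's readout logic: the function name `ccd_readout`, the 12-bit ADC, and the gain and full-scale values are all assumptions. What it shows is that every pixel's charge passes, one after another, through the same single amplifier and ADC.

```python
from typing import List


def ccd_readout(pixels: List[List[float]], gain: float = 1.0, bits: int = 12,
                full_scale: float = 10_000.0) -> List[int]:
    """Simulate CCD readout through ONE shared amplifier and ONE shared ADC.

    pixels     -- charge (electrons) in each pixel, as rows x columns
    gain       -- hypothetical amplifier gain (unitless)
    bits       -- hypothetical ADC resolution
    full_scale -- hypothetical amplifier output that maps to the top ADC code
    Returns digital codes in the order the charge reaches the output node.
    """
    array = [list(row) for row in pixels]  # copy so the caller's frame is untouched
    max_code = (1 << bits) - 1
    codes = []
    while array:
        # Vertical (parallel) transfer: the row nearest the serial register
        # (the last row here) moves into it; the remaining rows shift down.
        serial_register = array.pop()
        # Horizontal (serial) transfer: charge leaves the register one pixel
        # at a time, starting from the end nearest the amplifier.
        while serial_register:
            charge = serial_register.pop()
            voltage = charge * gain                        # shared amplifier
            code = round(voltage / full_scale * max_code)  # shared ADC
            codes.append(max(0, min(code, max_code)))      # clip to the ADC range
    return codes


frame = [[0, 5000], [10000, 2500]]  # hypothetical 2 x 2 frame, in electrons
print(ccd_readout(frame))
```

For this frame the codes come out as `[1024, 4095, 2048, 0]`: the row closest to the serial register first, starting with the pixel nearest the amplifier.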

In a CCD sensor, the charge generated by incoming photons is converted into a voltage at only a limited number of output nodes. This means only a few amplifiers and ADCs are in action, which in turn results in less noise in the output image.
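One way to see why fewer amplifiers can mean less noise is fixed-pattern noise: no two amplifiers have exactly the same gain. The sketch below assumes a hypothetical 2 % gain spread and models only this one noise source (no shot noise, dark current, or read noise). It lights every pixel evenly and measures how much the outputs differ. With a single shared amplifier, as in a CCD, every pixel gets the same gain, so the spread is zero; with one amplifier per pixel, the gain mismatch shows up as pixel-to-pixel variation.

```python
import random
import statistics


def output_spread(n_pixels: int, shared_amplifier: bool, gain_sigma: float = 0.02,
                  signal_e: float = 1000.0, seed: int = 0) -> float:
    """Standard deviation of pixel outputs under perfectly uniform illumination.

    Only amplifier gain mismatch (fixed-pattern noise) is modelled.
    gain_sigma -- hypothetical 1-sigma spread of amplifier gains
    signal_e   -- hypothetical charge collected by every pixel
    """
    rng = random.Random(seed)
    if shared_amplifier:
        # CCD-style: one output amplifier, so every pixel sees the same gain.
        gain = rng.gauss(1.0, gain_sigma)
        outputs = [signal_e * gain] * n_pixels
    else:
        # One amplifier per pixel, each with its own slightly different gain.
        outputs = [signal_e * rng.gauss(1.0, gain_sigma) for _ in range(n_pixels)]
    return statistics.pstdev(outputs)


print("shared amplifier:   ", output_spread(10_000, shared_amplifier=True))
print("per-pixel amplifier:", output_spread(10_000, shared_amplifier=False))
```

Real sensors also have temporal noise sources that this sketch leaves out, so it illustrates one mechanism rather than a full noise budget.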

CMOS sensors

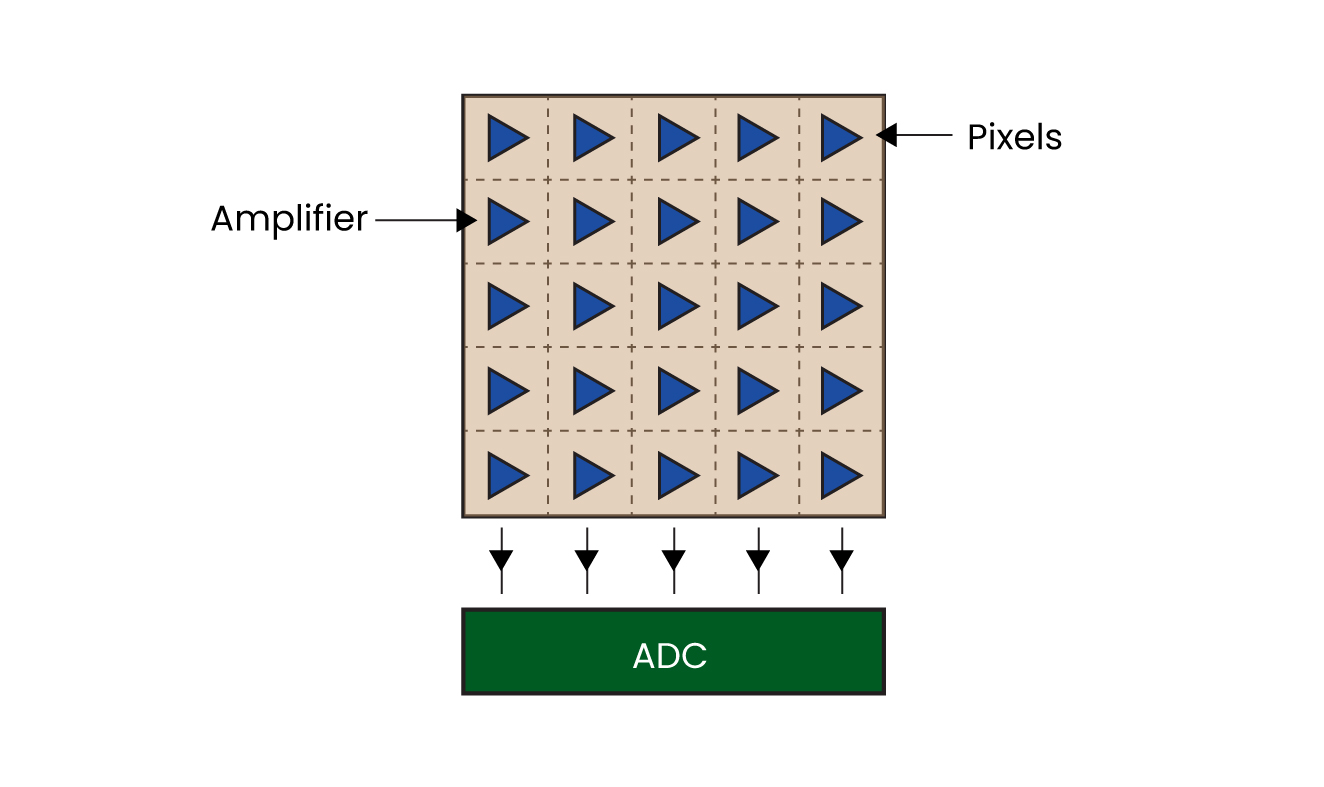

CMOS stands for ‘Complementary Metal Oxide Semiconductor’. The major difference between a CMOS and a CCD sensor is that the former has an amplifier in every pixel. In some CMOS sensor configurations, each pixel has an ADC as well. This results in higher noise compared to a CCD sensor. However, this setup makes it possible to read several sensor pixels simultaneously. In a later section, we will also see how CMOS sensors are matching CCD’s performance despite having this disadvantage.
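The benefit of reading several pixels simultaneously can be shown with simple arithmetic. This sketch assumes readout time equals the pixel count divided by the number of parallel conversion channels and their per-channel rate, and it ignores exposure and blanking time. The function name and the 40 MHz and 100 kHz rates are hypothetical placeholders; the column-parallel case (one converter per column, a common CMOS layout) is used as an example of parallel readout, not as a description of any specific sensor.

```python
import math


def readout_time_s(rows: int, cols: int, channels: int, rate_per_channel_hz: float) -> float:
    """Time to convert a full frame when `channels` converters work in parallel."""
    if channels < 1 or rate_per_channel_hz <= 0:
        raise ValueError("need at least one channel and a positive rate")
    pixels_per_channel = math.ceil(rows * cols / channels)
    return pixels_per_channel / rate_per_channel_hz


ROWS, COLS = 1080, 1920  # a Full HD frame

# CCD-style: one output node handles every pixel (hypothetical 40 MHz).
serial = readout_time_s(ROWS, COLS, channels=1, rate_per_channel_hz=40e6)
# Column-parallel: one converter per column (hypothetical 100 kHz each).
parallel = readout_time_s(ROWS, COLS, channels=COLS, rate_per_channel_hz=100e3)

for name, t in (("serial", serial), ("column-parallel", parallel)):
    print(f"{name:>15}: {t * 1e3:.2f} ms per frame, at most {1 / t:.0f} fps")
```

Even though each column converter in this example runs far slower than the single serial output, converting a whole row at once makes the frame readout several times faster.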

To understand the architecture of a CMOS sensor, see Figure 2:

Figure 2: CMOS sensor architecture

Key differences between CCD and CMOS sensors

A one-to-one comparison of CCD and CMOS sensors can be made along five parameters:

- Sensitivity: Until recently, CCD had an edge over CMOS for imaging in low-light conditions and in the NIR (Near Infrared) region (low-light and NIR imaging are discussed in more detail in the next section). However, advancements in CMOS sensor technology are helping it close the gap with CCD sensors.

- Noise and image quality: Having fewer ADCs and amplifiers helps CCD sensors deliver images with less noise than CMOS sensors. However, sensor manufacturers keep coming up with innovative techniques to enhance the image quality of CMOS sensors.

- Power consumption: CMOS sensors draw less power than CCD sensors, which is particularly useful in embedded vision systems that contain other power-hungry components.

- Supply chain and availability: With more sensor manufacturers moving away from CCD technology, the availability of these sensors has dropped drastically in the last few years. If you are looking for a CCD supplier, you are also likely to find far fewer options than the large number of vendors offering CMOS sensors.

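- Cost: CMOS easily wins the race when it comes to cost, helped by the fact that CMOS sensors are built on standard semiconductor fabrication processes and can integrate their readout electronics on the same chip.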
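To see why a sensor's power draw matters when other components are also power-hungry, a quick battery-life estimate helps. All wattages and the battery capacity below are hypothetical placeholders, not measured figures for any CCD or CMOS sensor; the estimate simply divides battery energy by the total average load.

```python
from typing import Mapping


def battery_life_hours(battery_wh: float, loads_w: Mapping[str, float]) -> float:
    """Runtime estimate: battery energy (Wh) divided by total average draw (W)."""
    total_w = sum(loads_w.values())
    if total_w <= 0:
        raise ValueError("total load must be positive")
    return battery_wh / total_w


BATTERY_WH = 20.0                              # hypothetical battery capacity
base_loads = {"processor": 3.0, "other": 1.0}  # hypothetical non-sensor loads, in watts

for label, sensor_w in (("higher-power sensor", 1.0), ("lower-power sensor", 0.3)):
    loads = {**base_loads, "sensor": sensor_w}
    print(f"{label}: {battery_life_hours(BATTERY_WH, loads):.2f} h")
```

Because the sensor is only one item in the total, its savings translate into runtime in proportion to its share of the system's power budget.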

CCD sensors and CMOS sensors for low light and NIR imaging

CCD sensors remained the natural choice for many product developers for a very long time when building camera-based devices that need to operate in low light or under an IR/NIR light source. This was especially true at higher temperatures, where CMOS sensors needed an additional cooler to keep thermally generated noise (dark current) low enough for low-light imaging. CCD sensors also offered the flexibility of a thicker substrate layer, which absorbs more photons in the NIR spectrum and therefore raises the sensor's QE (Quantum Efficiency, a measure of a sensor's sensitivity) at those wavelengths. But recent developments in CMOS sensor technology have produced sensors that offer better sensitivity than traditional CCD sensors. For instance, the STARVIS series from Sony includes a wide variety of sensors with superior low-light performance and NIR sensitivity.
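The thicker-substrate argument follows from the Beer-Lambert law: the fraction of photons absorbed in a layer of thickness d is 1 - exp(-α·d), where α is the material's absorption coefficient. Silicon absorbs NIR light weakly (small α), so a thin layer lets many NIR photons pass through without generating charge. The sketch below assumes α = 500 cm⁻¹ as a rough, illustrative value for silicon near 850 nm (check a published absorption table before relying on it) and ignores surface reflection, so its result is only an upper bound on QE from absorption alone.

```python
import math


def absorbed_fraction(thickness_um: float, alpha_per_cm: float) -> float:
    """Fraction of photons absorbed in a layer (Beer-Lambert law).

    Ignores surface reflection and assumes every absorbed photon yields
    charge, so this is an upper bound on QE for that layer.
    """
    thickness_cm = thickness_um * 1e-4  # 1 um = 1e-4 cm
    return 1.0 - math.exp(-alpha_per_cm * thickness_cm)


ALPHA_NIR = 500.0  # ASSUMED absorption coefficient for silicon near 850 nm, in 1/cm

for t_um in (3, 10, 50):
    print(f"{t_um:>3} um layer: {absorbed_fraction(t_um, ALPHA_NIR):.0%} of NIR photons absorbed")
```

Under this assumption, moving from a few microns to tens of microns of silicon turns a small fraction of absorbed NIR photons into most of them, which is why a thicker substrate helps NIR sensitivity.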

Why CMOS sensors are winning the race in embedded vision

As discussed before, CMOS cameras are catching up with CCD cameras on most imaging parameters. Reduced costs with matching performance are encouraging more and more product developers to pick CMOS sensors over CCD sensors. For the same reason, sensor manufacturers are gradually moving away from developing new CCD sensors, so little research or advancement is happening in the space. This creates a cascade effect that keeps reducing the popularity of CCD sensors over time.

Moreover, many imaging applications, such as medical microscopy, that stayed with CCD much longer than other embedded vision applications have also joined the ‘CMOS wave’. In addition to their power consumption advantages, CMOS sensors also tend to offer higher frame rates and better dynamic range. This has led embedded camera companies to build cutting-edge camera solutions around CMOS sensors. For instance, e-con Systems’ wide portfolio of CMOS cameras includes a 16MP autofocus USB camera, a 4K HDR camera, a global shutter camera module, an IP67-rated Full HD GMSL2 HDR camera module, an IP66-rated AI smart camera, and much more (to get a complete view of e-con Systems’ CMOS camera portfolio, please visit our Camera Selector).
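A common way to quantify a sensor's single-exposure dynamic range is the ratio of its full-well capacity to its read noise (both in electrons), expressed in decibels as 20·log10(full_well / read_noise) or in photographic stops as log2 of the same ratio. The sketch below applies that definition; the 10,000 e⁻ full well and 2 e⁻ read noise are hypothetical values. HDR sensors extend the usable range beyond this single-exposure figure, for example by combining several exposures.

```python
import math
from typing import Tuple


def dynamic_range(full_well_e: float, read_noise_e: float) -> Tuple[float, float]:
    """Return (dB, stops) for a single exposure: full well over read noise."""
    if full_well_e <= 0 or read_noise_e <= 0:
        raise ValueError("full well and read noise must be positive")
    ratio = full_well_e / read_noise_e
    return 20 * math.log10(ratio), math.log2(ratio)


db, stops = dynamic_range(full_well_e=10_000, read_noise_e=2.0)  # hypothetical sensor
print(f"dynamic range: {db:.1f} dB ({stops:.1f} stops)")
```

Lowering read noise raises dynamic range just as effectively as enlarging the well, which is one lever CMOS designs use.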

Considering all this, it is no surprise that CMOS cameras rule the kingdom of embedded vision.

CCD sensors and CMOS sensors – what the future holds

With further development stalled, CCD sensors are likely to disappear soon. In fact, many sensor manufacturers stopped producing CCD sensors years ago and are merely continuing to support existing customers who use them.

While things look dark on the CCD side, the future looks bright for CMOS sensors. From global shutter to extreme low-light to high-resolution cameras, CMOS technology is advancing fast. With leading sensor manufacturers such as Sony, Onsemi, and OmniVision putting more focus on enhancing the sensitivity, resolution, dynamic range, and power efficiency of CMOS sensors, innovation in the space is happening at lightning speed.

We hope this article helped you develop a good understanding of CMOS and CCD technologies and the key differences between the two types of sensors. If you have any further queries on the topic or are looking for help integrating a CMOS camera into your vision-based application, please write to us at [email protected].

Prabu Kumar

Chief Technology Officer and Head of Camera Products, e-con Systems