Robotics Applications for Embedded Vision

R²D²: Unlocking Robotic Assembly and Contact Rich Manipulation with NVIDIA Research

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. This edition of NVIDIA Robotics Research and Development Digest (R2D2) explores several contact-rich manipulation workflows for robotic assembly tasks from NVIDIA Research and how they can address key challenges with fixed automation, such as robustness, adaptability, and

Humanoid Robots Acting Up

A recent incident in China in which a humanoid robot acted up highlights how much room there is for further development in this technology sector. IDTechEx’s “Humanoid Robots 2025-2035: Technologies, Markets and Opportunities” report explores the growing need for strict regulation, alongside the developments that could make this promising technology a success. Showcased by the surprise of

NVIDIA Powers Humanoid Robot Industry With Cloud-to-robot Computing Platforms for Physical AI

New NVIDIA Isaac GR00T Humanoid Open Models Soon Available for Download on Hugging Face. GR00T-Dreams Blueprint Generates Data to Train Humanoid Robot Reasoning and Behavior. NVIDIA RTX PRO 6000 Blackwell Workstations and RTX PRO Servers Accelerate Robot Simulation and Training. Agility Robotics, Boston Dynamics, Foxconn, Lightwheel, NEURA Robotics and XPENG Robotics Among Many Robot Makers Adopting NVIDIA Isaac. COMPUTEX – NVIDIA today announced NVIDIA

D3 Embedded to Showcase Robotic Perception and Generative AI Solutions at the Embedded Vision Summit

D3 Embedded to demonstrate real-time solutions integrating cameras and 3D sensors, robust connectivity, embedded processing, and generative AI at the Embedded Vision Summit. Rochester, NY – May 15, 2025 – D3 Embedded announced today it will exhibit at the 2025 Embedded Vision Summit, the premier event for practical, deployable computer vision and AI, for product

SENSING Tech to Debut Three Advanced Vision Solutions at Embedded Vision Summit

May 16, 2025 – SENSING Tech will debut three new visual perception solutions at the upcoming Embedded Vision Summit USA, taking place from May 20 to 22 at the Santa Clara Convention Center. Reflecting the company’s ongoing commitment to imaging innovation, the new lineup includes an 8MP HDR/LFM Camera, a Defrosting & Deicing HDR Camera

What is the Role of Cameras in Pick and Place Robots?

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. Pick and place robots perform repetitive handling tasks with speed and consistency, making them invaluable across industries. These robots depend heavily on the right camera setup. Get insights about the challenges faced by cameras, their

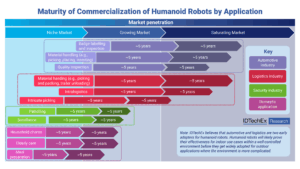

Fast Growth of Humanoid Robots in the Automotive & Logistics Industry

Timeline for humanoid robots being adopted for different industries. Historically, the automotive and logistics sectors have led the charge in adopting automation and robotics, ranging from mobile robots and industrial arms to collaborative robots. Now, in a similar trend, automotive OEMs and warehouse operators are once again taking the lead, this time with humanoid robots.

R²D²: Adapting Dexterous Robots with NVIDIA Research Workflows and Models

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Robotic arms are used today for assembly, packaging, inspection, and many more applications. However, they are still preprogrammed to perform specific and often repetitive tasks. To meet the increasing need for adaptability in most environments, perceptive arms

Humanoid Robots 2025-2035: Technologies, Markets and Opportunities

For more information, visit https://www.idtechex.com/en/research-report/humanoid-robots/1093. IDTechEx forecasts that the humanoid robot market will reach around US$30 billion by 2035. Humanoid robots are widely regarded as “Artificial Intelligence Embodied in the Real World,” especially following the surge in AI advancements such as ChatGPT over the past two years. From IDTechEx’s research and analysis, the market has seen a

Collaborative Robots Drive Workforce Return in Auto Industry

Analysis of cobot market penetration by task and industry. Collaborative robots (cobots), a type of lightweight, slow-moving robot designed to work next to human operators without a physical fence, have gained significant momentum thanks to their flexibility, initiatives to bring humans back into factories, Industry 5.0, and many announcements from

Vision Components MIPI Bricks: Modular System for Plug and Play Embedded Vision

Vision Components at Robotics Summit & Expo. Ettlingen, April 15, 2025 – Vision Components presents its new VC MIPI Bricks system at the Robotics Summit & Expo in Boston, April 30 – May 1. VC MIPI Bricks is a modular system based on perfectly matching components and comprises camera modules, accessories and services, right through to ready-to-use

Using AI to Better Understand the Ocean

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Humans know more about deep space than we know about Earth’s deepest oceans. But scientists have plans to change that—with the help of AI. “We have better maps of Mars than we do of our own exclusive

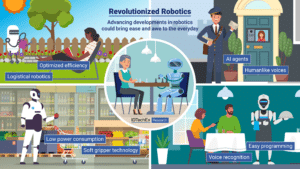

Futuristic Robotics: Robodogs and Gardening Experts

Robots mastering the weekly shop could soon free up time at the weekends. With the introduction of collaborative robots and AI agents, household chores could also become a display of the latest intelligent robotics technology. From companionship robots that engage in conversation and play board games to cars that drive themselves safely using radar and

R²D²: Advancing Robot Mobility and Whole-body Control with Novel Workflows and AI Foundation Models from NVIDIA Research

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Welcome to the first edition of the NVIDIA Robotics Research and Development Digest (R2D2). This technical blog series will give developers and researchers deeper insight and access to the latest physical AI and robotics research breakthroughs across

RGo Robotics Implements Vision-based Perception Engine on Qualcomm SoCs for Robotics Market

This blog post was originally published at Qualcomm’s website. It is reprinted here with the permission of Qualcomm. Mobile robotics developers equip their machines to behave autonomously in the real world by generating facility maps, localizing within them and understanding the geometry of their surroundings. Machines like autonomous mobile robots (AMRs), automated guided vehicles (AGVs)

NVIDIA Announces Isaac GR00T N1 — the World’s First Open Humanoid Robot Foundation Model — and Simulation Frameworks to Speed Robot Development

Now Available, Fully Customizable Foundation Model Brings Generalized Skills and Reasoning to Humanoid Robots. NVIDIA, Google DeepMind and Disney Research Collaborate to Develop Next-Generation Open-Source Newton Physics Engine. New Omniverse Blueprint for Synthetic Data Generation and Open-Source Dataset Jumpstart Physical AI Data Flywheel. March 18, 2025 – GTC – NVIDIA today announced a portfolio of technologies to supercharge humanoid

NVIDIA Announces Major Release of Cosmos World Foundation Models and Physical AI Data Tools

New Models Enable Prediction, Controllable World Generation and Reasoning for Physical AI. Two New Blueprints Deliver Massive Physical AI Synthetic Data Generation for Robot and Autonomous Vehicle Post-Training. 1X, Agility Robotics, Figure AI, Skild AI Among Early Adopters. March 18, 2025 – GTC – NVIDIA today announced a major release of new NVIDIA Cosmos™ world foundation models (WFMs), introducing

NVIDIA Unveils Open Physical AI Dataset to Advance Robotics and Autonomous Vehicle Development

Expected to become the world’s largest such dataset, the initial release of standardized synthetic data is now available to robotics developers as open source. Teaching autonomous robots and vehicles how to interact with the physical world requires vast amounts of high-quality data. To give researchers and developers a head start, NVIDIA is releasing a massive,