This blog post was originally published at AImotive’s website. It is reprinted here with the permission of AImotive.

The aiSim team has adapted the Hardware-In-the-Loop testing approach with a unique spin on the concept: complete automated driving systems can be tested with live simulated sensor data, without any prerecorded footage. In this post, we share some insights into the benefits and challenges of introducing this powerful and exciting new feature to aiSim.

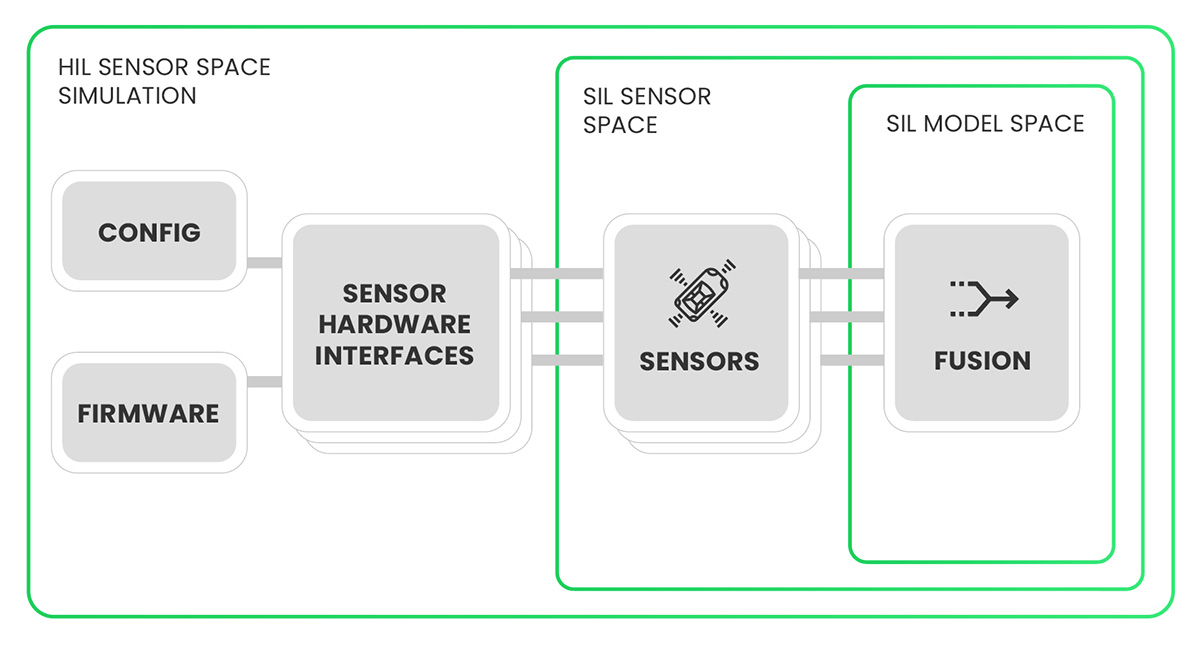

aiSim started development on the premise that extensive testing is essential to ensure the safety and reliability of any Automated Driving product. aiSim not only simulates the model space but also generates accurate virtual sensor data, including camera images. All of this can run in real time and in closed loop, reacting to live control signals, even for the complex sensor setups required for L4 robotaxis.

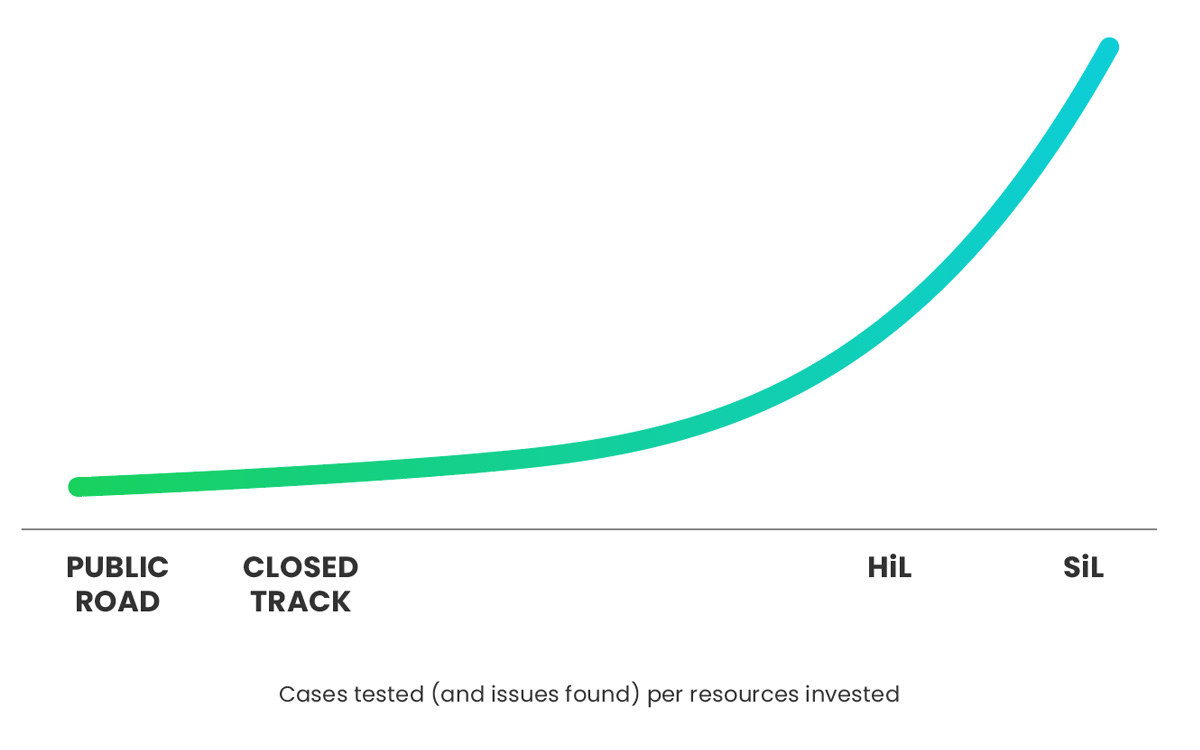

aiSim has large-scale Software-In-the-Loop (SiL) testing capabilities. For SiL, integrating with Automated Driving (AD) software means that the program modules that interface with hardware are replaced with modules that interface with the simulated world. This reduces hardware reliance at runtime, which feeds into the flexible test deployment and scalability that SiL simulator testing is known for.
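The module swap described above can be pictured with a small sketch. This is not aiSim's or aiDrive's actual API; `Frame`, `CameraSource`, `SimulatedCamera`, and `mean_brightness` are hypothetical names chosen for illustration. The point is only that the driving code depends on an interface, so a simulated source can stand in for a hardware driver.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Frame:
    timestamp_s: float
    pixels: bytes


class CameraSource(Protocol):
    """What the driving software needs from a camera, real or simulated."""

    def read_frame(self) -> Frame: ...


class SimulatedCamera:
    """Hypothetical SiL stand-in that produces frames without any hardware driver."""

    def __init__(self, width: int, height: int, fps: float) -> None:
        self._size = width * height
        self._dt = 1.0 / fps
        self._t = 0.0

    def read_frame(self) -> Frame:
        # Placeholder image: one zero byte per pixel (all black).
        frame = Frame(self._t, bytes(self._size))
        self._t += self._dt
        return frame


def mean_brightness(source: CameraSource) -> float:
    """Toy 'perception' step that only depends on the CameraSource interface."""
    frame = source.read_frame()
    return sum(frame.pixels) / len(frame.pixels)
```

In a vehicle, a class wrapping the real camera driver would implement the same `read_frame` method. That hardware-facing class is exactly the kind of code a SiL run never executes.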

With SiL testing, we can cover most of an AD codebase. However, the rest (the replaced hardware interfacing modules, supporting firmware, and hardware configuration parameters) has been impossible to test as part of a complete system without a real vehicle. Even in a vehicle, these parts can be challenging to test and require careful execution to cover all code paths consistently.

This was the key motivation for adapting our simulation for end-to-end Hardware-In-the-Loop (HiL) testing: to expand our capabilities toward maximal code coverage. This approach retains many of the biggest advantages of simulator testing, namely precise reproducibility and the opportunity for automation (even in Continuous Integration pipelines) when installed in a lab or server room. It also retains the more exotic features, such as controlled fault injection into the simulated data.
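To illustrate how fault injection and reproducibility can go together, here is a minimal sketch. It does not reflect how aiSim implements fault injection; the function name `inject_dropped_frames` and its parameters are assumptions made for this example. A seeded random generator decides which simulated frames are dropped, so the same seed always produces the same fault pattern.

```python
import random
from typing import List, Optional, Sequence, TypeVar

T = TypeVar("T")


def inject_dropped_frames(
    frames: Sequence[T], drop_probability: float, seed: int
) -> List[Optional[T]]:
    """Replace some frames with None, deterministically for a given seed."""
    rng = random.Random(seed)  # private generator, so global random state is untouched
    return [None if rng.random() < drop_probability else f for f in frames]
```

Because the fault pattern depends only on the seed, a failing test run can be repeated exactly, which is much harder to arrange with a fault that occurs on real hardware.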

From the beginning of our HiL development with aiSim, our internal HiL setups have modeled real, complex vehicle and sensor setups that are in active development and active use within aiMotive. This poses the challenge of adapting to the intricacies of a real-world hardware system, but it also ensures that the data we produce can satisfy such an AD setup. Luckily, we also have the in-house experience and know-how to rise to these challenges. This highlights the potential in aiMotive’s unique company structure, and HiL perfectly demonstrates the synergy of the company’s departments:

- aiSim department, providing a reliable simulation platform running in real-time

- aiDrive department, developing a versatile self-driving software stack, posing as an ever-present in-house ‘customer’ for aiSim, also providing detailed feedback and internal know-how

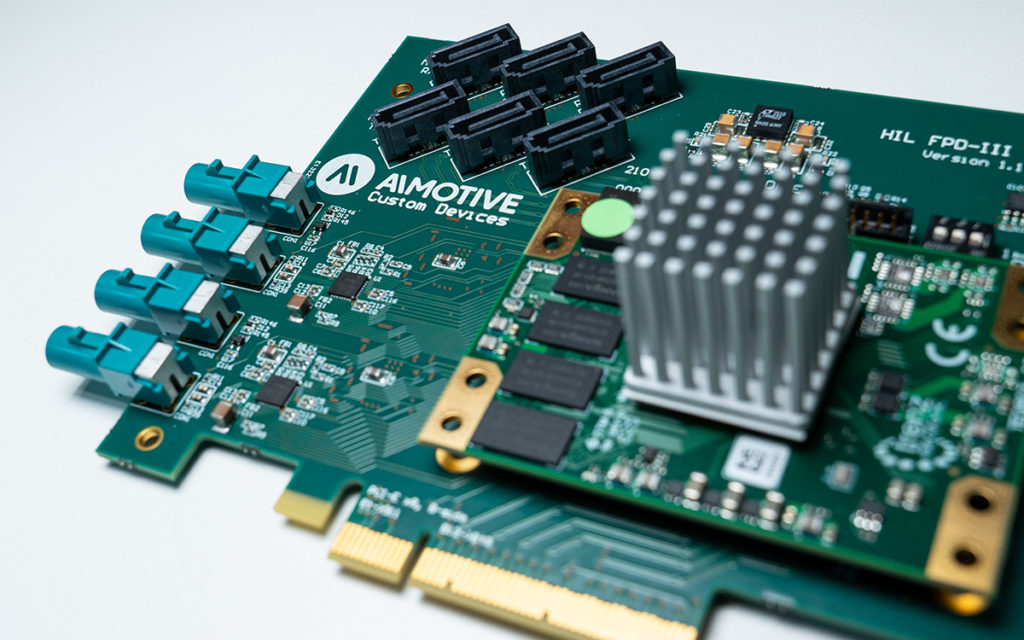

- Hardware department can design and produce custom hardware equipment

The last point refers to the HiL camera serializer cards developed by our custom devices team. We already use the prototypes of these cards in our HiL setups. Using this hardware with aiSim enables us to create and efficiently transfer multiple synthetic, live, high-resolution camera feeds while reacting to vehicle control signals. The result is a closed-loop HiL camera simulation, which would be impossible with offline-rendered or prerecorded footage.
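A toy example may help show why closed-loop testing cannot rely on prerecorded data. The sketch below has nothing to do with cameras or with aiSim's internals; `simulate_closed_loop` and its parameters are hypothetical. It models a single speed value, where each "sensor" reading depends on the control signals issued in earlier steps.

```python
from typing import List


def simulate_closed_loop(
    target_speed: float, steps: int, dt: float = 0.1, gain: float = 0.5
) -> List[float]:
    """Run a minimal sense -> control -> simulate loop and return the speed history."""
    speed = 0.0
    history = []
    for _ in range(steps):
        measured = speed                          # sensor data generated from the current sim state
        accel = gain * (target_speed - measured)  # controller reacts to the live measurement
        speed += accel * dt                       # simulator applies the control signal
        history.append(speed)
    return history
```

If the controller's gain changes, every later measurement changes too. A recording made with one controller therefore cannot represent what another controller would have sensed, which is why the sensor data has to be generated live.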

As with all things in engineering, choosing a testing approach is an exercise in balancing tradeoffs. Testing systems as complex as Automated Driving products will always require a combination of multiple approaches. In-vehicle testing will always be the most authentic source of data, while SiL testing is still unmatched in scalability and flexibility. With HiL, you can further increase testing yields to detect issues sooner, with more precise feedback to developers, even on the components that are most difficult to test.

The ultimate solution is to integrate all testing activities into a single automated toolchain that provides maximal coverage for automated driving systems. You can read more about this topic in an upcoming blog post.

Gábor Könyvesi

AImotive