Steve Teig, CEO of Perceive, presents the “TinyML Isn’t Thinking Big Enough” tutorial at the May 2021 Embedded Vision Summit.

Today, TinyML focuses primarily on shoehorning neural networks onto microcontrollers or small CPUs, but it misses the opportunity to transform all of ML because of two unfortunate assumptions: first, that tiny models must make significant performance and accuracy compromises to fit inside edge devices, and second, that tiny models should run on CPUs or microcontrollers.

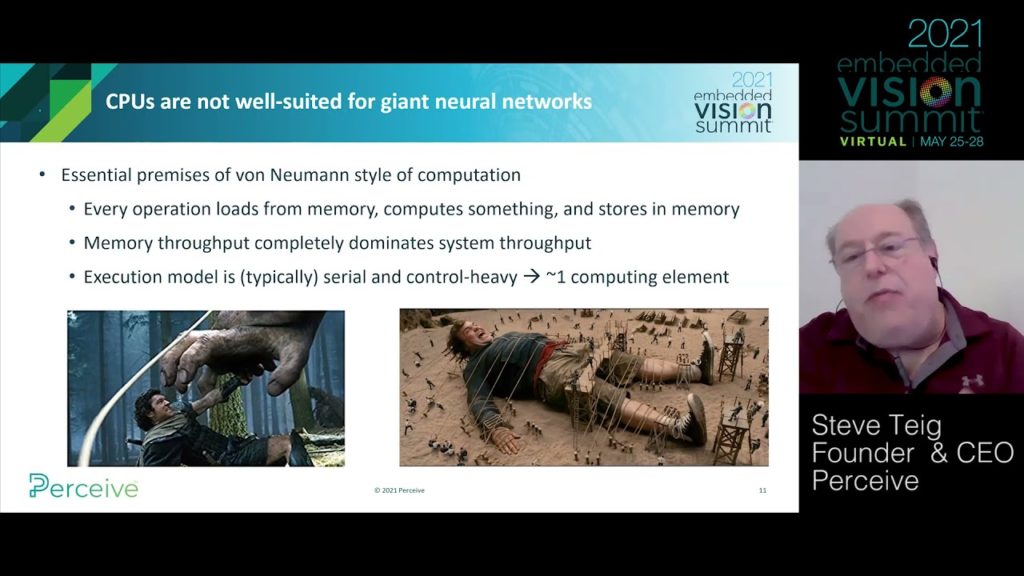

Regarding the first assumption, information-theoretic considerations would suggest that principled compression (as opposed to, say, just replacing 32-bit weights with 8-bit weights) should make models more accurate, not less. Regarding the second, CPUs are saddled with an intrinsically power-inefficient memory model and mostly serial computation, whereas the evident parallelism of neural networks naturally leads to high-performance, power-efficient, massively parallel inference hardware. By upending these assumptions, TinyML can revolutionize all of ML, and not just inside microcontrollers.
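For readers unfamiliar with the baseline being contrasted here, the following is a minimal sketch of what "just replacing 32-bit weights with 8-bit weights" can look like in practice. It assumes a symmetric, per-tensor scheme, which is only one common choice; the function names `quantize_int8` and `dequantize` and the sample weights are hypothetical, and nothing here is taken from the talk or from Perceive's approach.

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] using one shared scale.

    Assumption: symmetric per-tensor quantization, chosen for simplicity.
    """
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale would do.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the 8-bit values."""
    return [v * scale for v in quantized]


# Hypothetical weights, used only to make the rounding error visible.
weights = [0.82, -0.41, 0.05, -1.27, 0.0031]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Worst-case error introduced by rounding each weight to the 8-bit grid.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("quantized:", q)
print("scale:", scale)
print("max abs error:", max_error)
```

Each weight is rounded to the nearest point on a uniform grid, so the per-weight error is bounded by half the scale regardless of how much that weight matters to the model. That indifference to the model's structure is what separates this kind of bit-width reduction from the principled compression the abstract argues for.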

See here for a PDF of the slides.