Sabina Pokhrel, Customer Success AI Engineer at Xailient, presents the “Introduction to DNN Model Compression Techniques” tutorial at the May 2021 Embedded Vision Summit.

Deploying real-time, large-scale deep learning vision applications at the edge is challenging because of their heavy computational, memory, and bandwidth requirements. System architects can mitigate these demands by applying model compression techniques that make deep neural networks more energy efficient and less demanding of processing resources.

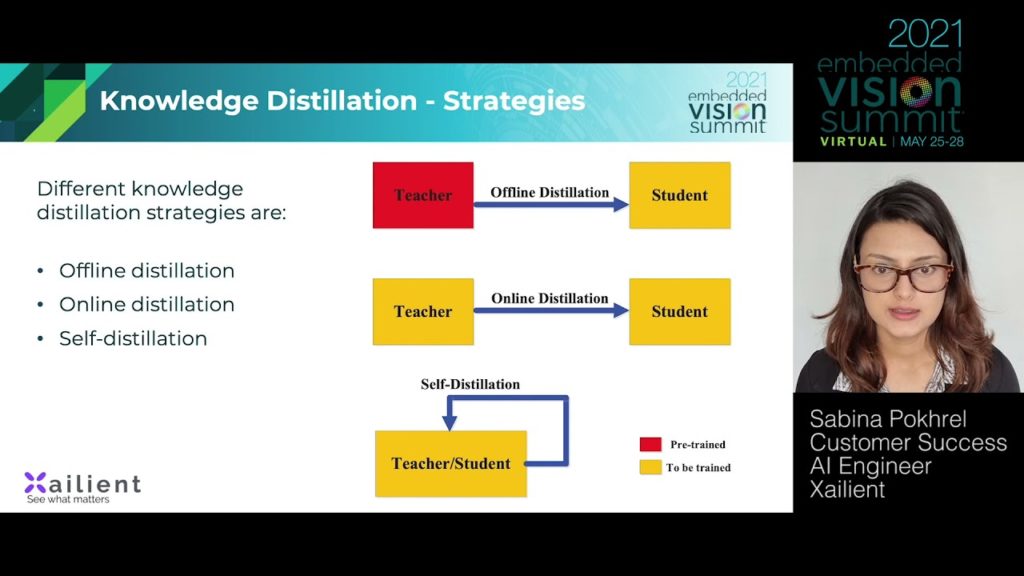

In this talk, Pokhrel introduces four established model compression techniques: network pruning, quantization, knowledge distillation, and low-rank factorization.
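To give a rough feel for two of these techniques, here is a minimal pure-Python sketch of magnitude-based pruning and 8-bit affine quantization applied to a flat list of weights. It is not taken from the talk or slides: the function names (`prune_by_magnitude`, `quantize_uint8`, `dequantize`), the sample weights, and the 50% sparsity target are all illustrative assumptions, and production frameworks typically operate on whole tensors with more careful calibration.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)  # number of weights to drop
    if k == 0:
        return list(weights)
    # Indices of the k smallest-magnitude weights
    by_magnitude = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(by_magnitude[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_uint8(weights):
    """Map floats to integer codes in [0, 255]; return (codes, scale, offset)."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / 255 or 1.0  # fall back to 1.0 if all weights are equal
    codes = [round((w - lo) / scale) for w in weights]
    return codes, scale, lo


def dequantize(codes, scale, offset):
    """Recover approximate float weights from the integer codes."""
    return [c * scale + offset for c in codes]


# Hypothetical weights, chosen only for illustration
weights = [0.8, -0.05, 0.3, -0.9, 0.02, 0.5]

pruned = prune_by_magnitude(weights, sparsity=0.5)
codes, scale, offset = quantize_uint8(weights)
restored = dequantize(codes, scale, offset)

print(pruned)    # half of the entries are now exactly zero
print(codes)     # each weight stored as one byte instead of a float
print(restored)  # close to the original weights, within scale / 2
```

Pruning trades accuracy for sparsity by discarding the weights that contribute least, while quantization trades precision for storage by representing each weight with fewer bits. Knowledge distillation and low-rank factorization involve a training loop and a matrix decomposition, respectively, and are not sketched here.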

See here for a PDF of the slides.