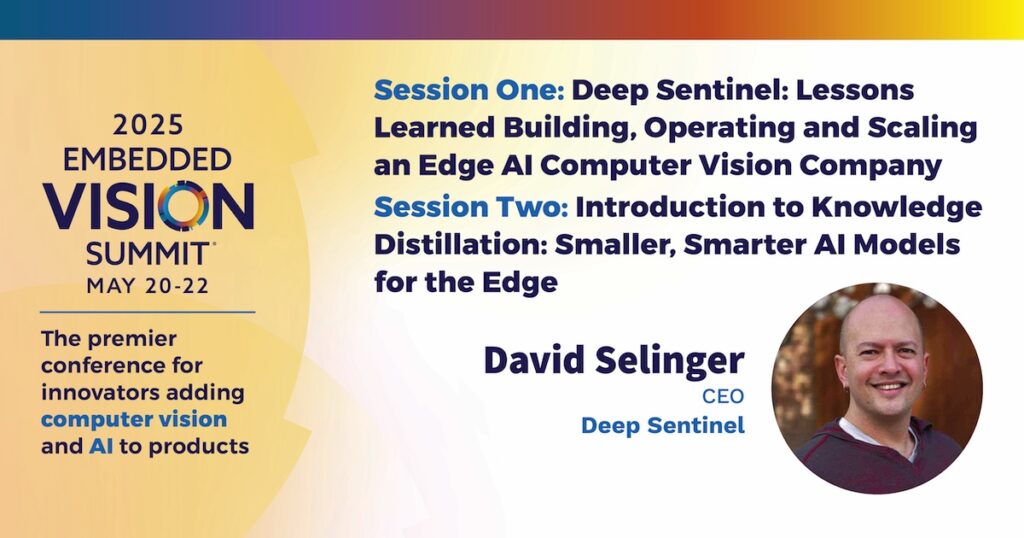

David Selinger, CEO of Deep Sentinel, presents the “Introduction to Knowledge Distillation: Smaller, Smarter AI Models for the Edge” tutorial at the May 2025 Embedded Vision Summit.

As edge computing demands smaller, more efficient models, knowledge distillation emerges as a key approach to model compression. In this presentation, Selinger delves into the details of this process, exploring what knowledge distillation entails and the requirements for its implementation, including dataset size and tools.

Selinger examines when to use knowledge distillation, weighs its pros and cons, and showcases examples of successfully distilled models. Based on performance data highlighting the benefits of distillation, he concludes that knowledge distillation is a powerful tool for creating smaller, smarter models that thrive at the edge.

See here for a PDF of the slides.