Automotive Applications for Embedded Vision

Vision products in automotive applications can make us better and safer drivers

Vision products in automotive applications can enhance the driving experience, making us better and safer drivers through both driver and road monitoring.

Driver monitoring applications use computer vision to ensure that the driver remains alert and awake while operating the vehicle. These systems can monitor head movement and body language for indications that the driver is drowsy and thus a threat to others on the road. They can also watch for distracted-driving behaviors such as texting or eating, responding with a friendly reminder that encourages the driver to focus on the road instead.

In addition to monitoring activities occurring inside the vehicle, exterior applications such as lane departure warning systems can use video with lane detection algorithms to recognize the lane markings and road edges and estimate the position of the car within the lane. The driver can then be warned in cases of unintentional lane departure. Solutions exist to read roadside warning signs and to alert the driver if they are not heeded, as well as for collision mitigation, blind spot detection, park and reverse assist, self-parking vehicles and event-data recording.
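The lane departure logic described above can be sketched in a few lines. This is a minimal illustration, not a production algorithm: it assumes an upstream lane detection step has already produced the lateral distance from the vehicle centerline to each lane marking, and the names `LaneObservation`, `lateral_offset`, `should_warn`, the 1.8 m vehicle width, and the 0.2 m warning margin are all hypothetical choices made for this example.

```python
from dataclasses import dataclass


@dataclass
class LaneObservation:
    """Lateral distances in meters from the vehicle centerline to each marking.

    Hypothetical output of an upstream lane detection step; both values
    are positive while the vehicle centerline is inside the lane.
    """
    left_m: float
    right_m: float


def lateral_offset(obs: LaneObservation) -> float:
    """Offset of the vehicle center from the lane center.

    Positive means the vehicle sits to the right of the lane center.
    """
    return (obs.left_m - obs.right_m) / 2.0


def should_warn(
    obs: LaneObservation,
    vehicle_width_m: float = 1.8,   # assumed vehicle width
    margin_m: float = 0.2,          # assumed warning margin
    turn_signal_on: bool = False,
) -> bool:
    """Return True when the drift toward a marking looks unintentional.

    An active turn signal is treated as an intentional lane change,
    so no warning is raised in that case.
    """
    if turn_signal_on:
        return False
    half_width = vehicle_width_m / 2.0
    # Gap between each side of the vehicle body and the nearest marking.
    left_gap = obs.left_m - half_width
    right_gap = obs.right_m - half_width
    return min(left_gap, right_gap) < margin_m


if __name__ == "__main__":
    centered = LaneObservation(left_m=1.8, right_m=1.8)
    drifting = LaneObservation(left_m=2.6, right_m=1.0)
    print(lateral_offset(centered), should_warn(centered))
    print(lateral_offset(drifting), should_warn(drifting))
```

Treating the turn signal as a proxy for driver intent is a simplification made here for illustration; a real system would also need to handle missing or low-confidence lane detections, which this sketch ignores.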

Eventually, this technology will lead to cars with self-driving capability; Google, for example, is already testing prototypes. However, many automotive industry experts believe that the goal of vision in vehicles is not so much to eliminate the driving experience as to make it safer, at least in the near term.

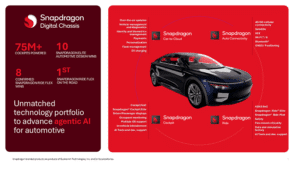

Qualcomm Drives the Future of Mobility with Strong Snapdragon Digital Chassis Momentum and Agentic AI for Major Global Automakers Worldwide

Key Takeaways: Qualcomm extends its automotive leadership with new collaborations, including Google, to power next‑gen software‑defined vehicles and agentic AI‑driven personalization. Snapdragon Ride and Cockpit Elite Platforms, and Snapdragon Ride Flex SoC, see rapid adoption, adding new design-wins and delivering the industry’s first commercialized mixed‑criticality platform that integrates cockpit, advanced driver‑assistance systems, and end‑to‑end AI. Decade of in-vehicle infotainment

SiMa.ai Announces First Integrated Capability with Synopsys to Accelerate Automotive Physical AI Development

San Jose, California – January 6, 2026 – SiMa.ai today announced the first integrated capability resulting from its strategic collaboration with Synopsys. The joint solution provides a blueprint to accelerate architecture exploration and early virtual software development for AI-ready, next-generation automotive SoCs that support applications such as Advanced Driver Assistance Systems (ADAS) and In-vehicle-Infotainment

D3 Embedded Showcases Camera/Radar Fusion, ADAS Cameras, Driver Monitoring, and LWIR solutions at CES

Las Vegas, NV, January 7, 2026 — D3 Embedded is showcasing a suite of technology solutions in partnership with fellow Edge AI and Vision Alliance Members HTEC, STMicroelectronics and Texas Instruments at CES 2026. Solutions include driver and in-cabin monitoring, ADAS, surveillance, targeting and human tracking – and will be viewable at different locations within

NVIDIA DRIVE AV Software Debuts in All-New Mercedes-Benz CLA

This post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Production launch of enhanced level 2 driver-assistance system in the US this year signals start of broader rollout of NVIDIA’s full-stack software across the automotive industry. NVIDIA is enabling a new era of AI-defined driving, bringing its NVIDIA

NVIDIA Unveils New Open Models, Data and Tools to Advance AI Across Every Industry

This post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Expanding the open model universe, NVIDIA today released new open models, data and tools to advance AI across every industry. These models — spanning the NVIDIA Nemotron family for agentic AI, the NVIDIA Cosmos platform for physical AI, the new NVIDIA Alpamayo family for autonomous vehicle

TI Accelerates the Shift Toward Autonomous Vehicles with Expanded Automotive Portfolio

New analog and embedded processing technologies from TI enable automakers to deliver smarter, safer and more connected driving experiences across their entire vehicle fleet Key Takeaways: TI’s newest family of high-performance computing SoCs delivers safe, efficient edge AI performance up to 1200 TOPS with a proprietary NPU and chiplet-ready design. Automakers can simplify radar designs and

Synopsys Showcases Vision For AI-Driven, Software-Defined Automotive Engineering at CES 2026

Synopsys solutions accelerate innovation from systems to silicon, enabling more than 90% of the top 100 automotive suppliers to boost engineering productivity, predict system performance, and deliver safer, more sustainable mobility Key Takeaways: Synopsys will support the Fédération Internationale de l’Automobile (FIA), the global governing body for motorsport and the federation for mobility organizations

NXP’s New S32N7 Unlocks the Full Potential of SDVs

Key Takeaways: S32N7 processor series fully digitizes and centralizes core vehicle functions OEMs can cut complexity and unlock AI-powered innovation fleetwide Bosch is the first to deploy the S32N7 in its vehicle integration platform LAS VEGAS, Jan. 05, 2026 (GLOBE NEWSWIRE) — CES — NXP Semiconductors N.V. (NASDAQ: NXPI) today unveiled the S32N7 super-integration processor series, building

Ambarella’s CV3-AD655 Surround View with IMG BXM GPU: A Case Study

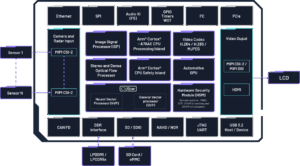

The CV3-AD family block diagram. This blog post was originally published at Imagination Technologies’ website. It is reprinted here with the permission of Imagination Technologies. Ambarella’s CV3-AD655 autonomous driving AI domain controller pairs energy-efficient compute with Imagination’s IMG BXM GPU to enable real-time surround-view visualisation for L2++/L3 vehicles. This case study outlines the industry shift

NVIDIA Advances Open Model Development for Digital and Physical AI

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. NVIDIA releases new AI tools for speech, safety and autonomous driving — including NVIDIA DRIVE Alpamayo-R1, the world’s first open industry-scale reasoning vision language action model for mobility — and a new independent benchmark recognizes the openness and