Development Tools for Embedded Vision

The software tools (compilers, debuggers, operating systems, libraries, etc.) encompass most of the standard arsenal used for developing real-time embedded processor systems, with the addition of specialized vision libraries and, in some cases, vendor-specific development tools. On the hardware side, the requirements depend on the application space, since the designer may need equipment for monitoring and testing real-time video data. Most of these hardware development tools are already used for other types of video system design.

Both general-purpose and vendor-specific tools

Many vendors of vision devices use integrated CPUs that are based on the same instruction set (ARM, x86, etc.), allowing a common set of development tools for software development. However, even though the base instruction set is the same, each CPU vendor integrates a different set of peripherals that have unique software interface requirements. In addition, most vendors accelerate the CPU with specialized computing devices (GPUs, DSPs, FPGAs, etc.). This extended CPU programming model requires a customized version of standard development tools. Most CPU vendors develop their own optimized software tool chain, while also working with third-party software tool suppliers to make sure that the CPU components are broadly supported.

Heterogeneous software development in an integrated development environment

Since vision applications often require a mix of processing architectures, the development tools become more complicated and must handle multiple instruction sets and additional system debugging challenges. Most vendors provide a suite of tools that integrate development tasks into a single interface for the developer, simplifying software development and testing.
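As a rough illustration of why a mix of processing architectures complicates tooling, the sketch below assigns each stage of a toy vision pipeline to a preferred processor and falls back to the CPU when that processor is not present. Everything here is hypothetical: the `Stage` class, the `pick_target` and `run_pipeline` helpers, and the processor labels are illustrative names, not any vendor's API, and the "accelerated" implementations are plain Python stand-ins.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set, Tuple

# Hypothetical processor labels; real platforms expose these through vendor SDKs.
CPU, GPU, DSP = "cpu", "gpu", "dsp"

Pixels = List[int]


@dataclass
class Stage:
    name: str
    preferred: str                                # processor the stage would ideally run on
    impls: Dict[str, Callable[[Pixels], Pixels]]  # implementations keyed by processor


def pick_target(stage: Stage, available: Set[str]) -> str:
    """Use the preferred processor if it is present and implemented, else the CPU."""
    if stage.preferred in available and stage.preferred in stage.impls:
        return stage.preferred
    return CPU


def run_pipeline(stages: List[Stage], available: Set[str],
                 pixels: Pixels) -> Tuple[Pixels, List[Tuple[str, str]]]:
    """Run each stage in order and record which processor handled it."""
    trace = []
    for stage in stages:
        target = pick_target(stage, available)
        pixels = stage.impls[target](pixels)
        trace.append((stage.name, target))
    return pixels, trace


def threshold(pixels: Pixels) -> Pixels:
    # Binarize 8-bit pixel values at the midpoint.
    return [255 if p >= 128 else 0 for p in pixels]


def invert(pixels: Pixels) -> Pixels:
    # Invert 8-bit pixel values.
    return [255 - p for p in pixels]


# The same Python function stands in for every processor's implementation here.
STAGES = [
    Stage("threshold", DSP, {CPU: threshold, DSP: threshold}),
    Stage("invert", GPU, {CPU: invert, GPU: invert}),
]

# This hypothetical device has a CPU and a DSP but no GPU.
result, trace = run_pipeline(STAGES, {CPU, DSP}, [10, 200, 128])
print(result)
print(trace)
```

The recorded trace hints at the debugging challenge: a single frame passes through code built for different processors, so a developer needs visibility into which unit ran each stage.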

Running BitNet on Qualcomm Hexagon with custom 1.58 kernels

This blog post was originally published at ENERZAi’s website. It is reprinted here with the permission of ENERZAi. Today, we are excited to share a milestone that our team has been working toward for some time. ENERZAi has successfully deployed BitNet (b1.58) 2B on the Qualcomm QCS6490 Hexagon NPU via QNN! If that sentence felt

“What We Learned Porting to OpenCV 5 with Claude Code,” a Presentation from Boston.AI

Mark Antonelli, CTO at Boston.AI, presents “What We Learned Porting to OpenCV 5 with Claude Code” at the May 2026 Embedded Vision Summit. OpenCV 5 introduces significant architectural changes to improve vision performance and better utilize modern hardware. In addition to support for new features like vision-language models, there are…

“Accelerating Deep Learning Models on AMD Adaptive SoCs with the AMD Vitis AI Workflow,” a Presentation from AMD

Thomas Zerbs, Technical Marketing Engineer, Adaptive and Embedded Computing Group at AMD, presents “Accelerating Deep Learning Models on AMD Adaptive SoCs with the AMD Vitis AI Workflow” at the May 2026 Embedded Vision Summit. In this presentation, we provide a practical end-to-end overview of the AMD Vitis™ AI workflow for…

“Edge-First Coding Agents: Trustworthy Agentic Development for Real Devices,” a Presentation from Ambarella

Pietro Antonio Cicalese, Senior Technical Marketing Engineer at Ambarella, presents “Edge-First Coding Agents: Trustworthy Agentic Development for Real Devices” at the May 2026 Embedded Vision Summit. Coding agents are usually built as cloud-first abstractions. But for developing trustworthy, production-ready edge systems, we’ve found that coding agents should be designed from…

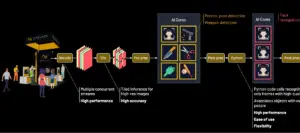

Scaling Edge AI for the Enterprise: Building the Ultimate Multi-Model, Multi-Stream Security System

This blog post was originally published at Axelera AI’s website. It is reprinted here with the permission of Axelera AI. At a Glance The Achievement: Real-time AI person-of-interest (POI) identification and threat detection across multiple 8K streams at 2.5 PetaOPS The Stack: Voyager SDK + Axelera Metis + Intel Xeon The Future: 3x performance leap with

How to Install OpenClaw and Hermes Agent on Qualcomm Arduino Boards, Rubik Pi 3 and Snapdragon PCs

This blog post was originally published at Qualcomm’s website. It is reprinted here with the permission of Qualcomm. A Step-by-Step Guide with Arduino UNO Q, Rubik Pi 3, and PCs with Snapdragon Introduction Welcome, developers and tech enthusiasts! This blog post will guide you through running OpenClaw and Hermes Agent on Qualcomm Technologies’ platforms,

Improving CMOS Performance at Night

How Ubicept Photon Fusion enhances conventional sensors for automotive use This blog post was originally published at Ubicept’s website. It is reprinted here with the permission of Ubicept. We’re back with another demo! Previously, we explored how Ubicept Photon Fusion (UPF) improves high-frame-rate CMOS imaging and how those improvements can translate into better downstream

AMD’s Embedded Computing Summit Comes to London and Eindhoven

On June 16, 2026 in London, and on June 18, 2026 in Eindhoven, the AMD “Embedded Computing Summit Global Technical Tour” comes to Europe. From the event page: Where Embedded Innovation Meets Technical Depth The Embedded Computing Summit (ECS) Global Technical Tour is the premier in-person technical event series from AMD, bringing together engineers, architects,