Development Tools for Embedded Vision

ENCOMPASSING MOST OF THE STANDARD ARSENAL USED FOR DEVELOPING REAL-TIME EMBEDDED PROCESSOR SYSTEMS

The software tools (compilers, debuggers, operating systems, libraries, etc.) encompass most of the standard arsenal used for developing real-time embedded processor systems, supplemented by specialized vision libraries and, in some cases, vendor-specific development tools. On the hardware side, the requirements depend on the application space, since the designer may need equipment for monitoring and testing real-time video data. Most of these hardware development tools are already used for other types of video system design.

Both general-purpose and vendor-specific tools

Many vendors of vision devices use integrated CPUs that are based on the same instruction set (ARM, x86, etc.), allowing a common set of development tools for software development. However, even though the base instruction set is the same, each CPU vendor integrates a different set of peripherals that have unique software interface requirements. In addition, most vendors accelerate the CPU with specialized computing devices (GPUs, DSPs, FPGAs, etc.). This extended CPU programming model requires a customized version of standard development tools. Most CPU vendors develop their own optimized software tool chain, while also working with third-party software tool suppliers to make sure that the CPU components are broadly supported.
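As a rough illustration of how a shared instruction set allows a common tool chain, the sketch below maps a CPU architecture name to a GNU-style cross-compiler prefix. The mapping table and the function names (`normalize_arch`, `tool_for`) are assumptions made for this example and do not come from any vendor SDK; a vendor-optimized tool chain would typically replace or extend these entries.

```python
# Assumed mapping from a normalized CPU architecture to a GNU cross-tool prefix.
# The prefixes follow common GNU target-triplet naming; a vendor SDK may ship
# its own customized tool chain under a different name.
TOOLCHAIN_PREFIXES = {
    "aarch64": "aarch64-linux-gnu-",
    "arm": "arm-linux-gnueabihf-",
    "x86_64": "x86_64-linux-gnu-",
}

# Different operating systems report the same instruction set under different names.
ARCH_ALIASES = {
    "arm64": "aarch64",
    "armv7l": "arm",
    "amd64": "x86_64",
}


def normalize_arch(machine):
    """Reduce an OS-reported machine string to one of the keys above."""
    name = machine.strip().lower()
    return ARCH_ALIASES.get(name, name)


def tool_for(machine, tool="gcc"):
    """Return the prefixed tool name (compiler, debugger, ...) for an architecture."""
    arch = normalize_arch(machine)
    try:
        return TOOLCHAIN_PREFIXES[arch] + tool
    except KeyError:
        raise ValueError(f"no tool chain configured for {machine!r}") from None
```

Two boards from different vendors that both report `aarch64` would resolve to the same compiler and debugger here. The vendor-specific peripherals and accelerators described above are what this simple lookup cannot capture. On a build host, the standard library call `platform.machine()` could supply the `machine` argument.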

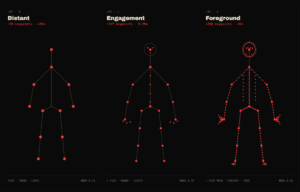

Heterogeneous software development in an integrated development environment

Since vision applications often require a mix of processing architectures, the development tools become more complicated and must handle multiple instruction sets and additional system debugging challenges. Most vendors provide a suite of tools that integrate development tasks into a single interface for the developer, simplifying software development and testing.
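One way to picture the multi-instruction-set problem is a build step that routes each source file to the compiler for its processor. The sketch below is a minimal illustration under stated assumptions: the suffix table and the `plan_build` function are hypothetical, only CPU and GPU targets are shown, and DSP or FPGA targets would need entries for whatever tools the vendor supplies.

```python
from collections import defaultdict
from pathlib import PurePosixPath

# Assumed mapping from source-file suffix to (processor target, compiler).
# gcc and g++ are the GNU C and C++ compilers; nvcc is NVIDIA's CUDA compiler driver.
SUFFIX_TO_TARGET = {
    ".c": ("cpu", "gcc"),
    ".cpp": ("cpu", "g++"),
    ".cu": ("gpu", "nvcc"),
}


def plan_build(sources):
    """Group compile commands by processor target without running them."""
    plan = defaultdict(list)
    for src in sources:
        path = PurePosixPath(src)
        try:
            target, compiler = SUFFIX_TO_TARGET[path.suffix]
        except KeyError:
            raise ValueError(f"no compiler configured for {src!r}") from None
        obj = path.with_suffix(".o")
        # Each command is an argument list: compile only (-c), write the object file (-o).
        plan[target].append([compiler, "-c", str(path), "-o", str(obj)])
    return dict(plan)
```

Calling `plan_build(["src/main.c", "src/filter.cu"])` yields one command list under `"cpu"` and one under `"gpu"`. An integrated development environment performs this kind of routing, plus linking and cross-processor debugging, behind a single interface.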

Upcoming Webinar on Building Your First Real-World AI Tool

On May 21, 2026, at 1:00 pm EDT (10:00 am PDT) Boston.AI will deliver a webinar “What It Takes to Build Your First Real-World AI Tool” From the event page: Most AI talks focus on what’s possible. This one shows how to actually use AI in product development, even if you’re just getting started. In

The Next Frontier in AI Is Not Just Reasoning. It Is Knowing When to Look Again

Why AI Metacognition Requires Hierarchical Random-access Data This blog post was originally published at V-Nova’s website. It is reprinted here with the permission of V-Nova. Executive Summary (TL;DR) Today’s AI systems can reason impressively, but they still struggle to know when to look again. Humans use metacognition as a feedback loop between thought and perception:

Synetic Debuts LYNX Computer Vision SDK at the 2026 Embedded Vision Summit

New SDK helps teams add real-time vision capabilities to robotics, industrial automation, security and edge AI systems with faster deployment, flexible integration and continuous model improvement REDMOND, Wash. — May 11, 2026 — Synetic, Inc., a provider of computer vision and synthetic data solutions for real-world AI systems, today announced the debut of LYNX, a

Chips&Media Completes Development of Next-Gen ‘AV2’ HW Decoder IP

Key Takeaways: Implementing AOMedia’s latest AV2 standard as world-class HW IP, leading the next-gen video ecosystem Targeting the North American Big Tech-led OTT market (YouTube, Netflix) and solidifying the justification for global standard adoption HW RTL release in May with active commercial licensing talks underway with major North American clients May 12, 2026 – Chips&Media

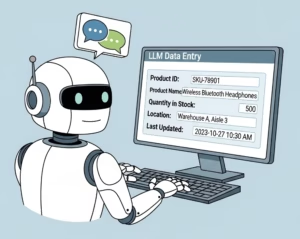

Case Study: How an Enterprise Tech Team Went from Dozens to 2,000+ Fine-Tuning Configurations

This blog post was originally published in expanded form at RapidFire AI’s website. It is reprinted here with the permission of RapidFire AI. The Use Case An AI-forward team at a Fortune 500 enterprise tech company builds intelligent autocomplete for enterprise form data entry: predicting what a user will select next across product dimensions, pricing fields,

Nota AI Wins Grand Prize at NVIDIA Nemotron Hackathon, Proving MoE Quantization Prowess with Synthetic Data Technology

Took 1st place in Track C and Grand Prize among all 20 competing teams with synthetic data generation technology specialized for MoE quantization Built a dataset using an agent based on Nemotron 3 Super120B, presenting a data-centric rather than algorithm-centric optimization approach SEOUL, South Korea, April 24, 2026 /PRNewswire/ — Nota AI, a leading AI model compression and optimization company,

Physical AI: From ST Sensors to a Robotics Platform, How Innovation Can Only Happen Through Collaboration

This blog post was originally published at STMicroelectronics’s website. It is reprinted here with the permission of STMicroelectronics. As technology aims to enable Physical AI, ST is sharing today how collaboration brought our sensors into a Holoscan Sensor Bridge module from Leopard Imaging, enabling developers to feed multi-modal sensing data to the NVIDIA Jetson Thor or

Upcoming Webinar on Sony’s IMX925/935 Sensor Series and High Performance SLVS-EC Interface

On May 12, 2026, at 10:00 am CEST, RESTAR FRAMOS will deliver a webinar “Reaching High-Speed and High-Resolution Architecture with IMX925/935 and SLVS-EC” From the event page: From sensor architecture to real-world integration — join the engineers behind the technology High-speed and high-resolution machine vision systems are pushing the limits of data throughput, latency, and