Development Tools for Embedded Vision

Encompassing most of the standard arsenal used for developing real-time embedded processor systems

The software tools (compilers, debuggers, operating systems, libraries, etc.) encompass most of the standard arsenal used for developing real-time embedded processor systems, with the addition of specialized vision libraries and, possibly, vendor-specific development tools. On the hardware side, the requirements depend on the application space, since the designer may need equipment for monitoring and testing real-time video data. Most of these hardware development tools are already used for other types of video system design.

Both general-purpose and vendor-specific tools

Many vendors of vision devices use integrated CPUs that are based on the same instruction set (ARM, x86, etc.), allowing a common set of development tools for software development. However, even though the base instruction set is the same, each CPU vendor integrates a different set of peripherals that have unique software interface requirements. In addition, most vendors accelerate the CPU with specialized computing devices (GPUs, DSPs, FPGAs, etc.). This extended CPU programming model requires a customized version of standard development tools. Most CPU vendors develop their own optimized software tool chain, while also working with third-party software tool suppliers to make sure that the CPU components are broadly supported.
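As a rough illustration of this split, the sketch below builds a compiler command line in which the cross-compiler is chosen by instruction set alone, while vendor-specific peripheral support is layered on as extra preprocessor defines. The compiler prefixes are common GNU cross-toolchain names; the board names and defines are hypothetical and stand in for whatever a real vendor SDK would require.

```python
from dataclasses import dataclass, field
from typing import List

# Common GNU cross-compiler prefixes, keyed by instruction set.
TOOLCHAIN_PREFIX = {
    "aarch64": "aarch64-linux-gnu-",
    "arm": "arm-linux-gnueabihf-",
    "x86_64": "x86_64-linux-gnu-",
}


@dataclass
class BoardConfig:
    """Describes one target board (all values here are hypothetical)."""
    name: str
    arch: str
    # Vendor-specific switches, e.g. to enable a peripheral driver.
    vendor_defines: List[str] = field(default_factory=list)


def compiler_command(board: BoardConfig, source: str) -> List[str]:
    """Build a compile command: shared compiler per arch, per-vendor defines."""
    prefix = TOOLCHAIN_PREFIX[board.arch]
    defines = [f"-D{name}" for name in board.vendor_defines]
    return [prefix + "gcc", *defines, "-c", source]


# Two hypothetical boards from different vendors, same instruction set.
board_a = BoardConfig("vendor_a_board", "aarch64", ["HAS_VENDOR_A_ISP"])
board_b = BoardConfig("vendor_b_board", "aarch64", ["HAS_VENDOR_B_DSP"])

print(compiler_command(board_a, "main.c"))
print(compiler_command(board_b, "main.c"))
```

Both boards end up with the same compiler binary and differ only in the vendor defines, which mirrors the point above: the base tools are shared, and the customization sits in the vendor-specific layer.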

Heterogeneous software development in an integrated development environment

Since vision applications often require a mix of processing architectures, the development tools become more complicated and must handle multiple instruction sets and additional system debugging challenges. Most vendors provide a suite of tools that integrate development tasks into a single interface for the developer, simplifying software development and testing.
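To make the idea of mixed processing architectures concrete, here is a minimal sketch of how an application might route one vision stage to an accelerator when it is present and fall back to the CPU otherwise. Everything in it is an assumption for illustration: the backend names are hypothetical, and the "DSP" kernel is a stub that simply reuses the CPU code rather than calling any real vendor API.

```python
from typing import Callable, Dict, List, Sequence, Set, Tuple

Image = List[List[int]]  # grayscale image as rows of pixel values


def threshold_cpu(image: Image, level: int) -> Image:
    """Reference CPU implementation: binarize a grayscale image."""
    return [[255 if px >= level else 0 for px in row] for row in image]


def threshold_dsp_stub(image: Image, level: int) -> Image:
    """Stand-in for a vendor DSP kernel (hypothetical); reuses the CPU code."""
    return threshold_cpu(image, level)


# Registry mapping a backend name to its implementation of the stage.
BACKENDS: Dict[str, Callable[[Image, int], Image]] = {
    "cpu": threshold_cpu,
    "dsp": threshold_dsp_stub,
}


def run_threshold(
    image: Image, level: int, preferred: Sequence[str], available: Set[str]
) -> Tuple[str, Image]:
    """Run on the first preferred backend that is available, else on the CPU."""
    for name in preferred:
        if name in available and name in BACKENDS:
            return name, BACKENDS[name](image, level)
    return "cpu", threshold_cpu(image, level)


frame = [[10, 200], [128, 127]]
used, result = run_threshold(frame, 128, ["dsp", "cpu"], available={"cpu"})
print(used, result)
```

Keeping a CPU reference implementation alongside each accelerated kernel is one common way to debug such systems, since the outputs of the two paths can be compared directly.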

Upcoming Webinar on CSI-2 over D-PHY & C-PHY

On February 24, 2026, at 9:00 am PST (12:00 pm EST), MIPI Alliance will deliver the webinar “MIPI CSI-2 over D-PHY & C-PHY: Advancing Imaging Conduit Solutions.” From the event page: MIPI CSI-2®, together with MIPI D-PHY™ and C-PHY™ physical layers, form the foundation of image sensor solutions across a wide range of markets, including

What’s New in MIPI Security: MIPI CCISE and Security for Debug

This blog post was originally published at MIPI Alliance’s website. It is reprinted here with the permission of MIPI Alliance. As the need for security becomes increasingly critical, MIPI Alliance has continued to broaden its portfolio of standardized solutions, adding two more specifications in late 2025, and continuing work on significant updates to the MIPI Camera

Production-Ready, Full-Stack Edge AI Solutions Turn Microchip’s MCUs and MPUs Into Catalysts for Intelligent Real-Time Decision-Making

Chandler, Ariz., February 10, 2026 — A major next step for artificial intelligence (AI) and machine learning (ML) innovation is moving ML models from the cloud to the edge for real-time inferencing and decision-making applications in today’s industrial, automotive, data center and consumer Internet of Things (IoT) networks. Microchip Technology (Nasdaq: MCHP) has extended its edge AI offering

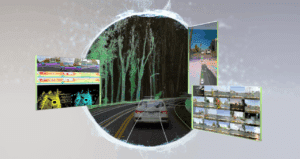

Accelerating next-generation automotive designs with the TDA5 Virtualizer™ Development Kit

This blog post was originally published at Texas Instruments’ website. It is reprinted here with the permission of Texas Instruments. Continuous innovation in high-performance, power-efficient systems-on-a-chip (SoCs) is enabling safer, smarter and more autonomous driving experiences in even more vehicles. As another big step forward, Texas Instruments and Synopsys developed a Virtualizer Development Kit™ (VDK) for the

Into the Omniverse: OpenUSD and NVIDIA Halos Accelerate Safety for Robotaxis, Physical AI Systems

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Editor’s note: This post is part of Into the Omniverse, a series focused on how developers, 3D practitioners and enterprises can transform their workflows using the latest advancements in OpenUSD and NVIDIA Omniverse. New NVIDIA safety

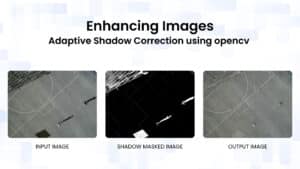

Enhancing Images: Adaptive Shadow Correction Using OpenCV

This blog post was originally published at OpenCV’s website. It is reprinted here with the permission of OpenCV. Imagine capturing the perfect landscape photo on a sunny day, only to find harsh shadows obscuring key details and distorting colors. Similarly, in computer vision projects, shadows can interfere with object detection algorithms, leading to inaccurate results.

Production Software Meets Production Hardware: Jetson Provisioning Now Available with Avocado OS

This blog post was originally published at Peridio’s website. It is reprinted here with the permission of Peridio. The gap between robotics prototypes and production deployments has always been an infrastructure problem disguised as a hardware problem. Teams build incredible computer vision models and robotic control systems on NVIDIA Jetson developer kits, only to hit

Robotics Builders Forum offers Hardware, Know-How and Networking to Developers

On February 25, 2026, from 8:30 am to 5:30 pm ET, Advantech, Qualcomm, and Arrow, in partnership with D3 Embedded, Edge Impulse, and the Pittsburgh Robotics Network, will present Robotics Builders Forum, an in-person conference for engineers and product teams. Qualcomm and D3 Embedded are members of the Edge AI and Vision Alliance, while Edge Impulse