Software for Embedded Vision

JetPack 7.2: The Production Moment for Physical AI

NVIDIA just shipped the most important Jetson release in years. Here’s what it means — and where Avocado OS fits in. This blog was originally published at Peridio’s website. It is reprinted here with the permission of Peridio. A couple weeks ago, the Peridio team was together at our Nashville HQ for our exec

“Accelerating Deep Learning Models on AMD Adaptive SoCs with the AMD Vitis AI Workflow,” a Presentation from AMD

Thomas Zerbs, Technical Marketing Engineer, Adaptive and Embedded Computing Group at AMD, presents “Accelerating Deep Learning Models on AMD Adaptive SoCs with the AMD Vitis AI Workflow” at the May 2026 Embedded Vision Summit. In this presentation, we provide a practical end-to-end overview of the AMD Vitis™ AI workflow for…

“Accelerating Physical AI with ROCm: High-Performance ML on AMD Embedded iGPUs,” a Presentation from AMD

Alok Gupta, Senior Technical Marketing Manager at AMD, presents “Accelerating Physical AI with ROCm: High-Performance ML on AMD Embedded iGPUs” at the May 2026 Embedded Vision Summit. Discover how AMD’s ROCm software stack unlocks data center-class ML performance on embedded integrated GPUs, enabling diverse AI workloads from CNNs and transformers…

“Edge-First Coding Agents: Trustworthy Agentic Development for Real Devices,” a Presentation from Ambarella

Pietro Antonio Cicalese, Senior Technical Marketing Engineer at Ambarella, presents “Edge-First Coding Agents: Trustworthy Agentic Development for Real Devices” at the May 2026 Embedded Vision Summit. Coding agents are usually built as cloud-first abstractions. But for developing trustworthy, production-ready edge systems, we’ve found that coding agents should be designed from…

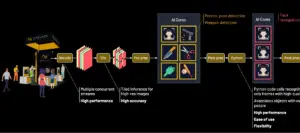

Scaling Edge AI for the Enterprise: Building the Ultimate Multi-Model, Multi-Stream Security System

This blog post was originally published at Axelera AI’s website. It is reprinted here with the permission of Axelera AI. At a Glance The Achievement: Real-time AI person-of-interest (POI) identification and threat detection across multiple 8K streams at 2.5 PetaOPS The Stack: Voyager SDK + Axelera Metis + Intel Xeon The Future: 3x performance leap with

AMD’s Embedded Computing Summit Comes to London and Eindhoven

On June 16, 2026 in London, and on June 18, 2026 in Eindhoven, the AMD “Embedded Computing Summit Global Technical Tour” comes to Europe. From the event page: Where Embedded Innovation Meets Technical Depth The Embedded Computing Summit (ECS) Global Technical Tour is the premier in-person technical event series from AMD, bringing together engineers, architects,

Upcoming Webinar on Building Your First Real-World AI Tool

On May 21, 2026, at 1:00 pm EDT (10:00 am PDT), Boston.AI will deliver a webinar, “What It Takes to Build Your First Real-World AI Tool.” From the event page: Most AI talks focus on what’s possible. This one shows how to actually use AI in product development, even if you’re just getting started. In

AMD Silo AI and University of Bologna Start Spatial AI Collaboration for Robotics and Autonomous Driving

The research collaboration will focus on building geometry-aware perception modules on the AMD ROCm™ open software stack. AMD Silo AI and University of Bologna through its Department of Computer Science and Engineering (DISI) are starting a research collaboration to bring explicit 3D geometry into the Vision Language Action (VLA) and world-model pipeline for robotics and

The Next Frontier in AI Is Not Just Reasoning. It Is Knowing When to Look Again

Why AI Metacognition Requires Hierarchical Random-access Data This blog post was originally published at V-Nova’s website. It is reprinted here with the permission of V-Nova. Executive Summary (TL;DR) Today’s AI systems can reason impressively, but they still struggle to know when to look again. Humans use metacognition as a feedback loop between thought and perception:

Upcoming Event on AI Transformation

KOTRA Silicon Valley will host “Physical AI Superconnect 2026” on June 24, 2026, from 10:00 am to 8:00 pm PDT at the Computer History Museum in Mountain View, California. The event will feature insightful presentations, technology exhibits, matchmaking and networking. From the event page: M.AX: A New Era of Manufacturing AI Transformation Physical AI Superconnect

Renesas Completes Acquisition of Irida Labs to Expand Vision AI Software Capabilities and Accelerates System-Level Vision Solutions

TOKYO, Japan ― Renesas Electronics Corporation (TSE:6723, “Renesas”), a premier supplier of advanced semiconductor solutions, today announced that a subsidiary of Renesas has completed the acquisition of Irida Labs, a Greece-based company specializing in embedded software for AI-powered visual perception systems. The acquisition strengthens Renesas’ edge AI embedded processing offerings, a key secular growth area for Renesas. It also enables system-level solutions that integrate physical AI and software to power camera and machine

Physical AI: 8 Questions Every Engineering Leader Is Asking

This blog post was originally published at Geisel Software’s website. It is reprinted here with the permission of Geisel Software. Jensen Huang called it at CES 2025: “The next frontier of AI is physical.” Since then, the phrase has been everywhere — in investor decks, conference keynotes, and vendor pitches. But for the software engineering managers, directors, VPs, and

Airy3D Announces Support for MediaTek Genio SoCs for Edge 3D Vision Applications

Montreal, Canada – May 11, 2026 – Airy3D today announced that its DepthIQ™ SDK is supported on the MediaTek Genio Series of System-on-Chips, enabling compact and cost-efficient passive 3D vision solutions for embedded AI vision applications across robotics, industrial, retail, and smart devices. Airy3D’s DepthIQ technology enables simultaneous capture of high-quality 2D images and depth

Face Super Resolution for Better Video Experiences

This blog post was originally published at Visidon’s website. It is reprinted here with the permission of Visidon. Video has become the primary medium for communication — from hybrid meetings to live events and social media. At the same time, expectations have risen. Faces need to look sharp, expressive, and natural — even when captured from

Case Study: How an Enterprise Tech Team Went from Dozens to 2,000+ Fine-Tuning Configurations

This blog post was originally published in expanded form at RapidFire AI’s website. It is reprinted here with the permission of RapidFire AI. The Use Case An AI-forward team at a Fortune 500 enterprise tech company builds intelligent autocomplete for enterprise form data entry: predicting what a user will select next across product dimensions, pricing fields,

Physical AI: From ST Sensors to a Robotics Platform, How Innovation Can Only Happen Through Collaboration

This blog post was originally published at STMicroelectronics’s website. It is reprinted here with the permission of STMicroelectronics. As technology aims to enable Physical AI, ST is sharing today how collaboration brought our sensors into a Holoscan Sensor Bridge module from Leopard Imaging, enabling developers to feed multi-modal sensing data to the NVIDIA Jetson Thor or

How We Built a 100% Effective Multi-Layer Safety Filter for Enterprise AI Agents

How Rapidflare’s multi-layer safety filter achieved 100% protection against harmful content while maintaining zero false positives on legitimate queries. This blog post was originally published at Rapidflare’s website. It is reprinted here with the permission of Rapidflare. When you deploy an AI agent to a public developer community, the threat model changes completely. In a

Beyond the Bench: Reinventing Embedded Hardware with Grinn

This video was originally published at Peridio’s website. It is reprinted here with the permission of Peridio. In this episode of Beyond the Bench from Peridio, Bill Brock sits down with Robert Otręba, Founder & CEO of Grinn, a Poland-based embedded engineering company operating for nearly 18 years. Robert shares how Grinn grew from a two-person