Software for Embedded Vision

MPEG-5 LCEVC: A practical shift for industrial AI video pipelines

This blog post was originally published at V-Nova’s website. It is reprinted here with the permission of V-Nova. In industrial and defense environments, I hear the same story. More cameras. Higher resolutions. Stricter latency targets. Infrastructure that cannot be replaced easily. And increasing pressure around storage, bandwidth, compute, and privacy. This is why MPEG-5 LCEVC is becoming even more relevant. It improves compression

Key Trends Shaping the Semiconductor Industry in 2026

This blog post was originally published at HTEC’s website. It is reprinted here with the permission of HTEC. The hardware boom is slowing down. What comes next is a software, power, and inference problem—and most of the industry isn’t ready for any of it. AI chips are now 0.2% of all chips manufactured, but

Texas Instruments, D3 Embedded, Lattice and NVIDIA Show a Practical Radar-Camera Fusion Stack for Robotics

TI’s new application brief and companion demo outline how mmWave radar, camera input, FPGA-based sensor bridging and NVIDIA Holoscan can be combined into a low-latency perception pipeline for humanoids and other autonomous machines. Texas Instruments, D3 Embedded, Lattice Semiconductor and NVIDIA are outlining a concrete radar-camera fusion stack for robotics rather than just talking

Building Robotics Applications with Ryzen AI and ROS 2

This blog post was originally published at AMD’s website. It is reprinted here with the permission of AMD. This blog showcases how to deploy power-efficient Ryzen AI perception models with ROS 2 – the Robot Operating System. We utilize the Ryzen AI Max+ 395 (Strix-Halo) platform, which is equipped with an efficient Ryzen AI NPU and

BrainChip Unveils Radar Reference Platform to Bridge the ‘Identification Gap’ in Edge AI

LAGUNA HILLS, Calif. — April 6, 2026 — BrainChip Holdings Ltd (ASX: BRN, OTCQX: BRCHF, BCHPY), the world’s first commercial producer of ultra-low-power, neuromorphic AI technology, today announced the launch of its Radar Reference Platform. This fully validated hardware and AI stack is designed to provide real-time object classification at the edge, solving the critical “identification gap” that limits traditional radar

Gemma 4 Models Optimized for Intel Hardware: Enabling Instant Deployment from Day Zero

We’re excited to announce Intel’s strategic partnership with Google to deliver optimized Gemma 4 models on Intel hardware from day one. This collaboration enables developers to leverage the power of Google’s latest AI models on Intel hardware: Intel® Core™ Ultra processors, Intel® Xeon® CPUs, and Intel® Arc™ GPUs. Developers can create AI applications that run

Upcoming Webinar on NVIDIA IGX Thor

On April 15, 2026, at 9:00 am PDT (12:00 pm EDT), NVIDIA will deliver a webinar, “Unlock Real-Time Physical AI for the Industrial Edge.” From the event page: Join us to learn how IGX Thor’s Blackwell-powered architecture is powering autonomous robots, surgical systems, and high-performance industrial automation at the edge. NVIDIA experts will walk through

2026: The Year Intelligence Gets Physical

This article was originally published at Analog Devices’ website. It is reprinted here with the permission of Analog Devices. Artificial intelligence is entering a new phase where models interpret contextual data whilst interacting with the physical world in real time. At Analog Devices, Inc. (ADI), we call this Physical Intelligence: intelligent systems that can perceive, reason

Why Night HDR Is More Challenging Than Daytime HDR

This blog post was originally published at Visidon’s website. It is reprinted here with the permission of Visidon. High Dynamic Range (HDR) imaging has become a standard feature in modern cameras, from smartphones to automotive and surveillance systems. While daytime HDR is already a complex task, nighttime HDR introduces a completely different level of difficulty. The same

AI-Assisted Coding: The Next Step in Abstraction

This blog post was originally published at Geisel Software’s website. It is reprinted here with the permission of Geisel Software. I’ve been using AI-assisted coding for my work a lot recently, and I’ll admit, I wasn’t sure how I felt about it. Was I cheating? How do I know it’s right? Do I admit to using

NVIDIA, T-Mobile and Partners Integrate Physical AI Applications on AI-RAN-Ready Infrastructure

News Summary: T-Mobile pilots NVIDIA RTX PRO 6000 Blackwell Server Edition AI infrastructure to demonstrate physical AI applications at the edge, complementing the AI-RAN Innovation Center’s distributed network. Physical AI developers including Fogsphere, LinkerVision, Levatas, Vaidio and Siemens Energy are building reasoning and vision AI agents for the edge using the NVIDIA Metropolis Blueprint for

NVIDIA and Global Robotics Leaders Take Physical AI to the Real World

News Summary: Physical AI leaders across robot brain developers, industrial and surgical robot giants, and humanoid pioneers, including ABB Robotics, AGIBOT, Agility, CMR Surgical, FANUC, Figure, Hexagon Robotics, KUKA, Medtronic, Skild AI, Universal Robots, World Labs and YASKAWA, are building on NVIDIA technology to develop and deploy physical AI at scale. NVIDIA unveils new NVIDIA

Edge Container Registries Explained: How to Distribute Images Reliably at Scale

This blog post was originally published at Avassa’s website. It is reprinted here with the permission of Avassa. A mostly hidden piece of the container application puzzle is the image registry. If you’ve used Linux containers, you’ve come across one: it’s where container images are stored. Registries can be public (like Docker Hub) or private,

RTOS vs. Bare-Metal: Decision Matrix Tool for Projects Based on High-End Microcontrollers

This blog post was originally published at eInfochips’ website. It is reprinted here with the permission of eInfochips. When building a system with a powerful microcontroller (MCU) or microprocessor, such as an ARM Cortex-M4, M7, R5, A15, or A53 or a Renesas RXv3, one of the key decisions developers face is whether to use bare-metal programming or a

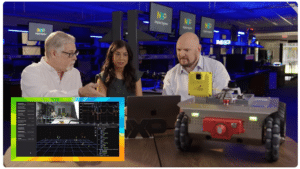

Conversations at the Edge with NXP

This blog post was originally published at Au-Zone’s website. It is reprinted here with the permission of Au-Zone. Are Single-Sensor Robots Obsolete? We think so, and we’re here to show you why. Au-Zone is proud to be featured in NXP Semiconductors’ Conversations at the Edge video series, a multi-part collaboration exploring innovation at the intersection of

Accelerating Product Development in the Era of Physical AI

This video was originally published at Peridio’s website. It is reprinted here with the permission of Peridio. The embedded world is undergoing its biggest transformation in a generation. AI workloads are now moving into the physical world — into cameras, robots, tractors, and drones — and edge devices are evolving into intelligent agents. Yet the

Why On-device AI Matters

This blog post was originally published at ENERZAi’s website. It is reprinted here with the permission of ENERZAi. Hello! I’m Minwoo Son from ENERZAi’s Business Development team. Through several posts so far, we’ve shared ENERZAi’s full-stack software capabilities for delivering high-performance on-device AI — including Optimium, our proprietary AI compiler that encapsulates our optimization expertise;

Upcoming Webinar on LLM-driven Driver Development

On March 19, 2026, at 1:00 pm EDT (10:00 am PDT), Boston.AI will deliver a webinar, “Intelligent Driver Development with LLM Context Engineering.” From the event page: Developing even simple sensor drivers can consume valuable engineering time, requiring manual transcription of registers from datasheets into code, an error-prone and repetitive process. In this webinar, you’ll