Videos on Edge AI and Visual Intelligence

We hope that the compelling AI and visual intelligence case studies that follow will both entertain and inspire you, and that you’ll regularly revisit this page as new material is added. For more, monitor the News page, where you’ll frequently find video content embedded within the daily writeups.

Alliance Website Videos

VeriSilicon Demonstration of the Open Se Cura Project

Chris Wang, VP of Multimedia Technologies and a member of the CTO office at VeriSilicon, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Wang demonstrates examples from the Open Se Cura Project, a joint effort between VeriSilicon and Google. The project showcases a scalable, power-efficient, and

VeriSilicon Demonstration of Advanced AR/VR Glasses Solutions

Dr. Mahadev Kolluru, Senior VP of North America and India Business at VeriSilicon, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Dr. Kolluru demonstrates products developed using the company’s IP licensing and turnkey silicon design services. Dr. Kolluru highlights how VeriSilicon supports cutting-edge AI and

Synopsys and Visionary.ai Demonstration of a Low-light Real-time AI Video Denoiser Tailored for NPX6 NPU IP

Gordon Cooper, Principal Product Manager at Synopsys, and David Jarmon, Senior VP of Worldwide Sales at Visionary.ai, demonstrate the companies’ latest edge AI and vision technologies and products in Synopsys’ booth at the 2025 Embedded Vision Summit. Specifically, Cooper and Jarmon demonstrate the future of low-light imaging with Visionary.ai’s cutting-edge real-time AI video denoiser. This

Synopsys Demonstration of Smart Architectural Exploration for AI SoCs

Guy Ben Haim, Senior Product Manager, and Gururaj Rao, Field Applications Engineer, both of Synopsys, demonstrate the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Ben Haim and Rao demonstrate how to optimize neural network performance with the Synopsys ARC MetaWare MX Development Toolkit. Ben Haim and

SqueezeBits Demonstration of On-device LLM Inference, Running a 2.4B Parameter Model on the iPhone 14 Pro

Taesu Kim, CTO of SqueezeBits, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Kim demonstrates a 2.4-billion-parameter large language model (LLM) running entirely on an iPhone 14 Pro without server connectivity. The device operates in airplane mode, highlighting on-device inference using a hybrid approach that

Sony Semiconductor Demonstration of AI Vision Devices and Tools for Industrial Use Cases

Zachary Li, Product and Business Development Manager at Sony America, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Li demonstrates his company’s AITRIOS products and ecosystem. Powered by the IMX500 intelligent vision sensor, Sony AITRIOS collaborates with Raspberry Pi for development kits and with leading

Sony Semiconductor Demonstration of Its Open-source Edge AI Stack with the IMX500 Intelligent Sensor

JF Joly, Product Manager for the AITRIOS platform at Sony Semiconductor, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Joly demonstrates Sony’s fully open-source software stack that enables the creation of AI-powered cameras using the IMX500 intelligent vision sensor. In this demo, Joly illustrates how

Sony Semiconductor Demonstration of On-sensor YOLO Inference with the Sony IMX500 and Raspberry Pi

Amir Servi, Edge AI Product Manager at Sony Semiconductor, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Servi demonstrates the IMX500, the first vision sensor with integrated edge AI processing capabilities. Using the Raspberry Pi AI Camera and Ultralytics YOLOv11n models, Servi showcases real-time

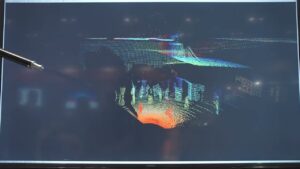

Namuga Vision Connectivity Demonstration of Compact Solid-state LiDAR for Automotive and Robotics Applications

Min Lee, Business Development Team Leader at Namuga Vision Connectivity, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Lee demonstrates a compact solid-state LiDAR solution tailored for the automotive and robotics industries. This solid-state LiDAR features high precision, fast response time, and no moving parts, ideal for

Namuga Vision Connectivity Demonstration of an AI-powered Total Camera System for an Automotive Bus Solution

Min Lee, Business Development Team Leader at Namuga Vision Connectivity, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Lee demonstrates his company’s AI-powered total camera system. The system is designed for integration into public transportation, especially buses, enhancing safety and automation. It includes front-view, side-view,