Videos on Edge AI and Visual Intelligence

We hope that the compelling AI and visual intelligence case studies that follow will both entertain and inspire you, and that you’ll regularly revisit this page as new material is added. For more, monitor the News page, where you’ll frequently find video content embedded within the daily writeups.

Alliance Website Videos

Falcon Takes Flight: Connect Tech’s Vehicle AI Platform Was Built for the Worst Conditions on Earth

This blog post was originally published at Macnica America’s website. It is reprinted here with the permission of Macnica America. Dust. Vibration. Mud. Sub-zero mornings and scorching afternoons. These are the conditions that kill most compute platforms before they ever reach production, and yet it’s exactly these conditions, on farms, construction sites, and open-pit mines, where

Roadmap to Autonomous Manufacturing: An AI-Driven Approach Based on Engineering Foundations

This article was originally published at HCLTech’s website. It is reprinted here with the permission of HCLTech. Autonomous manufacturing can be achieved through a structured journey built on foundational engineering, converged data and human-led AI. Key takeaways: Autonomous manufacturing is the natural evolution of automation, engineering and AI. It is already here in parts,

Beyond the Bench: Reinventing Embedded Hardware with Grinn

This video was originally published at Peridio’s website. It is reprinted here with the permission of Peridio. In this episode of Beyond the Bench from Peridio, Bill Brock sits down with Robert Otręba, Founder & CEO of Grinn, a Poland-based embedded engineering company operating for nearly 18 years. Robert shares how Grinn grew from a two-person

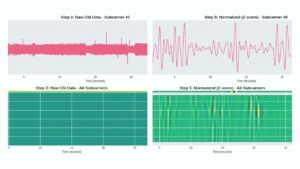

“Non-Contact Vital Sign Monitoring Using Low-Cost WiFi Devices,” a Presentation from the University of California, Santa Cruz

Katia Obraczka, Professor of Computer Science and Engineering, University of California, Santa Cruz, Pranay Kocheta, Lexington High School, and Nayan Bhatia, University of California, Santa Cruz present the “Non-Contact Vital Sign Monitoring Using Low-Cost WiFi Devices” tutorial at the December 2025 Edge AI and Vision Innovation Forum.

See How ams OSRAM Revolutionizes Optical Solutions with the Help of Cadence Tools

OSRAM utilizes the Quantus SNA workflow for high-precision silicon. In modern semiconductor design, Heterogeneous Integration is the new frontier. When light detectors for Medical Imaging or depth sensors for Autonomous Systems are packed onto a single die alongside radio systems, the risk of interference skyrockets. For ams OSRAM, “sensing the power of light” means more

Lightweight Keyword Spotting Solution from Microchip

Microchip presents a customizable, target-agnostic solution to program wake words and voice commands. The ML model, generated and tested using a custom application, has low latency and a minimal memory footprint, making it ideal for resource-constrained embedded systems. The ML model can be integrated into voice-based applications running on any 32-bit microcontroller or microprocessor running

Restar Framos Demo: Sony IMX927 105 MP Global Shutter Image Sensor

Prashant Mehta from Restar Framos presents the Sony IMX927, a high-performance 105-megapixel global shutter image sensor. The demonstration highlights the sensor’s extreme resolution and speed, showing its ability to capture live, detailed images of a watch’s internal mechanics. By displaying a 1:1 pixel representation on a monitor, the demo illustrates how the sensor provides

Conversations at the Edge with NXP

This blog post was originally published at Au-Zone’s website. It is reprinted here with the permission of Au-Zone. Are Single-Sensor Robots Obsolete? We think so, and we’re here to show you why. Au-Zone is proud to be featured in NXP Semiconductors’ Conversations at the Edge video series, a multi-part collaboration exploring innovation at the intersection of

Accelerating Product Development in the Era of Physical AI

This video was originally published at Peridio’s website. It is reprinted here with the permission of Peridio. The embedded world is undergoing its biggest transformation in a generation. AI workloads are now moving into the physical world — into cameras, robots, tractors, and drones — and edge devices are evolving into intelligent agents. Yet the