Development Tools for Embedded Vision

ENCOMPASSING MOST OF THE STANDARD ARSENAL USED FOR DEVELOPING REAL-TIME EMBEDDED PROCESSOR SYSTEMS

The software tools (compilers, debuggers, operating systems, libraries, etc.) encompass most of the standard arsenal used for developing real-time embedded processor systems, with the addition of specialized vision libraries and, in some cases, vendor-specific development tools. On the hardware side, the requirements depend on the application space, since the designer may need equipment for monitoring and testing real-time video data. Most of these hardware development tools are already used for other types of video system design.

Both general-purpose and vendor-specific tools

Many vendors of vision devices use integrated CPUs that are based on the same instruction set (ARM, x86, etc.), allowing a common set of development tools for software development. However, even though the base instruction set is the same, each CPU vendor integrates a different set of peripherals that have unique software interface requirements. In addition, most vendors accelerate the CPU with specialized computing devices (GPUs, DSPs, FPGAs, etc.). This extended CPU programming model requires a customized version of standard development tools. Most CPU vendors develop their own optimized software tool chain, while also working with third-party software tool suppliers to make sure that the CPU components are broadly supported.

Heterogeneous software development in an integrated development environment

Since vision applications often require a mix of processing architectures, the development tools become more complicated and must handle multiple instruction sets and additional system debugging challenges. Most vendors provide a suite of tools that integrate development tasks into a single interface for the developer, simplifying software development and testing.
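As a rough illustration of why a mix of processing architectures complicates development, the following minimal Python sketch assigns each stage of a vision pipeline to a preferred processor and falls back to the CPU when that processor is not present. Everything in it is hypothetical: the backend names (`cpu`, `dsp`, `gpu`), the `Stage` class, and the helper functions are assumptions made for illustration and do not correspond to any vendor's tool chain or API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set, Tuple

# Hypothetical set of processors on the target; real tool chains
# expose their own device identifiers.
AVAILABLE_BACKENDS: Set[str] = {"cpu", "dsp"}

Pixels = List[int]


@dataclass
class Stage:
    name: str
    preferred: str                                # backend the stage would ideally use
    impls: Dict[str, Callable[[Pixels], Pixels]]  # backend name -> implementation


def pick_backend(stage: Stage, available: Set[str]) -> str:
    """Use the preferred backend if it exists on the target, else the CPU."""
    if stage.preferred in available and stage.preferred in stage.impls:
        return stage.preferred
    return "cpu"


def run_pipeline(stages: List[Stage], frame: Pixels,
                 available: Set[str] = AVAILABLE_BACKENDS
                 ) -> Tuple[Pixels, List[Tuple[str, str]]]:
    """Run each stage in order and record which backend handled it."""
    trace = []
    for stage in stages:
        backend = pick_backend(stage, available)
        frame = stage.impls[backend](frame)
        trace.append((stage.name, backend))
    return frame, trace


def threshold(pixels: Pixels) -> Pixels:
    # Binarize 8-bit pixel values around the midpoint.
    return [255 if p > 127 else 0 for p in pixels]


def invert(pixels: Pixels) -> Pixels:
    # Invert 8-bit pixel values.
    return [255 - p for p in pixels]


# In this sketch every backend reuses the same Python function; on real
# hardware each entry would be a separately compiled kernel.
PIPELINE = [
    Stage("threshold", "dsp", {"cpu": threshold, "dsp": threshold}),
    Stage("invert", "gpu", {"cpu": invert, "gpu": invert}),
]
```

Calling `run_pipeline(PIPELINE, [0, 128, 200, 127])` with the default backends runs the threshold stage on the hypothetical `dsp` and, because no `gpu` is listed as available, runs the invert stage on the `cpu`. The recorded trace is the kind of per-stage placement information that an integrated development environment would need to surface when debugging across instruction sets.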

OpenMV’s Latest: Firmware v4.8.1, Multi-sensor Vision, Faster Debug, and What’s Next

OpenMV kicked off 2026 with a substantial software update and a clearer look at where the platform is headed next. The headline is OpenMV Firmware v4.8.1 paired with OpenMV IDE v4.8.1, which adds multi-sensor capabilities, expands event-camera support, and lays the groundwork for a major debugging and connectivity upgrade coming with firmware v5. If you’re

On-Device LLMs in 2026: What Changed, What Matters, What’s Next

Editor’s note: Vikas Chandra is one of the keynote speakers for the 2026 Embedded Vision Summit. Check out his upcoming keynote “Scaling Down is the New Scaling Up” here. The Embedded Vision Summit runs May 11-13, 2026 in Santa Clara, California. In On-Device LLMs: State of the Union, 2026, Vikas Chandra and Raghuraman Krishnamoorthi explain

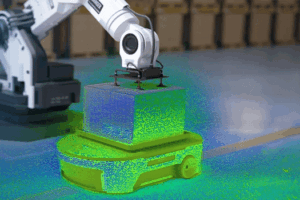

Faster Sensor Simulation for Robotics Training with Machine Learning Surrogates

This article was originally published at Analog Devices’ website. It is reprinted here with the permission of Analog Devices. Training robots in the physical world is slow, expensive, and difficult to scale. Roboticists developing AI policies depend on high quality data—especially for complex tasks like picking up flexible objects or navigating cluttered environments. These tasks rely

How Edge Computing In Retail Is Transforming the Shopping Experience

Forward-looking retailers are increasingly relying on an in-store combination of data collection through IoT devices with various types of sensors, AI for decisions and transactions on live data, and digital signage to communicate results and allow for interaction with customers and store associates. The applications built on this data- and AI-centric foundation range from more

Free Webinar Highlights Compelling Advantages of FPGAs

On March 17, 2026 at 9 am PT (noon ET), Efinix’s Mark Oliver, VP of Marketing and Business Development, will present the free one-hour webinar “Why your Next AI Accelerator Should Be an FPGA,” organized by the Edge AI and Vision Alliance. Here’s the description, from the event registration page: Edge AI system developers often

HCLTech Recognized as the ‘Innovation Award’ Winner of the 2025 Ericsson Supplier Awards

LONDON and NOIDA, India, Jan 19 2026 — HCLTech, a leading global technology company, today announced that it has been recognized by Ericsson as the ‘Innovation Award’ winner in the 2025 Ericsson Supplier Awards. The award has been given in recognition of HCLTech’s contribution to enhancing Ericsson’s operational efficiency through AI-driven capabilities and automation. HCLTech was selected

Microchip Expands PolarFire FPGA Smart Embedded Video Ecosystem with New SDI IP Cores and Quad CoaXPress Bridge Kit

Solution stacks deliver broadcast-quality video, SLVS-EC to CoaXPress bridging and ultra-low power operation for next-generation medical, industrial and robotic vision applications. CHANDLER, Ariz., January 19, 2025 — Microchip Technology (Nasdaq: MCHP) has expanded its PolarFire® FPGA smart embedded video ecosystem to support developers who need reliable, low-power, high-bandwidth video connectivity. The embedded vision solution stacks combine hardware evaluation kits, development

Top Python Libraries of 2025

This article was originally published at Tryolabs’ website. It is reprinted here with the permission of Tryolabs. Welcome to the 11th edition of our yearly roundup of the Python libraries! If 2025 felt like the year of Large Language Models (LLMs) and agents, it’s because it truly was. The ecosystem expanded at incredible speed, with new models,