Videos on Edge AI and Visual Intelligence

We hope that the compelling AI and visual intelligence case studies that follow will both entertain and inspire you, and that you’ll regularly revisit this page as new material is added. For more, monitor the News page, where you’ll frequently find video content embedded within the daily writeups.

Alliance Website Videos

STMicroelectronics Demonstration of Real-time Multi-pose Detection

Therese Mbock, Product Marketing Engineer at STMicroelectronics, and Sylvain Bernard, Founder and Solutions Architect at Siana Systems, demonstrate the companies’ latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Mbock and Bernard demonstrate using STMicroelectronics’ VD55G1 and STM32N6 to detect human poses in real time, ideal for fitness, gesture, and gaming applications.

Nota AI Demonstration of Nota Vision Agent, Next-generation Video Monitoring at the Edge

Tae-Ho Kim, CTO and Co-founder of Nota AI, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Kim demonstrates Nota Vision Agent—a next-generation video monitoring solution powered by Vision Language Models (VLMs). The solution delivers real-time analytics and intelligent alerts across critical domains including industrial safety,

Nextchip Demonstration of a High Definition Analog Video Solution Using STELLA5

Jonathan Lee, Manager of the Global Strategy Team at Nextchip, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Lee demonstrates a high definition analog video solution using his company’s STELLA5 module. Lee showcases how Nextchip’s proprietary AHD (Analog HD) technology offers a more cost-efficient and

Nextchip Demonstration of Various Computing Applications Using the Company’s ADAS SoC

Jonathan Lee, Manager of the Global Strategy Team at Nextchip, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Lee demonstrates various computing applications using his company’s ADAS SoC. Lee showcases how Nextchip’s ADAS SoC can be used for applications such as: Sensor fusion of iToF

Microchip Technology Demonstration of Real-time Object and Facial Recognition with Edge AI Platforms

Swapna Guramani, Applications Engineer for Microchip Technology, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Guramani demonstrates her company’s latest AI/ML capabilities in action: real-time object recognition using the SAMA7G54 32-bit MPU running Edge Impulse’s FOMO model, and facial recognition powered by TensorFlow Lite’s Mobile

Microchip Technology Demonstration of AI-powered Face ID on the PolarFire SoC FPGA Using the VectorBlox SDK

Avery Williams, Channel Marketing Manager for Microchip Technology, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Williams demonstrates ultra-efficient AI-powered facial recognition on Microchip’s PolarFire SoC FPGA using the VectorBlox Accelerator SDK. Pre-trained neural networks are quantized to INT8 and compiled to run directly on

Chips&Media Demonstration of Its WAVE-N NPU In High-quality, High-resolution Imaging Applications

Andy Lee, Vice President of U.S. Marketing at Chips&Media, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Lee demonstrates example high-quality, high-resolution imaging applications in edge devices implemented using WAVE-N, his company’s custom NPU. Key notes: Extreme efficiency, up to 90% of MAC utilization, for

Chips&Media Introduction to Its WAVE-N Specialized Video Processing NPU IP

Andy Lee, Vice President of U.S. Marketing at Chips&Media, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Lee introduces WAVE-N, his company’s specialized video processing NPU IP. Key notes: Extreme efficiency, up to 90% of MAC utilization, for modern CNN computation Highly optimized for real-time

3LC Demonstration of Catching Synthetic Slip-ups with 3LC

Paul Endresen, CEO of 3LC, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Endresen demonstrates the investigation of a curious embryo classification study from Norway, where synthetic data was supposed to help train a model – but something didn’t quite hatch right. Using 3LC to

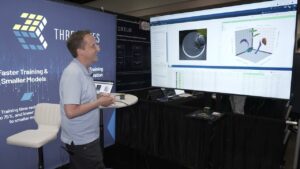

3LC Demonstration of Debugging YOLO with 3LC’s Training-time Truth Detector

Paul Endresen, CEO of 3LC, demonstrates the company’s latest edge AI and vision technologies and products at the 2025 Embedded Vision Summit. Specifically, Endresen demonstrates how to uncover hidden treasures in the COCO dataset – like unlabeled forks and phantom objects – using his platform’s training-time introspection tools. In this demo, 3LC eavesdrops on a