TI’s new application brief and companion demo outline how mmWave radar, camera input, FPGA-based sensor bridging and NVIDIA Holoscan can be combined into a low-latency perception pipeline for humanoids and other autonomous machines.

Texas Instruments, D3 Embedded, Lattice Semiconductor and NVIDIA are outlining a concrete radar-camera fusion stack for robotics rather than just talking about sensor fusion at a high level. At NVIDIA GTC and in recent releases, the companies have shown how TI’s IWR6243 mmWave radar can be fused with camera data on NVIDIA Holoscan, using a Lattice-based Holoscan Sensor Bridge and D3-developed application software.

For robotics developers, the pitch is straightforward: cameras are rich sensors, but they are not always dependable on their own. TI’s brief argues that vision can degrade in glare, fog, dust, rain and low light, while mmWave radar contributes direct range, velocity and angle measurements that are independent of lighting conditions. TI also highlights a practical robotics benefit: radar can help detect hazards such as transparent or reflective obstacles that vision systems may miss, and can be used to maintain a dynamic “safety bubble” around a robot based on object distance and relative speed.
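The brief describes the safety bubble only as being based on object distance and relative speed. One simple way to combine those two measurements is a time-to-collision check. The sketch below is a hypothetical illustration of that idea. The thresholds, the three zone names and the sign convention (negative radial speed means the object is approaching) are all assumptions, not values or logic from TI's brief.

```python
from dataclasses import dataclass

@dataclass
class RadarObject:
    range_m: float           # distance to the object, metres
    radial_speed_mps: float  # assumed convention: negative means approaching

def time_to_collision(obj: RadarObject) -> float:
    """Seconds until contact at constant closing speed; infinity if not approaching."""
    closing_speed = -obj.radial_speed_mps
    if closing_speed <= 0.0:
        return float("inf")
    return obj.range_m / closing_speed

def safety_zone(obj: RadarObject, stop_range_m: float = 0.5,
                slow_range_m: float = 1.5, min_ttc_s: float = 1.0) -> str:
    """Hypothetical three-level safety bubble driven by distance and relative speed."""
    ttc = time_to_collision(obj)
    if obj.range_m <= stop_range_m or ttc <= min_ttc_s:
        return "stop"
    if obj.range_m <= slow_range_m or ttc <= 2.0 * min_ttc_s:
        return "slow"
    return "clear"

if __name__ == "__main__":
    print(safety_zone(RadarObject(3.0, -2.5)))   # TTC 1.2 s -> "slow"
    print(safety_zone(RadarObject(3.0, 0.5)))    # moving away -> "clear"
    print(safety_zone(RadarObject(5.0, -10.0)))  # TTC 0.5 s -> "stop"
```

Using relative speed as well as distance means a fast-approaching object three metres away can trigger a slowdown, while a slow-moving object at the same distance does not.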

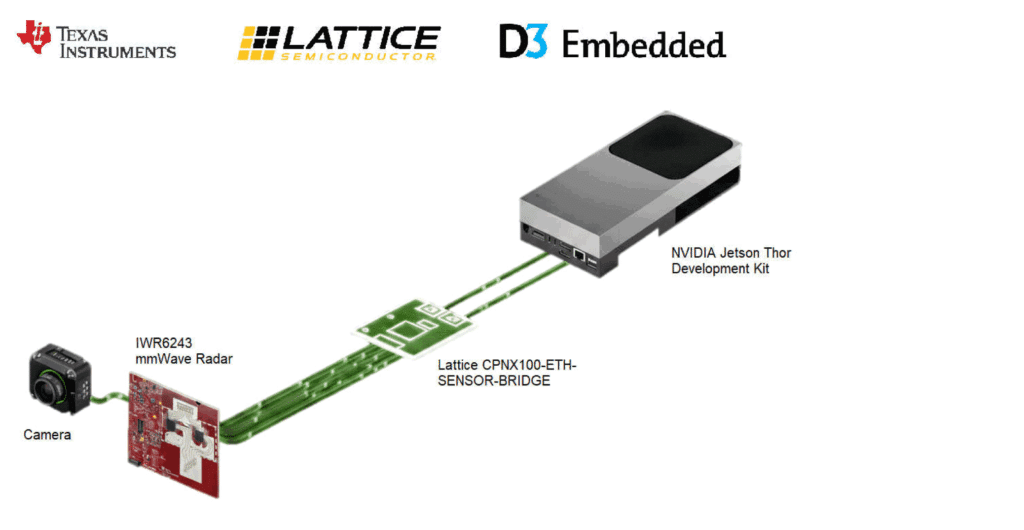

The reference architecture is built around a low-latency, GPU-centric data path. TI describes a hardware stack that combines an IWR6243-based radar front end, camera module, CSI-2 adapter board, Lattice CertusPro-NX Sensor-to-Ethernet Bridge Board, and NVIDIA Jetson Thor running the Holoscan SDK. The IWR6243 itself is a 57 GHz to 64 GHz single-chip 4RX/3TX mmWave transceiver, while the reference hardware uses a cascade EVM with two IWR6243 devices to expose raw radar data for development and validation.
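For a rough sense of scale, the standard FMCW relationships can be worked through with the numbers above. Range resolution is c/(2B), where B is the chirp sweep bandwidth, and a MIMO radar forms TX × RX virtual channels. The 57 GHz to 64 GHz band spans 7 GHz, but the sweep bandwidth actually used depends on the chirp configuration, so the 4 GHz case below is a hypothetical comparison. The 48-channel figure assumes the two cascaded devices operate as one synchronized 6TX/8RX array; TI's brief is not quoted here on that point.

```python
C = 299_792_458.0  # speed of light, m/s

def range_resolution_m(sweep_bandwidth_hz: float) -> float:
    """FMCW range resolution: c / (2 * B)."""
    return C / (2.0 * sweep_bandwidth_hz)

def virtual_channels(n_tx: int, n_rx: int) -> int:
    """Number of virtual antenna elements in a TX x RX MIMO array."""
    return n_tx * n_rx

if __name__ == "__main__":
    print(f"{range_resolution_m(7e9) * 100:.2f} cm")  # full 7 GHz band -> 2.14 cm
    print(f"{range_resolution_m(4e9) * 100:.2f} cm")  # hypothetical 4 GHz sweep -> 3.75 cm
    print(virtual_channels(3, 4))  # one IWR6243 -> 12
    print(virtual_channels(6, 8))  # two devices, if fully cascaded -> 48
```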

Reference architecture for TI’s IWR6243 radar-camera fusion pipeline on NVIDIA Holoscan, using a Lattice sensor bridge and D3 Embedded integration software.

What matters technically is how that data moves. According to TI, raw radar data leaves the radar over CSI-2, is handed to the Holoscan Sensor Bridge, and is then streamed into GPU-accessible memory using zero-copy mechanisms over Ethernet, minimizing CPU involvement. Once on the GPU, Holoscan orchestrates a graph-based pipeline in which radar signal processing, camera preprocessing, neural network inference and multimodal fusion are scheduled deterministically. Lattice’s role here is not peripheral: the bridge board is the aggregation and transport layer that receives the sensor streams and handles deterministic data movement into the compute platform.
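Holoscan's own operator and scheduler APIs are not reproduced here. As a library-agnostic illustration of what graph-based scheduling means, the sketch below uses Python's standard `graphlib` module to run a hypothetical per-frame operator graph in dependency order: radar and camera branches run independently, and fusion runs only after both have produced output. The operator names and string payloads are placeholders; in the real pipeline the edges would carry GPU-resident buffers.

```python
from graphlib import TopologicalSorter  # Python 3.9+

# Hypothetical operator graph: each key lists the operators it depends on.
PIPELINE = {
    "radar_rx": set(),
    "camera_rx": set(),
    "radar_dsp": {"radar_rx"},
    "camera_preproc": {"camera_rx"},
    "inference": {"camera_preproc"},
    "fusion": {"radar_dsp", "inference"},
}

# Placeholder operators: each takes the frame index and its upstream outputs.
OPERATORS = {
    "radar_rx": lambda f, i: f"adc[{f}]",
    "camera_rx": lambda f, i: f"img[{f}]",
    "radar_dsp": lambda f, i: f"points({i['radar_rx']})",
    "camera_preproc": lambda f, i: f"tensor({i['camera_rx']})",
    "inference": lambda f, i: f"dets({i['camera_preproc']})",
    "fusion": lambda f, i: f"fused({i['radar_dsp']}, {i['inference']})",
}

def run_frame(graph, operators, frame):
    """Execute every operator once for this frame in a dependency-respecting order."""
    outputs = {}
    for name in TopologicalSorter(graph).static_order():
        inputs = {dep: outputs[dep] for dep in graph[name]}
        outputs[name] = operators[name](frame, inputs)
    return outputs

if __name__ == "__main__":
    print(run_frame(PIPELINE, OPERATORS, 0)["fusion"])
    # -> fused(points(adc[0]), dets(tensor(img[0])))
```

Expressing the pipeline as a dependency graph rather than a hand-written call sequence is what lets a scheduler run independent branches concurrently while guaranteeing that fusion always sees complete inputs.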

TI’s brief also makes clear that this is not just a sensor-plumbing demo. The fusion pipeline begins with synchronized radar and camera capture. Radar processing turns IWR6243 measurements into point clouds carrying both spatial structure and motion characteristics, while camera models extract semantic cues such as object identity, pose and classification. The fusion stage then aligns radar and visual detections in space and time so that radar-derived depth and velocity can complement vision-based inference in cases such as fast-moving objects, partial occlusion or visually degraded scenes. A preview post from the demo team described the setup as capturing raw ADC samples from TI radar ICs, routing them through a Lattice FPGA-based Holoscan Sensor Bridge, and processing them with NVIDIA radar software.
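The first stages of turning raw ADC samples into points with range and motion are conventionally a range FFT over the samples within each chirp, followed by a Doppler FFT across chirps. The NumPy sketch below shows those two steps on one simulated receive channel with a single synthetic target. It is not D3's or NVIDIA's processing chain: real point-cloud generation also needs detection (for example CFAR) and angle estimation across the virtual channels, and the bin sizes used here are hypothetical.

```python
import numpy as np

def range_doppler_map(adc: np.ndarray) -> np.ndarray:
    """adc: complex samples shaped (num_chirps, samples_per_chirp) for one RX channel."""
    win_range = np.hanning(adc.shape[1])    # taper fast-time samples to reduce sidelobes
    win_doppler = np.hanning(adc.shape[0])  # taper slow-time (chirp) samples
    windowed = adc * win_doppler[:, None] * win_range[None, :]
    range_fft = np.fft.fft(windowed, axis=1)  # fast time -> range bins
    rd = np.fft.fftshift(np.fft.fft(range_fft, axis=0), axes=0)  # slow time -> Doppler, zero velocity centred
    return np.abs(rd)

def strongest_detection(rd_map: np.ndarray, range_bin_m: float, velocity_bin_mps: float):
    """Return (range_m, velocity_mps) of the highest-magnitude cell."""
    doppler_idx, range_idx = np.unravel_index(np.argmax(rd_map), rd_map.shape)
    velocity = (doppler_idx - rd_map.shape[0] // 2) * velocity_bin_mps
    return range_idx * range_bin_m, velocity

if __name__ == "__main__":
    num_chirps, num_samples = 64, 256
    n = np.arange(num_samples)
    m = np.arange(num_chirps)
    # Synthetic target at range bin 40 and Doppler bin +5.
    adc = np.exp(2j * np.pi * (40 * n[None, :] / num_samples + 5 * m[:, None] / num_chirps))
    rng, vel = strongest_detection(range_doppler_map(adc), range_bin_m=0.05, velocity_bin_mps=0.1)
    print(f"range {rng:.2f} m, velocity {vel:.2f} m/s")  # -> range 2.00 m, velocity 0.50 m/s
```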

D3 Embedded appears to be doing a substantial share of the solution integration work. TI says the application software layered on top of Holoscan was developed by D3 and implements radar configuration, signal processing, sensor alignment and fusion logic. In practice, that means TI supplies the radar front end, Lattice provides the bridge hardware, NVIDIA provides the streaming and AI runtime, and D3 turns those ingredients into a working perception pipeline that can generate radar point clouds, align them with camera data and feed AI-driven fusion in a centralized architecture.
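Sensor alignment in a pipeline like this typically has a temporal part (pairing each radar frame with the camera frame captured closest in time) and a spatial part (projecting radar points into the image using calibrated extrinsics and camera intrinsics). The sketch below shows both under stated assumptions: the 20 ms skew tolerance, the pinhole intrinsics, the 5 cm mounting offset and the radar axis convention (x right, y forward, z up, mapped to a camera frame with x right, y down, z forward) are all hypothetical, not taken from D3's software.

```python
import numpy as np

def nearest_frame(radar_ts: float, camera_ts, max_skew_s: float = 0.02):
    """Index of the camera timestamp closest to radar_ts, or None if the skew is too large."""
    camera_ts = np.asarray(camera_ts, dtype=float)
    idx = int(np.argmin(np.abs(camera_ts - radar_ts)))
    return idx if abs(camera_ts[idx] - radar_ts) <= max_skew_s else None

def project_radar_points(points_radar: np.ndarray, R: np.ndarray, t: np.ndarray, K: np.ndarray):
    """Project Nx3 radar-frame points (metres) to pixel coordinates.

    R, t: rotation and translation from the radar frame to the camera frame.
    K: 3x3 pinhole intrinsics. Points behind the camera are dropped.
    Returns (uv for visible points, boolean mask over the input points).
    """
    pts_cam = points_radar @ R.T + t
    in_front = pts_cam[:, 2] > 0.0
    uvw = pts_cam[in_front] @ K.T
    return uvw[:, :2] / uvw[:, 2:3], in_front

# Assumed axis mapping: camera x = radar x, camera y = -radar z, camera z = radar y.
R_RADAR_TO_CAM = np.array([[1.0, 0.0, 0.0], [0.0, 0.0, -1.0], [0.0, 1.0, 0.0]])
T_RADAR_TO_CAM = np.array([0.0, 0.05, 0.0])  # hypothetical: radar 5 cm below the camera
K_CAM = np.array([[600.0, 0.0, 320.0], [0.0, 600.0, 240.0], [0.0, 0.0, 1.0]])

if __name__ == "__main__":
    print(nearest_frame(1.000, [0.967, 0.999, 1.033]))  # -> 1
    pts = np.array([[0.5, 2.0, -0.1], [0.0, -1.0, 0.0]])  # second point is behind the sensor
    uv, mask = project_radar_points(pts, R_RADAR_TO_CAM, T_RADAR_TO_CAM, K_CAM)
    print(uv, mask)  # first point lands near pixel (470, 285); second is masked out
```

Once radar points carry pixel coordinates, each one can be associated with the camera detection whose bounding box contains it, which is how radar depth and velocity can be attached to vision-based object labels.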

Taken together, the IWR6243, Holoscan Sensor Bridge, Jetson Thor and D3 software form what TI presents as a complete hardware and software foundation optimized for high-bandwidth sensing, low latency and scalability toward humanoid and robotics deployments. For engineering teams building physical AI systems, that makes this collaboration worth watching: it is a documented template for bringing radar into modern multimodal perception stacks with a credible path from bring-up to deployment.

Read the complete technical brief here: https://www.ti.com/lit/ab/sbaa790/sbaa790.pdf