From ADAS to Robotaxi: How Vision Systems Must Level Up to Meet New Mobility Use Cases (Part 2)

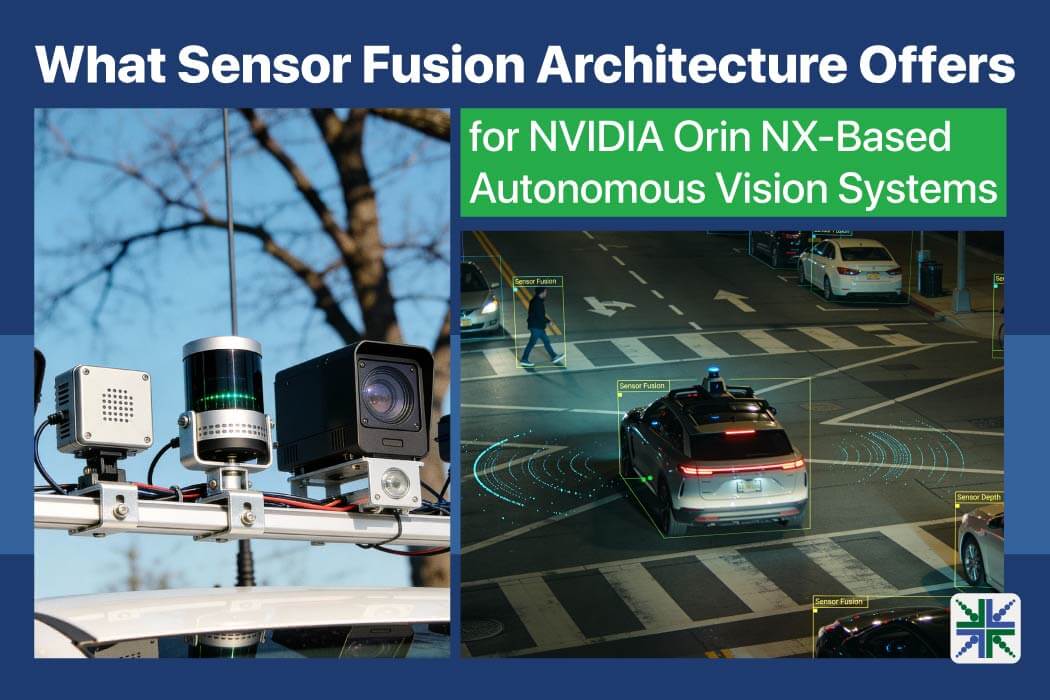

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems.

Key Takeaways

- How urban lighting and motion define robotaxi imaging needs
- Which camera features support reliable perception during day and night operation
- Why unified AI vision boxes reduce latency and coordination gaps
- How integrated vision platforms […]