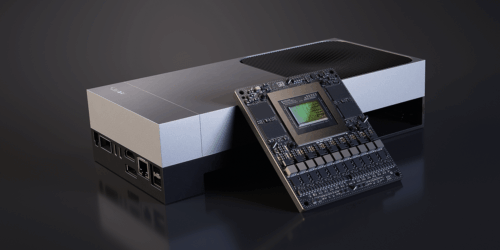

Build High-performance Vision AI Pipelines with NVIDIA CUDA-accelerated VC-6

This blog post was originally published on NVIDIA’s website. It is reprinted here with the permission of NVIDIA.

The constantly increasing compute throughput of NVIDIA GPUs presents a new opportunity for optimizing vision AI workloads: keeping the hardware fed with data. As GPU performance continues to scale, traditional data pipeline stages, such as I/O from […]
