Resources

In-depth information about edge AI and vision applications, technologies, products, markets and trends.

The content in this section of the website comes from Edge AI and Vision Alliance members and other industry luminaries.

All Resources

AI at the Edge: Designing for Constraints from Day One

This blog post was originally published at ModelCat’s website. It is reprinted here with the permission of ModelCat. Artificial intelligence has never been more visible yet more misunderstood. Every week seems to bring new headlines

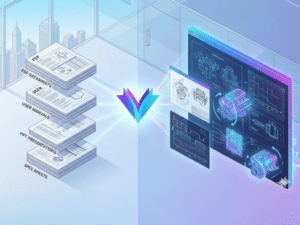

Introducing the Electronics Industry’s First AI Agent with Visual Reasoning

This blog post was originally published at Rapidflare’s website. It is reprinted here with the permission of Rapidflare. AI has made extraordinary progress in understanding language. But in industries like semiconductors, electronics, manufacturing, medical devices, and

Renesas Announces General Availability of Renesas 365

NUREMBERG, Germany and TOKYO, Japan — Renesas Electronics Corporation (TSE: 6723), a premier supplier of advanced semiconductor solutions, today announced the general availability of Renesas 365, Powered by Altium, an intelligent, model-based platform that integrates device exploration,

How to Ensure that High-tech Products Such as Smart Glasses are Brought to Market Quickly and Cost-effectively

This blog post was originally published at Helbling Technik’s website. It is reprinted here with the permission of Helbling Technik. A cure for myopia: With more than 2.6 billion people living with the condition

BrainChip Enables the Next Generation of Always-On Wearables with the AkidaTag Reference Platform

LAGUNA HILLS, Calif. — March 10, 2026 — BrainChip Holdings Ltd. (ASX: BRN, OTCQX: BRCHF, ADR: BCHPY), the world’s first commercial producer of ultra-low-power, fully digital, event-based neuromorphic AI, today announced at Embedded World in Nuremberg, Germany the launch of AkidaTag©,

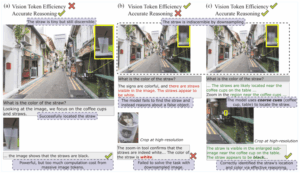

ERGO: Efficient High-Resolution Visual Understanding for Vision-Language Models

This blog post was originally published at Nota AI’s website. It is reprinted here with the permission of Nota AI. Key Takeaways: Efficient coarse-to-fine pipeline: A two-stage reasoning pipeline that first processes low-resolution inputs to identify

Edge Container Registries Explained: How to Distribute Images Reliably at Scale

This blog post was originally published at Avassa’s website. It is reprinted here with the permission of Avassa. A mostly hidden piece of the container application puzzle is the image registry. If you’ve used Linux

TI expands Microcontroller Portfolio and Software Ecosystem to Enable Edge AI in Every Device

New MCUs with the TinyEngine NPU join TI’s comprehensive portfolio of AI-enabled hardware, software and tools, allowing engineers to deploy intelligence anywhere. Key Takeaways: TI’s integrated TinyEngine NPU can run AI models with up to

Arduino Announces Arduino VENTUNO Q, Powered by Qualcomm Dragonwing IQ8 Series

The new platform by the leading open-source hardware provider is purpose-built for generative AI, robotics, and actuation — making advanced capabilities accessible to all. Ahead of Embedded World, Arduino announced the upcoming launch of

Technologies

Upcoming Webinar on Agentic Memory Systems

On April 16, 2026, at 1:00 pm EDT (10:00 am PDT), Boston.AI will deliver the webinar “Remembering to Forget: Agentic Memory Systems and Context Constraints.” From the event page: As AI agents evolve from stateless responders into persistent, goal-directed systems, memory has become a central design challenge. The question is no longer just what agents

From hardware to intelligence: The Qualcomm AI camera platform for scalable security solutions

At ISC West 2026, Qualcomm Technologies showcases its vision for the future of smart camera and security. Key Takeaways: End-to-end AI development tools and the Qualcomm Insight Platform to enable customers to develop AI features once and scale across their entire product portfolio. Qualcomm Technologies’ camera solutions span video security, law enforcement and enterprise body

Lightweight Keyword Spotting Solution from Microchip

Microchip presents a customizable, target-agnostic solution to program wake words and voice commands. The ML model, generated and tested using a custom application, has low latency and a minimal memory footprint, making it ideal for resource-constrained embedded systems. The ML model can be integrated into voice-based applications running on any 32-bit microcontroller or microprocessor running

Applications

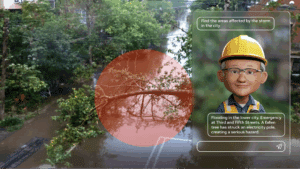

2026: The Year Intelligence Gets Physical

This article was originally published at Analog Devices’ website. It is reprinted here with the permission of Analog Devices. Artificial intelligence is entering a new phase where models interpret contextual data whilst interacting with the physical world in real time. At Analog Devices, Inc. (ADI), we call this Physical Intelligence: intelligent systems that can perceive, reason

From Warehouse to Wallet: New State of AI in Retail and CPG Survey Uncovers How AI Is Rewiring Supply Chains and Customer Experiences

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. The third annual NVIDIA State of AI in Retail and CPG survey shows why nine in 10 retailers will increase AI budgets in 2026, focusing on open-source models and software, as well as agentic and physical AI. Highlights

STMicroelectronics and Leopard Imaging Accelerate Robotics Vision with NVIDIA Jetson-ready Multi-sensor Module

Key Takeaways: Multimodal module combining 2D imaging, 3D depth sensing, and human-like motion perception; NVIDIA Holoscan Sensor Bridge ensuring multi-gigabit plug and play connectivity with Jetson platforms; fully supported by NVIDIA Isaac open robot development platform. STMicroelectronics and Leopard Imaging® have introduced an all-in-one multimodal vision module for humanoid and other advanced robotics systems. Combining

Functions

AI On: 3 Ways to Bring Agentic AI to Computer Vision Applications

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Learn how to integrate vision language models into video analytics applications, from AI-powered search to fully automated video analysis. Today’s computer vision systems excel at identifying what happens in physical spaces and processes, but lack the abilities to explain the

SAM3: A New Era for Open‑Vocabulary Segmentation and Edge AI

Quality training data – especially segmented visual data – is a cornerstone of building robust vision models. Meta’s recently announced Segment Anything Model 3 (SAM3) arrives as a potential game-changer in this domain. SAM3 is a unified model that can detect, segment, and even track objects in images and videos using both text and visual

TLens vs VCM Autofocus Technology

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. In this blog, we’ll walk you through how TLens technology differs from traditional VCM autofocus, how TLens combined with e-con Systems’ Tinte ISP enhances camera performance, key advantages of TLens over mechanical autofocus systems, and applications