Processors for Embedded Vision


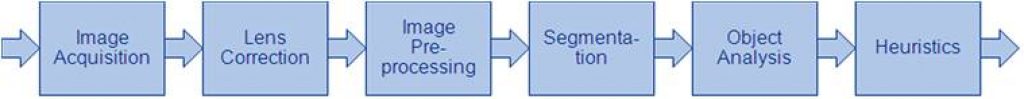

This technology category includes any device that executes vision algorithms or vision system control software. The following diagram shows a typical computer vision pipeline; processors are often optimized for the compute-intensive portions of the software workload.

The following examples represent distinctly different types of processor architectures for embedded vision, and each has advantages and trade-offs that depend on the workload. For this reason, many devices combine multiple processor types into a heterogeneous computing environment, often integrated into a single semiconductor component. In addition, a processor can be accelerated by dedicated hardware that improves performance on computer vision algorithms.

General-purpose CPUs

While computer vision algorithms can run on most general-purpose CPUs, desktop processors may not meet the design constraints of some systems. However, x86 processors and system boards can leverage the PC infrastructure for low-cost hardware and broadly supported software development tools. Several Alliance Member companies also offer devices that integrate a RISC CPU core. A general-purpose CPU is best suited for heuristics, complex decision-making, network access, user interface, storage management, and overall control. A general-purpose CPU may be paired with a vision-specialized device for better performance on pixel-level processing.

Graphics Processing Units

High-performance GPUs deliver massive amounts of parallel computing potential, and graphics processors can be used to accelerate the portions of the computer vision pipeline that perform parallel processing on pixel data. While general-purpose GPUs (GPGPUs) have primarily been used for high-performance computing (HPC), even mobile graphics processors and integrated graphics cores are gaining GPGPU capability, which brings GPU acceleration within the power constraints of a wider range of vision applications. In designs that require 3D processing in addition to embedded vision, a GPU will already be part of the system and can be used to assist a general-purpose CPU with many computer vision algorithms. Many examples exist of x86-based embedded systems with discrete GPGPUs.
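As a rough illustration of what "parallel processing on pixel data" means, the following Python sketch applies a threshold to every pixel of a tiny image. It is not GPU code: the function names, the 4x4 image, and the cutoff value are all hypothetical, and a thread pool stands in for the GPU's many threads. The point is only that each output pixel depends on a single input pixel, so the work can be split across workers without changing the result.

```python
from concurrent.futures import ThreadPoolExecutor


def threshold_pixel(value, cutoff=128):
    """Per-pixel kernel: the output depends only on this one input pixel."""
    return 255 if value >= cutoff else 0


def process_row(row):
    """Apply the per-pixel kernel to one image row."""
    return [threshold_pixel(v) for v in row]


def process_serial(image):
    """Process rows one after another, as a single CPU thread would."""
    return [process_row(row) for row in image]


def process_parallel(image, workers=4):
    """Hand rows to a pool of workers; map() returns results in row order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(process_row, image))


# Hypothetical 4x4 grayscale image (values 0..255).
image = [
    [10, 200, 130, 90],
    [128, 127, 255, 0],
    [64, 192, 32, 224],
    [129, 1, 250, 100],
]

serial = process_serial(image)
parallel = process_parallel(image)
print(serial == parallel)
```

Python threads will not speed up this particular loop; the sketch only demonstrates the independence between pixels that a GPU exploits in hardware.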

Digital Signal Processors

DSPs are very efficient for processing streaming data, since the bus and memory architecture are optimized to process high-speed data as it traverses the system. This architecture makes DSPs an excellent solution for processing image pixel data as it streams from a sensor source. Many DSPs for vision have been enhanced with coprocessors that are optimized for processing video inputs and accelerating computer vision algorithms. The specialized nature of DSPs makes these devices inefficient for processing general-purpose software workloads, so DSPs are usually paired with a RISC processor to create a heterogeneous computing environment that offers the best of both worlds.
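The streaming style of processing described above can be sketched in a few lines of Python. This is a minimal illustration under assumed names and values (`stream_fir`, the `[1, 2, 1]` smoothing taps, and the sample pixel row are all hypothetical), not a model of any particular DSP. Each pixel is consumed as it arrives, only a short delay line is kept in memory, and the inner loop is the multiply-accumulate operation that DSP hardware is built to execute efficiently.

```python
from collections import deque


def stream_fir(pixels, taps):
    """Filter a pixel stream one sample at a time.

    Only len(taps) samples are held at once, so the full row or frame
    never needs to be buffered.
    """
    delay = deque([0] * len(taps), maxlen=len(taps))
    for p in pixels:
        delay.appendleft(p)  # newest sample at index 0, oldest drops off
        acc = 0
        for coeff, sample in zip(taps, delay):
            acc += coeff * sample  # multiply-accumulate (MAC)
        yield acc


# Hypothetical row of pixels arriving from a sensor, and a smoothing kernel.
sensor_row = iter([4, 8, 4, 0])
filtered = list(stream_fir(sensor_row, taps=[1, 2, 1]))
print(filtered)
```

Because `stream_fir` is a generator, output samples become available as soon as the corresponding input arrives, which mirrors how data is processed as it traverses the system.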

Field Programmable Gate Arrays (FPGAs)

Instead of incurring the high cost and long lead times of a custom ASIC to accelerate computer vision systems, designers can use an FPGA as a reprogrammable solution for hardware acceleration. With millions of programmable gates, hundreds of I/O pins, and compute performance in the trillions of multiply-accumulates per second (tera-MACs), high-end FPGAs offer the potential for the highest performance in a vision system. Unlike a CPU, which has to time-slice or multi-thread tasks as they compete for compute resources, an FPGA can accelerate multiple portions of a computer vision pipeline simultaneously. Because the parallel nature of FPGAs offers such an advantage for accelerating computer vision, many of the algorithms are available as optimized libraries from semiconductor vendors. These computer vision libraries also include preconfigured interface blocks for connecting to other vision devices, such as IP cameras.
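To put the tera-MAC figure in context, the following Python sketch estimates how many multiply-accumulates per second a single convolution filter needs on a video stream. The resolution, frame rate, and kernel size are assumed example values, and the function name is hypothetical; the estimate ignores memory bandwidth, I/O, and every other practical limit.

```python
def conv_macs_per_second(width, height, fps, kernel_size, channels=1):
    """MACs per second for one square convolution over every pixel of a stream."""
    macs_per_pixel = kernel_size * kernel_size * channels
    return width * height * fps * macs_per_pixel


# Assumed workload: one 5x5 filter on single-channel 1920x1080 video at 60 fps.
rate = conv_macs_per_second(1920, 1080, 60, 5)
print(rate)  # 3110400000, about 3.1 giga-MACs per second

# How many such filters fit, in principle, in a budget of one tera-MAC per second.
filters_per_tera_mac = 10**12 // rate
print(filters_per_tera_mac)
```

Under these assumptions one filter needs about 3.1 billion MACs per second, so a device with tera-MAC throughput has arithmetic headroom for many pipeline stages running side by side.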

Vision-Specific Processors and Cores

Application-specific standard products (ASSPs) are specialized, highly integrated chips tailored for specific applications or application sets. ASSPs may incorporate a CPU, or use a separate CPU chip. By virtue of their specialization, ASSPs for vision processing typically deliver superior cost- and energy-efficiency compared with other types of processing solutions. Among other techniques, ASSPs deliver this efficiency through the use of specialized coprocessors and accelerators. And, because ASSPs are by definition focused on a specific application, they are usually provided with extensive associated software. This same specialization, however, means that an ASSP designed for vision is typically not suitable for other applications. ASSPs’ unique architectures can also make them more difficult to program than other kinds of processors; some ASSPs are not user-programmable.

A Smarter Vision for AI at ISC West 2026

Ambarella will be hosting an invitation-only exhibition at ISC West 2026, taking place March 25 – 27 at the Venetian Expo in Las Vegas, where we’ll demonstrate how edge AI is enabling the next generation of intelligent security and physical AI systems. This year’s theme, “A Smarter Vision for AI,” reflects an important shift underway

UCIe & Chiplets: A Practical Guide to Modular SoC Design

This blog post was originally published at Tessolve’s website. It is reprinted here with the permission of Tessolve. The semiconductor industry is undergoing a paradigm shift. Traditional monolithic System-on-Chip (SoC) designs are giving way to modular architectures that leverage interoperable chiplets. At the heart of this evolution is Universal Chiplet Interconnect Express (UCIe). This open standard

Cadence and NVIDIA Unveil Accelerated Engineering Solutions Purpose-Built for Agentic AI Chip and System Design

New agentic integrated circuit (IC) and physical AI accelerated solutions enable engineers to solve previously impossible chip, system and AI factory challenges SAN JOSE, Calif.— Today, Cadence announced an expansion of its broad collaboration with NVIDIA to accelerate Cadence’s Design for AI and AI for Design strategy. The next generation of agentic AI design solutions includes autonomous,

STMicroelectronics and Leopard Imaging Accelerate Robotics Vision with NVIDIA Jetson-ready Multi-sensor Module

Key Takeaways Multimodal module combining 2D imaging, 3D depth sensing, and human-like motion perception NVIDIA Holoscan Sensor Bridge ensuring multi-gigabit plug and play connectivity with Jetson platforms Fully supported by NVIDIA Isaac open robot development platform STMicroelectronics and Leopard Imaging® have introduced an all-in-one multimodal vision module for humanoid and other advanced robotics systems. Combining

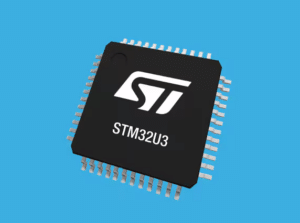

HSP: The new hardware accelerator that transforms an ultra-low-power STM32U3 into an AI machine

This blog post was originally published at STMicroelectronics’s website. It is reprinted here with the permission of STMicroelectronics. ST is launching today its hardware signal processor (HSP), a new hardware unit that the industry will experience in more and more of our upcoming STM32 microcontrollers, starting today with our new STM32U3B5/C5 devices featuring 2 MB of flash. In a

NVIDIA, T-Mobile and Partners Integrate Physical AI Applications on AI-RAN-Ready Infrastructure

News Summary: T-Mobile pilots NVIDIA RTX PRO 6000 Blackwell Server Edition AI infrastructure to demonstrate physical AI applications at the edge, complementing the AI-RAN Innovation Center’s distributed network Physical AI developers including Fogsphere, LinkerVision, Levatas, Vaidio and Siemens Energy are building reasoning and vision AI agents to the edge using the NVIDIA Metropolis Blueprint for

Lattice Joins NVIDIA Halos Ecosystem to Advance Safety for Physical AI with Holoscan Sensor Bridge

HILLSBORO, Ore. – Mar. 16, 2026 – Lattice Semiconductor (NASDAQ: LSCC), the low power programmable leader, today announced it has joined the NVIDIA Halos AI Systems Inspection Lab ecosystem, the first ANSI National Accreditation Board (ANAB) accredited inspection lab for AI-driven physical systems. Announced at the NVIDIA GTC 2026, Lattice will engage with NVIDIA and other Halos ecosystem members to

NVIDIA and Global Robotics Leaders Take Physical AI to the Real World

News Summary: Physical AI leaders across robot brain developers, industrial, and surgical robot giants and humanoid pioneers including ABB Robotics, AGIBOT, Agility, CMR Surgical, FANUC, Figure, Hexagon Robotics, KUKA, Medtronic, Skild AI, Universal Robots, World Labs and YASKAWA are building on NVIDIA technology to develop and deploy physical AI at scale. NVIDIA unveils new NVIDIA

NXP Delivers New Innovations for Advanced Physical AI with NVIDIA

Key Takeaways: Secure, reliable real-time data processing and transport solutions for next-generation physical AI applications, developed in collaboration with NVIDIA NVIDIA humanoid robotics solutions integrated into NXP’s safe, secure edge portfolio cut development costs and speed time to market First in a series of NXP’s foundational robotics solutions designed to accelerate physical AI development and

BrainChip Enables the Next Generation of Always-On Wearables with the AkidaTag Reference Platform

LAGUNA HILLS, Calif. — March 10, 2026 — BrainChip Holdings Ltd. (ASX: BRN, OTCQX: BRCHF, ADR: BCHPY), the world’s first commercial producer of ultra-low-power, fully digital, event-based neuromorphic AI, today announced at Embedded World in Nuremberg, Germany the launch of AkidaTag©, a reference platform for smart sensing in a battery-powered tag powered by Nordic Semiconductor. Intelligent wearable and remote industrial sensors

TI Expands Microcontroller Portfolio and Software Ecosystem to Enable Edge AI in Every Device

New MCUs with the TinyEngine NPU join TI’s comprehensive portfolio of AI-enabled hardware, software and tools, allowing engineers to deploy intelligence anywhere Key Takeaways: TI’s integrated TinyEngine NPU can run AI models with up to 90 times lower latency and more than 120 times lower energy utilization per inference than similar MCUs without an accelerator.

Arduino Announces Arduino VENTUNO Q, Powered by Qualcomm Dragonwing IQ8 Series

The new platform by the leading open-source hardware provider is purpose-built for generative AI, robotics, and actuation — making advanced capabilities accessible to all. Ahead of Embedded World, Arduino announced the upcoming launch of its newest platform to democratize edge AI, Arduino® VENTUNO™ Q. Named after the Italian word for twenty-one, VENTUNO Q builds on the iconic

Intel Launches Core Series 2 Processor with Real-Time Performance and Expands Edge AI Portfolio

New industrial-ready platform delivers breakthrough deterministic performance; sixth Edge AI suite targets healthcare applications NUREMBERG, Germany — March 9, 2026 — At Embedded World 2026, Intel launched the Intel® Core™ processor Series 2 with P-cores, an industrial-ready platform engineered for mission-critical edge applications. Intel also announced its latest Edge AI suite for Health & Life Sciences, providing validated reference pipelines and benchmarking tools

Synaptics Introduces SYN765x, an Industry-Leading AI-Native Wi-Fi® 7 Solution for Integrated IoT Edge Applications

SAN JOSE, Calif., Mar 10, 2026 — Synaptics Incorporated (Nasdaq: SYNA), today announced the SYN765x, an AI-native wireless solution that redefines Edge intelligence. As an industry-leading single-chip device combining AI-optimized compute with integrated Wi-Fi® 7, the SYN765x is designed to bring scalable, real-time intelligence directly to smart appliances, home automation systems, and Industrial IoT (IIoT) applications. SYN765x

RTOS vs. Bare-Metal: Decision Matrix Tool for Projects Based on High-End Microcontrollers

This blog post was originally published at eInfochips’ website. It is reprinted here with the permission of eInfochips. Introduction: When building a system with a powerful microcontroller (MCU) or microprocessor, such as an ARM Cortex-M4, M7, R5, RXv3, A15, or A53, one of the key decisions developers face is whether to use bare-metal programming or a

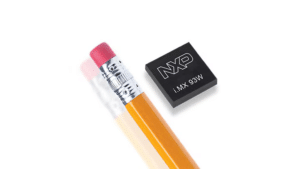

NXP’s New i.MX 93W Fuses Edge Compute and Secure Wireless Connectivity to Accelerate Physical AI

Key Takeaways: First applications processor to combine an AI NPU with secure, tri-radio connectivity, replacing up to 60 discrete components with a single package Accelerates coordinated AI agent deployment with integrated edge compute and secure connectivity, supported by NXP software and eIQ® AI enablement Pre-certified designs simplify wireless certification, eliminating RF complexity and speeding time-to-market

Conversations at the Edge with NXP

This blog post was originally published at Au-Zone’s website. It is reprinted here with the permission of Au-Zone. Are Single-Sensor Robots Obsolete? We think so, and we’re here to show you why. Au-Zone is proud to be featured in NXP Semiconductors’ Conversations at the Edge video series, a multi-part collaboration exploring innovation at the intersection of

TI Accelerates the Next Generation of Physical AI with NVIDIA

News highlights: TI and NVIDIA are collaborating to accelerate the path from simulation to the safe deployment of humanoid robots in the real world. As part of this collaboration, TI integrated its mmWave radar technology with NVIDIA Jetson Thor and NVIDIA Holoscan to enable low-latency 3D perception and safety awareness for physical AI applications. TI

STM32U3B5/U3C5: Bringing High-Performance DSP & Edge AI to Ultralow Power Designs

Built on the Arm® Cortex®‑M33 core, the STM32U3B5/U3C5 MCUs combine up to 2 Mbytes of dual‑bank flash memory with 640 Kbytes of RAM and are available in packages from 48 to 144 pins (UFQFPN, WLCSP, LQFP, and UFBGA). The lines introduce a hardware signal processor (HSP) to the STM32U3 portfolio, offloading complex DSP and edge‑AI workloads and

From ADAS to Robotaxi: How Vision Systems Must Level Up to Meet New Mobility Use Cases (Part 2)

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. Key Takeaways How urban lighting and motion define robotaxi imaging needs Which camera features support reliable perception during day and night operation Why unified AI vision boxes reduce latency and coordination gaps How integrated vision platforms