Processors for Embedded Vision

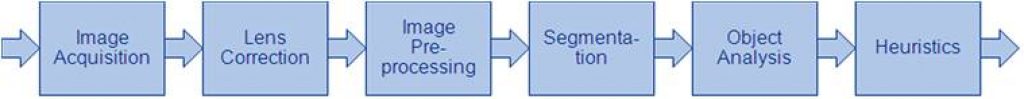

This technology category includes any device that executes vision algorithms or vision system control software. The following diagram shows a typical computer vision pipeline; processors are often optimized for the compute-intensive portions of the software workload.

The following examples represent distinctly different types of processor architectures for embedded vision, and each has advantages and trade-offs that depend on the workload. For this reason, many devices combine multiple processor types into a heterogeneous computing environment, often integrated into a single semiconductor component. In addition, a processor can be accelerated by dedicated hardware that improves performance on computer vision algorithms.

General-purpose CPUs

While computer vision algorithms can run on most general-purpose CPUs, desktop processors may not meet the design constraints of some systems. However, x86 processors and system boards can leverage the PC infrastructure for low-cost hardware and broadly supported software development tools. Several Alliance Member companies also offer devices that integrate a RISC CPU core. A general-purpose CPU is best suited for heuristics, complex decision-making, network access, user interface, storage management, and overall control. A general-purpose CPU may be paired with a vision-specialized device for better performance on pixel-level processing.

Graphics Processing Units

High-performance GPUs deliver massive amounts of parallel computing potential, and graphics processors can be used to accelerate the portions of the computer vision pipeline that perform parallel processing on pixel data. While general-purpose GPUs (GPGPUs) have primarily been used for high-performance computing (HPC), even mobile graphics processors and integrated graphics cores are gaining GPGPU capability, which brings GPU acceleration within the power constraints of a wider range of vision applications. In designs that require 3D processing in addition to embedded vision, a GPU will already be part of the system and can be used to assist a general-purpose CPU with many computer vision algorithms. Many examples exist of x86-based embedded systems with discrete GPGPUs.
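To make "parallel processing on pixel data" concrete, here is a minimal sketch in plain Python of a per-pixel kernel. The function names `luma` and `to_grayscale` are illustrative, and the weights are a common 8-bit integer approximation of the BT.601 luma coefficients. Each output depends only on its own input pixel, which is the property that lets a GPU assign one thread per pixel. The code runs sequentially and is not GPU code.

```python
def luma(pixel):
    """Per-pixel kernel: integer approximation of BT.601 luma for an (R, G, B) tuple."""
    r, g, b = pixel
    # Weights sum to 256, so the shift by 8 keeps the result in 0..255.
    return (77 * r + 150 * g + 29 * b) >> 8


def to_grayscale(image):
    """Apply the kernel to every pixel independently.

    No output value depends on any other output, so the iterations could
    run in any order, or all at once on parallel hardware.
    """
    return [[luma(p) for p in row] for row in image]


frame = [
    [(255, 255, 255), (0, 0, 0)],
    [(255, 0, 0), (0, 0, 255)],
]
gray = to_grayscale(frame)
print(gray)  # [[255, 0], [76, 28]]
```

On a GPU, the body of `luma` would roughly correspond to the kernel function, and the two nested loops would be replaced by the thread grid.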

Digital Signal Processors

DSPs are very efficient for processing streaming data, since the bus and memory architecture are optimized to process high-speed data as it traverses the system. This architecture makes DSPs an excellent solution for processing image pixel data as it streams from a sensor source. Many DSPs for vision have been enhanced with coprocessors that are optimized for processing video inputs and accelerating computer vision algorithms. The specialized nature of DSPs makes these devices inefficient for processing general-purpose software workloads, so DSPs are usually paired with a RISC processor to create a heterogeneous computing environment that offers the best of both worlds.
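The streaming style described above can be sketched in plain Python as a small FIR filter that consumes samples one at a time. This is an illustration of the processing pattern only, not of any particular DSP's instruction set; `stream_fir` and the tap values are hypothetical names and numbers chosen for the example. The filter holds only as many samples as it has taps, so the full image never needs to be buffered.

```python
from collections import deque


def stream_fir(samples, taps):
    """Yield one filtered output per incoming sample.

    State is a delay line of len(taps) samples, newest first. Each output
    is a short run of multiply-accumulates over that delay line.
    """
    delay_line = deque([0] * len(taps), maxlen=len(taps))
    for s in samples:
        delay_line.appendleft(s)  # the oldest sample falls off the right end
        yield sum(c * x for c, x in zip(taps, delay_line))


# A row of pixel intensities arriving from a sensor, one value at a time.
row = [1, 2, 3, 4]
print(list(stream_fir(row, [1, 1, 1])))   # [1, 3, 6, 9]   running 3-sample sum
print(list(stream_fir(row, [1, 0, -1])))  # [1, 2, 2, 2]   simple horizontal gradient
```

Because `stream_fir` is a generator, it produces each output as soon as the corresponding input arrives, which mirrors processing pixels as they stream from the sensor.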

Field Programmable Gate Arrays (FPGAs)

Instead of incurring the high cost and long lead times of a custom ASIC to accelerate computer vision systems, designers can use an FPGA as a reprogrammable solution for hardware acceleration. With millions of programmable gates, hundreds of I/O pins, and compute performance in the trillions of multiply-accumulates/sec (tera-MACs), high-end FPGAs offer the potential for the highest performance in a vision system. Unlike a CPU, which has to time-slice or multi-thread tasks as they compete for compute resources, an FPGA has the advantage of being able to simultaneously accelerate multiple portions of a computer vision pipeline. Since the parallel nature of FPGAs offers so much advantage for accelerating computer vision, many of the algorithms are available as optimized libraries from semiconductor vendors. These computer vision libraries also include preconfigured interface blocks for connecting to other vision devices, such as IP cameras.
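To put the tera-MAC figure in context, the rough arithmetic below estimates the multiply-accumulate rate of a single convolution over 1080p video at 30 frames per second. It is a back-of-the-envelope sketch under stated assumptions (stride 1, full-size output, no memory or control overhead), and the function name and the example resolution, frame rate, and channel counts are chosen for illustration rather than taken from any vendor's specification.

```python
def conv_macs_per_second(width, height, fps, kernel=3, in_ch=1, out_ch=1):
    """MACs per second for one stride-1 convolution with a full-size output."""
    macs_per_output = kernel * kernel * in_ch      # work for one output value
    outputs_per_frame = width * height * out_ch    # output values per frame
    return macs_per_output * outputs_per_frame * fps


# One 3x3 filter on a single-channel 1920x1080 stream at 30 fps.
single = conv_macs_per_second(1920, 1080, 30)
# A hypothetical layer: 3 input channels, 32 output channels.
layer = conv_macs_per_second(1920, 1080, 30, in_ch=3, out_ch=32)

print(f"{single / 1e9:.2f} GMAC/s")  # 0.56 GMAC/s
print(f"{layer / 1e12:.3f} TMAC/s")  # 0.054 TMAC/s
```

Even this one hypothetical layer needs roughly 54 billion MACs per second, so a pipeline that stacks many such stages can approach the tera-MAC range.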

Vision-Specific Processors and Cores

Application-specific standard products (ASSPs) are specialized, highly integrated chips tailored for specific applications or application sets. ASSPs may incorporate a CPU, or use a separate CPU chip. By virtue of their specialization, ASSPs for vision processing typically deliver superior cost and energy efficiency compared with other types of processing solutions. Among other techniques, ASSPs deliver this efficiency through the use of specialized coprocessors and accelerators. And, because ASSPs are by definition focused on a specific application, they are usually provided with extensive associated software. This same specialization, however, means that an ASSP designed for vision is typically not suitable for other applications. ASSPs’ unique architectures can also make them more difficult to program than other kinds of processors; some ASSPs are not user-programmable.

Designing with Efinix FPGA DSP Blocks

This blog post was originally published at Efinix’s website. It is reprinted here with the permission of Efinix. Today’s most advanced digital signal processing (DSP) solutions demand speed, adaptability, and precision — and that’s exactly where FPGAs (Field-Programmable Gate Arrays) shine. As a powerful hardware platform, FPGAs deliver exceptional parallel processing capability, high configurability, and

FotoNation and SEMIFIVE Announce Strategic Collaboration for Turnkey Development of TriSilica Perceptual AI Chip Family Using Samsung Foundry

Collaboration to accelerate the commercialization of FotoNation’s ultra-low-power sensor-fusion SoCs for edge AI applications GALWAY, Ireland, and SEOUL, South Korea – May 11th, 2026 – FotoNation Ltd., a European-based Perception Recognition company and SEMIFIVE Inc., a leading global provider of custom AI semiconductor solutions, today announced a strategic collaboration agreement under which SEMIFIVE

Scalable FPGA Prototyping for AI SoCs: Handling Massive Parallelism and Bandwidth

This blog post was originally published at Tessolve’s website. It is reprinted here with the permission of Tessolve. Creating an AI System-on-Chip (SoC) today resembles conducting a thousand musicians playing different melodies at once; each note, or data stream, must align perfectly. As AI workloads become increasingly complex, prototyping these SoCs on FPGA (Field Programmable

NPX6 NPU IP Accelerates Physical AI SoC Performance

To meet the evolving performance and power-efficiency needs of generative AI (GenAI) and Physical AI models targeting for AI-driven SoCs, Synopsys has announced an enhanced version of its silicon-proven ARC® NPX6 NPU IP family of AI accelerators. These enhanced NPU IPs, which are software-compatible with existing NPX6 IP families, include: AI Data Compression: An enhanced

FotoNation Completes a Pre-A Round to Fund the Development of its TriSilica Chip Led by Enterprise Ireland and Silicon Gardens

GALWAY, Ireland, May 7, 2026 /PRNewswire/ — FotoNation® LTD., a Galway-based company, announced it has closed its Pre-A round led by Enterprise-Ireland and Silicon Gardens, with participation from other notable Angels. This capital will accelerate FotoNation’s mission to develop its TriSilica® product: an ultra-low-power, perception-AI chip. Specifically, the funds will be used to progress the completion of the MPW prototype,

Gateworks and NXP Powers the Future of the Industrial Edge with M.2 AI Acceleration Card

This news blog post was originally published at NXP Semiconductors’ website. It is reprinted here with the permission of NXP Semiconductors. Gateworks, an NXP Gold Partner, is a leader in embedded computing solutions for edge AI and industrial connectivity. Bringing decades of experience and deep integration within NXP’s ecosystem, Gateworks delivers USA-made, industrial-grade platforms, complemented

New Synopsys.ai Copilots Deliver 2–5× Faster Chip Design Productivity

AI-assisted semiconductor design and verification is no longer just a concept. It is now a full-fledged reality that is delivering remarkable productivity gains. Five new Synopsys.ai Copilot assistants and advisors are now commercially available, putting expert-level guidance and creativity at engineers’ fingertips. Thousands of users across leading semiconductor companies are already harnessing this first-of-its-kind generative AI technology to

Accelerating AI Innovation at the Edge with the Ara240 Discrete Neural Processing Unit (NPU)

This blog post was originally published at NXP Semiconductors’ website. It is reprinted here with the permission of NXP Semiconductors. As AI workloads grow with larger, multimodal models and on-device generative and agentic AI, edge systems need more than incremental compute. They require dedicated acceleration for real-time performance, lower power, strong data privacy, and scalability. The Ara240

Addressing Technical Challenges in Next-Gen Smart Lock Design

This blog post was originally published at Renesas’ website. It is reprinted here with the permission of Renesas. From Connectivity Standards Alliance’s Matter™ and Aliro™ to Biometric Authentication The growing demand for secure, easy-to-use, and seamlessly integrated access solutions is driving smart locks to the forefront of residential and commercial access control. Expansion of smart home

Modern Trends in Floating-Point

This blog post was originally published at Imagination Technologies’ website. It is reprinted here with the permission of Imagination Technologies. The requirement to support real numbers in computers has existed for as long as computers themselves, yet has always been a more complicated challenge than it at first appears. Why? Because computer-based representations can only represent

Beyond the Bench: Reinventing Embedded Hardware with Grinn

This video was originally published at Peridio’s website. It is reprinted here with the permission of Peridio. In this episode of Beyond the Bench from Peridio, Bill Brock sits down with Robert Otręba, Founder & CEO of Grinn, a Poland-based embedded engineering company operating for nearly 18 years. Robert shares how Grinn grew from a two-person

Cadence and NVIDIA Expand Partnership to Reinvent Engineering for the Age of AI and Accelerated Computing

15 Apr 2026 Expanded collaboration combines agentic AI, physics-based simulation, and digital twins to accelerate engineering and unlock new levels of productivity across semiconductors, physical AI systems and AI factories SAN JOSE, Calif.— At CadenceLIVE Silicon Valley 2026, Cadence (Nasdaq: CDNS) announced an expanded partnership with NVIDIA to deliver accelerated solutions across agentic AI, physics-based simulation

Intel Launches Intel Core Series 3 Processors: Changing the Game for Everyday Computing

Intel® Core™ Series 3 brings advanced features and Intel’s latest architectures to value buyers, commercial and essential edge devices What’s New: Intel® today unveiled its new Intel Core™ Series 3 mobile processors, bringing advanced performance, exceptional battery life, and AI-ready to value buyers, commercial and essential edge devices. Purpose-engineered for value, Intel Core Series 3

What Is MCU-Based Power Management – and Why Does Your Vision System Need It?

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. Key Takeaways: Why e-con Systems’ Darsi Pro is a one-of-a-kind vision solution The role of the MCU in Darsi Pro How Darsi Pro helps smartly manage power on NVIDIA Jetson Orin NX modules Vision systems involve

Neuromorphic Computing Enables Ultra-low Power Edge Devices

This blog post was originally published at Helbling’s website. It is reprinted here with the permission of Helbling. Over the last five years, neuromorphic computing has rapidly advanced through the development of state-of-the-art hardware and software technologies that mimic the information processing dynamics of animal brains. This development provides ultra-low power computation capabilities, especially for edge

Key Trends Shaping the Semiconductor Industry in 2026

This blog post was originally published at HTEC’s website. It is reprinted here with the permission of HTEC. The hardware boom is slowing down. What comes next is a software, power, and inference problem—and most of the industry isn’t ready for any of it. AI chips are now 0.2% of all chips manufactured, but

Texas Instruments, D3 Embedded, Lattice and NVIDIA Show a Practical Radar-Camera Fusion Stack for Robotics

TI’s new application brief and companion demo outline how mmWave radar, camera input, FPGA-based sensor bridging and NVIDIA Holoscan can be combined into a low-latency perception pipeline for humanoids and other autonomous machines. Texas Instruments, D3 Embedded, Lattice Semiconductor and NVIDIA are outlining a concrete radar-camera fusion stack for robotics rather than just talking

Upcoming Webinar on Akida Radar Reference Platform

On April 20, 2026, at 8:00 am PDT (11:00 am EDT), BrainChip will deliver a webinar, “Akida Radar Reference Platform: See the Evolution of Radar Intelligence with AI-Powered Object Classification.” From the event page: Join us on 20 April at 8:00 AM PT for an exclusive deep dive into BrainChip’s Radar Reference Platform - bringing

From Connected to Aware: How PSOC™ Edge Enables the Next Wave of Smart Devices

This blog post was originally published at Infineon’s website. It is reprinted here with the permission of Infineon. Across home, retail, and industry, devices that once followed simple rules are now expected to understand people and context. A thermostat shouldn’t just follow a schedule; it should know if anyone is in the room and choose the preferred

BrainChip Unveils Radar Reference Platform to Bridge the ‘Identification Gap’ in Edge AI

LAGUNA HILLS, Calif. — April 6, 2026 — BrainChip Holdings Ltd (ASX: BRN, OTCQX: BRCHF, BCHPY), the world’s first commercial producer of ultra-low-power, neuromorphic AI technology, today announced the launch of its Radar Reference Platform. This fully validated hardware and AI stack is designed to provide real-time object classification at the edge, solving the critical “identification gap” that limits traditional radar