Processors for Embedded Vision

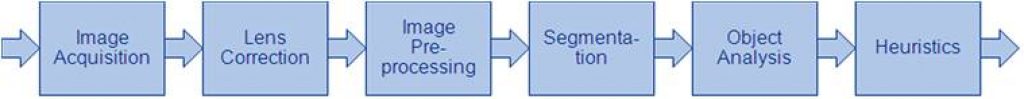

This technology category includes any device that executes vision algorithms or vision system control software. The following diagram shows a typical computer vision pipeline; processors are often optimized for the compute-intensive portions of the software workload.

The following examples represent distinctly different types of processor architectures for embedded vision, and each has advantages and trade-offs that depend on the workload. For this reason, many devices combine multiple processor types into a heterogeneous computing environment, often integrated into a single semiconductor component. In addition, a processor can be accelerated by dedicated hardware that improves performance on computer vision algorithms.

General-purpose CPUs

While computer vision algorithms can run on most general-purpose CPUs, desktop processors may not meet the design constraints of some systems. However, x86 processors and system boards can leverage the PC infrastructure for low-cost hardware and broadly supported software development tools. Several Alliance Member companies also offer devices that integrate a RISC CPU core. A general-purpose CPU is best suited for heuristics, complex decision-making, network access, user interface, storage management, and overall control. A general-purpose CPU may be paired with a vision-specialized device for better performance on pixel-level processing.

Graphics Processing Units

High-performance GPUs deliver massive amounts of parallel computing potential, and graphics processors can be used to accelerate the portions of the computer vision pipeline that perform parallel processing on pixel data. While General Purpose GPUs (GPGPUs) have primarily been used for high-performance computing (HPC), even mobile graphics processors and integrated graphics cores are gaining GPGPU capability, which lets them meet the power constraints of a wider range of vision applications. In designs that require 3D processing in addition to embedded vision, a GPU will already be part of the system and can be used to assist a general-purpose CPU with many computer vision algorithms. Many examples exist of x86-based embedded systems with discrete GPGPUs.
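The pixel-parallel work described above has a simple shape: each output pixel is computed from its own input pixel, with no dependence on any other output. The following is a minimal sketch in plain Python, not GPU code. The names `threshold_pixel` and `threshold_image` are hypothetical, and the sequential loop stands in for what a GPU would typically run as one thread per pixel.

```python
def threshold_pixel(value, cutoff=128):
    """Per-pixel kernel: the result depends only on this pixel's own value."""
    return 255 if value >= cutoff else 0


def threshold_image(image, cutoff=128):
    """Apply the kernel to every pixel of a row-major grayscale image.

    No call reads the result of another call, so the calls could run in
    any order, or all at once on parallel hardware.
    """
    return [[threshold_pixel(v, cutoff) for v in row] for row in image]
```

Calling `threshold_image([[10, 200, 130], [128, 127, 0]])` binarizes a tiny 2x3 frame. Operations with this independence property are the ones that tend to benefit most from moving to a GPU.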

Digital Signal Processors

DSPs are very efficient for processing streaming data, since the bus and memory architecture are optimized to process high-speed data as it traverses the system. This architecture makes DSPs an excellent solution for processing image pixel data as it streams from a sensor source. Many DSPs for vision have been enhanced with coprocessors that are optimized for processing video inputs and accelerating computer vision algorithms. The specialized nature of DSPs makes these devices inefficient for processing general-purpose software workloads, so DSPs are usually paired with a RISC processor to create a heterogeneous computing environment that offers the best of both worlds.
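To illustrate what processing pixel data "as it streams" means, here is a minimal sketch in plain Python of a filter that consumes image rows one at a time and keeps only a small line buffer, rather than waiting for a whole frame. The function name `stream_vertical_average` and the choice of a three-row vertical average are assumptions for illustration; real DSP code would use vendor intrinsics and DMA, which are not shown.

```python
from collections import deque


def stream_vertical_average(rows, taps=3):
    """Yield one filtered row per input row once `taps` rows are buffered.

    `rows` is any iterable of equal-length lists of pixel values, standing
    in for lines arriving from a sensor. Only the last `taps` rows are kept.
    """
    window = deque(maxlen=taps)  # line buffer: the oldest row drops out automatically
    for row in rows:
        window.append(row)
        if len(window) == taps:
            # Average each column across the buffered rows (integer division).
            yield [sum(column) // taps for column in zip(*window)]
```

Because the function is a generator, output rows become available while later input rows are still arriving, and memory use is bounded by `taps` rows regardless of frame height.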

Field Programmable Gate Arrays (FPGAs)

Instead of incurring the high cost and long lead times of a custom ASIC to accelerate computer vision systems, designers can use an FPGA as a reprogrammable solution for hardware acceleration. With millions of programmable gates, hundreds of I/O pins, and compute performance in the trillions of multiply-accumulates per second (tera-MACs), high-end FPGAs offer the potential for the highest performance in a vision system. Unlike a CPU, which has to time-slice or multi-thread tasks as they compete for compute resources, an FPGA can accelerate multiple portions of a computer vision pipeline simultaneously. Because the parallel nature of FPGAs offers such an advantage for accelerating computer vision, many of the algorithms are available as optimized libraries from semiconductor vendors. These computer vision libraries also include preconfigured interface blocks for connecting to other vision devices, such as IP cameras.
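To put the tera-MAC figure in context, a rough budget for a single filter stage can be computed by hand. The sketch below is an idealized count under stated assumptions (stride 1, same-size output, one MAC per kernel tap per pixel) and ignores memory bandwidth; `conv_macs_per_second` is a hypothetical helper, not part of any vendor library.

```python
def conv_macs_per_second(width, height, kernel_size, fps, channels=1):
    """Multiply-accumulates per second for one square 2D convolution.

    Assumes one output per input pixel (stride 1, same-size output) and
    kernel_size * kernel_size MACs per output pixel per channel.
    """
    macs_per_frame = width * height * channels * kernel_size * kernel_size
    return macs_per_frame * fps


if __name__ == "__main__":
    macs = conv_macs_per_second(1920, 1080, kernel_size=3, fps=60)
    print(f"{macs / 1e9:.2f} GMAC/s")  # prints "1.12 GMAC/s"
```

Under these assumptions, one 3x3 filter on a 1080p stream at 60 frames per second needs about 1.12 GMAC/s, so a device in the tera-MAC range has headroom for several hundred such stages running side by side.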

Vision-Specific Processors and Cores

Application-specific standard products (ASSPs) are specialized, highly integrated chips tailored for specific applications or application sets. ASSPs may incorporate a CPU, or use a separate CPU chip. By virtue of their specialization, ASSPs for vision processing typically deliver superior cost- and energy-efficiency compared with other types of processing solutions. Among other techniques, ASSPs deliver this efficiency through the use of specialized coprocessors and accelerators. And, because ASSPs are by definition focused on a specific application, they are usually provided with extensive associated software. This same specialization, however, means that an ASSP designed for vision is typically not suitable for other applications. ASSPs’ unique architectures can also make programming them more difficult than with other kinds of processors; some ASSPs are not user-programmable.

Voyager SDK v1.5.3 is Live, and That Means Ultralytics YOLO26 Support

Voyager v1.5.3 dropped, and Ultralytics YOLO26 support is the big headline here. If you’ve been following Ultralytics’ releases, you’ll know Ultralytics YOLO26 is specifically engineered for edge devices like Axelera’s Metis hardware. Why Ultralytics YOLO26 matters for your projects: The architecture is designed end-to-end, which means no more NMS (non-maximum suppression) post-processing. That translates to simpler deployment and

Free Webinar Highlights Compelling Advantages of FPGAs

On March 17, 2026 at 9 am PT (noon ET), Efinix’s Mark Oliver, VP of Marketing and Business Development, will present the free one-hour webinar “Why your Next AI Accelerator Should Be an FPGA,” organized by the Edge AI and Vision Alliance. Here’s the description, from the event registration page: Edge AI system developers often

Meet MIPS S8200: Real-Time, On-Device AI for the Physical World

This blog post was originally published at MIPS’s website. It is reprinted here with the permission of MIPS. Physical AI is the ability for machines to sense their environment, think locally, act safely, and communicate quickly without waiting on the cloud. In safety-critical scenarios like driver assistance or industrial robotics, milliseconds matter. That’s why MIPS’

The Next Platform Shift: Physical and Edge AI, Powered by Arm

This blog post was originally published at Arm’s website. It is reprinted here with the permission of Arm. The Arm ecosystem is taking AI beyond the cloud and into the real world. As CES 2026 opens, a common thread quickly emerges across the show floor: most of what people are seeing, touching, and experiencing is already built on Arm. Arm-based

STM32MP21x: It’s Never Been More Cost-effective or More Straightforward to Create Industrial Applications with Cameras

This blog post was originally published at STMicroelectronics’ website. It is reprinted here with the permission of STMicroelectronics. ST is launching today the STM32MP21x product line, the most affordable STM32MP2, comprising a single-core Cortex-A35 running at 1.5 GHz and a Cortex-M33 at 300 MHz. It thus completes the STM32MP2 series announced in 2023, which became our first 64-bit MPUs. After the

Upcoming Webinar on Last Mile Logistics

On January 28, 2026, at 11:00 am PST (2:00 pm EST), Alliance Member company STMicroelectronics will deliver a webinar, “Transforming last mile logistics with STMicroelectronics and Point One.” From the event page: Precision navigation is rapidly becoming the standard for last mile delivery vehicles of all types. But what does it truly take to keep

Why Scalable High-Performance SoCs are the Future of Autonomous Vehicles

This blog post was originally published at Texas Instruments’ website. It is reprinted here with the permission of Texas Instruments. Summary: The automotive industry is ascending to higher levels of vehicle autonomy with the help of central computing platforms. SoCs like the TDA5 family offer safe, efficient AI performance through an integrated C7™ NPU and

Getting Started with Edge AI on NVIDIA Jetson: LLMs, VLMs, and Foundation Models for Robotics

This article was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Running advanced AI and computer vision workloads on small, power-efficient devices at the edge is a growing challenge. Robots, smart cameras, and autonomous machines need real-time intelligence to see, understand, and react without depending on the cloud. The NVIDIA

Microchip Expands PolarFire FPGA Smart Embedded Video Ecosystem with New SDI IP Cores and Quad CoaXPress Bridge Kit

Solution stacks deliver broadcast-quality video, SLVS-EC to CoaXPress bridging and ultra-low power operation for next-generation medical, industrial and robotic vision applications CHANDLER, Ariz., January 19, 2025 —Microchip Technology (Nasdaq: MCHP) has expanded its PolarFire® FPGA smart embedded video ecosystem to support developers who need reliable, low-power, high-bandwidth video connectivity. The embedded vision solution stacks combine hardware evaluation kits, development

Quadric, Inference Engine for On-Device AI Chips, Raises $30M Series C as Design Wins Accelerate Across Edge

Tripling product revenues, comprehensive developer tools, and scalable inference IP for vision and LLM workloads, position Quadric as the platform for on-device AI. BURLINGAME, Calif., Jan. 14, 2026 (PRNewswire) — Quadric®, the inference engine that powers on-device AI chips, today announced an oversubscribed $30 million Series C funding round, bringing total capital raised to $72

Quadric’s SDK Selected by TIER IV for AI Processing Evaluation and Optimization, Supporting Autoware Deployment in Next-Generation Autonomous Vehicles

Quadric today announced that TIER IV, Inc., of Japan has signed a license to use the Chimera AI processor SDK to evaluate and optimize future iterations of Autoware, open-source software for autonomous driving pioneered by TIER IV. Burlingame, CA, January 14, 2026 – Quadric today announced that TIER IV, Inc., of Japan has signed a

Deep Learning Vision Systems for Industrial Image Processing

This blog post was originally published at Basler’s website. It is reprinted here with the permission of Basler. Deep learning vision systems are often already a central component of industrial image processing. They enable precise error detection, intelligent quality control, and automated decisions – wherever conventional image processing methods reach their limits. We show how a

Upcoming Webinar on Advances in Automatic Test Pattern Generation

On January 14, 2026, at 7:00 am PST (10:00 am EST), Alliance Member company Synopsys will deliver a webinar, “Advances in ATPG: From Power and Timing Awareness to Intelligent Pattern Search with AI.” From the event page: As System-on-Chip (SoC) designs become increasingly complex, meeting test quality and cost goals requires advances in automatic test

Empowering Professionals and Aspiring Creators, Snapdragon X2 Plus Delivers Multi-day Battery Life, Fast Performance and Advanced AI

Key Takeaways: Snapdragon® X2 Plus transforms every click and every moment for modern professionals, aspiring creators and everyday users, delivering speed, multi-day battery life and built-in AI features. Representing a bold leap forward, the newest entrant in the Snapdragon X Series platform broadens access to the advanced performance and premium experiences consumers and businesses expect, expanding the growing Windows 11 Copilot+ PC community. Snapdragon X2 Plus harnesses the power of the 3rd Gen Qualcomm Oryon™ CPU and an 80 TOPS NPU, with select devices from

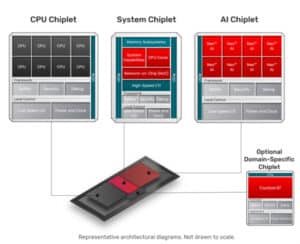

Cadence Launches Partner Ecosystem to Accelerate Chiplet Time to Market

Strategic collaborations with Samsung Foundry, Arm and others enable Cadence to deliver pre-validated chiplet solutions based on the Cadence Physical AI chiplet platform. SAN JOSE, Calif., January 6, 2026 – Cadence (Nasdaq: CDNS) today announced a Chiplet Spec-to-Packaged Parts ecosystem to reduce engineering complexity and accelerate time to market for customers developing chiplets targeting physical

Intel Core Ultra Series 3 Debut as First Built on Intel 18A

Intel ushers in the next generation of AI PCs with exceptional performance, graphics and battery life; available this month Key Takeaways: First platform built on Intel 18A: At CES 2026, Intel launched the Intel® Core™ Ultra Series 3 processors, the first compute platform built on Intel 18A – the most advanced semiconductor process ever developed and

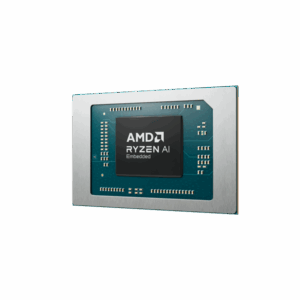

AMD Introduces Ryzen AI Embedded Processor Portfolio, Powering AI-Driven Immersive Experiences in Automotive, Industrial and Physical AI

Key Takeaways: New AMD Ryzen™ AI Embedded P100 and X100 Series processors combine high-performance “Zen 5” CPU cores, an AMD RDNA™ 3.5 GPU and an AMD XDNA™ 2 NPU for low-power AI acceleration Delivers energy-efficient, low-latency AI on a single chip for immersive in-vehicle experiences, industrial automation and physical AI for autonomous systems Launching today,

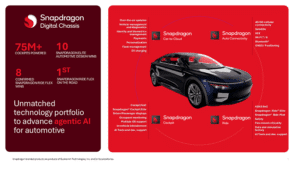

Qualcomm Drives the Future of Mobility with Strong Snapdragon Digital Chassis Momentum and Agentic AI for Major Global Automakers Worldwide

Key Takeaways: Qualcomm extends its automotive leadership with new collaborations, including Google, to power next‑gen software‑defined vehicles and agentic AI‑driven personalization. Snapdragon Ride and Cockpit Elite Platforms, and Snapdragon Ride Flex SoC, see rapid adoption, adding new design-wins and delivering the industry’s first commercialized mixed‑criticality platform that integrates cockpit, advanced driver‑assistance systems, and end‑to‑end AI. Decade of in-vehicle infotainment

SiMa.ai Announces First Integrated Capability with Synopsys to Accelerate Automotive Physical AI Development

San Jose, California – January 6, 2026 – SiMa.ai today announced the first integrated capability resulting from its strategic collaboration with Synopsys. The joint solution provides a blueprint to accelerate architecture exploration and early virtual software development for AI-ready, next-generation automotive SoCs that support applications such as Advanced Driver Assistance Systems (ADAS) and In-Vehicle Infotainment

D3 Embedded Showcases Camera/Radar Fusion, ADAS Cameras, Driver Monitoring, and LWIR solutions at CES

Las Vegas, NV, January 7, 2026 — D3 Embedded is showcasing a suite of technology solutions in partnership with fellow Edge AI and Vision Alliance Members HTEC, STMicroelectronics and Texas Instruments at CES 2026. Solutions include driver and in-cabin monitoring, ADAS, surveillance, targeting and human tracking – and will be viewable at different locations within