Processors for Embedded Vision


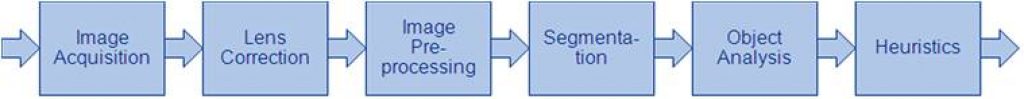

This technology category includes any device that executes vision algorithms or vision system control software. The following diagram shows a typical computer vision pipeline; processors are often optimized for the compute-intensive portions of the software workload.

The following examples represent distinctly different types of processor architectures for embedded vision, and each has advantages and trade-offs that depend on the workload. For this reason, many devices combine multiple processor types into a heterogeneous computing environment, often integrated into a single semiconductor component. In addition, a processor can be accelerated by dedicated hardware that improves performance on computer vision algorithms.

General-purpose CPUs

While computer vision algorithms can run on most general-purpose CPUs, desktop processors may not meet the design constraints of some systems. However, x86 processors and system boards can leverage the PC infrastructure for low-cost hardware and broadly supported software development tools. Several Alliance Member companies also offer devices that integrate a RISC CPU core. A general-purpose CPU is best suited for heuristics, complex decision-making, network access, user interface, storage management, and overall control. A general-purpose CPU may be paired with a vision-specialized device for better performance on pixel-level processing.

Graphics Processing Units

High-performance GPUs deliver massive parallel computing capacity, and graphics processors can be used to accelerate the portions of the computer vision pipeline that perform parallel processing on pixel data. While general-purpose GPUs (GPGPUs) have primarily been used for high-performance computing (HPC), even mobile graphics processors and integrated graphics cores are gaining GPGPU capability, meeting the power constraints of a wider range of vision applications. In designs that require 3D processing in addition to embedded vision, a GPU will already be part of the system and can be used to assist a general-purpose CPU with many computer vision algorithms. Many examples exist of x86-based embedded systems with discrete GPGPUs.
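To illustrate why pixel-level stages map well onto parallel hardware, here is a minimal Python sketch of a per-pixel threshold in which each output pixel depends only on its own input pixel. It is not GPU code: the names `threshold_pixel`, `threshold_row`, and `threshold_image` are hypothetical, and the thread pool merely stands in for parallel hardware lanes.

```python
from concurrent.futures import ThreadPoolExecutor


def threshold_pixel(value, cutoff=128):
    """Binarize one 8-bit pixel; the result depends on no other pixel."""
    return 255 if value >= cutoff else 0


def threshold_row(row, cutoff=128):
    """Apply the per-pixel operation to one image row."""
    return [threshold_pixel(v, cutoff) for v in row]


def threshold_image(image, cutoff=128, workers=4):
    """Process rows independently (assumption: a thread pool models parallel lanes)."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves row order, so the output image lines up with the input.
        return list(pool.map(lambda r: threshold_row(r, cutoff), image))


# Hypothetical 2x4 grayscale image used only for illustration.
image = [
    [0, 100, 128, 255],
    [200, 50, 127, 129],
]
result = threshold_image(image)
print(result)  # [[0, 0, 255, 255], [255, 0, 0, 255]]
```

Because no pixel needs a neighbor's result, the work can be split across as many execution units as the hardware provides, which is the property a GPU exploits.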

Digital Signal Processors

DSPs are very efficient at processing streaming data because their bus and memory architectures are optimized to process high-speed data as it traverses the system. This architecture makes DSPs an excellent solution for processing image pixel data as it streams from a sensor source. Many DSPs for vision have been enhanced with coprocessors that are optimized for processing video inputs and accelerating computer vision algorithms. The specialized nature of DSPs makes these devices inefficient for processing general-purpose software workloads, so DSPs are usually paired with a RISC processor to create a heterogeneous computing environment that offers the best of both worlds.
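The streaming style of processing described above can be sketched in Python as a small finite impulse response (FIR) filter that emits one output per incoming pixel and holds only a few samples in memory. This is a sketch under stated assumptions, not DSP vendor code: the function name `stream_fir`, the tap values, and the sample pixel row are all hypothetical.

```python
from collections import deque


def stream_fir(samples, taps):
    """Yield one filtered value per input sample, buffering only len(taps) samples."""
    # Window starts zero-filled; maxlen makes the oldest sample drop off automatically.
    window = deque([0] * len(taps), maxlen=len(taps))
    for s in samples:
        window.appendleft(s)  # newest sample at index 0
        # One multiply-accumulate per tap, the core operation DSPs are built around.
        yield sum(c * x for c, x in zip(taps, window))


# Hypothetical pixel row with one bright pixel, and an unnormalized smoothing kernel.
pixel_row = [10, 10, 40, 10, 10]
taps = [1, 2, 1]
filtered = list(stream_fir(pixel_row, taps))
print(filtered)  # [10, 30, 70, 100, 70]
```

The generator never sees the whole frame, which mirrors how a streaming architecture can start producing results while pixels are still arriving from the sensor.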

Field Programmable Gate Arrays (FPGAs)

Instead of incurring the high cost and long lead times of a custom ASIC to accelerate computer vision systems, designers can implement an FPGA to offer a reprogrammable solution for hardware acceleration. With millions of programmable gates, hundreds of I/O pins, and compute performance in the trillions of multiply-accumulates per second (tera-MACs), high-end FPGAs offer the potential for the highest performance in a vision system. Unlike a CPU, which has to time-slice or multi-thread tasks as they compete for compute resources, an FPGA can simultaneously accelerate multiple portions of a computer vision pipeline. Because the parallel nature of FPGAs offers such an advantage for accelerating computer vision, many of the algorithms are available as optimized libraries from semiconductor vendors. These computer vision libraries also include preconfigured interface blocks for connecting to other vision devices, such as IP cameras.
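To put the tera-MAC figure in perspective, the following Python sketch estimates the multiply-accumulate rate of a single dense 2D convolution. The workload (1080p monochrome video, 3x3 kernel, 60 frames per second) and the 1 tera-MAC/s budget are hypothetical values chosen only for scale, and `conv_macs_per_second` is an illustrative helper, not part of any vendor library.

```python
def conv_macs_per_second(width, height, kernel_size, fps, channels=1):
    """MACs per second for one dense 2D convolution: one MAC per kernel tap per output pixel."""
    macs_per_frame = width * height * channels * kernel_size * kernel_size
    return macs_per_frame * fps


# Hypothetical workload: 1920x1080 monochrome, 3x3 kernel, 60 frames per second.
rate = conv_macs_per_second(1920, 1080, 3, 60)
print(rate)  # 1119744000, roughly 1.12 giga-MACs per second

# Hypothetical peak budget of 1 tera-MAC/s (10**12 MACs per second).
budget = 10**12
stages_that_fit = budget // rate
print(stages_that_fit)  # 893
```

Under these assumptions, one such filter uses about a thousandth of the peak budget, which suggests why several pipeline stages can run side by side. Peak figures are rarely sustained in practice, since memory bandwidth and routing also constrain throughput, so this is an upper bound rather than a prediction.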

Vision-Specific Processors and Cores

Application-specific standard products (ASSPs) are specialized, highly integrated chips tailored for specific applications or application sets. ASSPs may incorporate a CPU, or use a separate CPU chip. By virtue of their specialization, ASSPs for vision processing typically deliver superior cost- and energy-efficiency compared with other types of processing solutions. Among other techniques, ASSPs deliver this efficiency through the use of specialized coprocessors and accelerators. And, because ASSPs are by definition focused on a specific application, they are usually provided with extensive associated software. This same specialization, however, means that an ASSP designed for vision is typically not suitable for other applications. ASSPs’ unique architectures can also make programming them more difficult than with other kinds of processors; some ASSPs are not user-programmable.

“Speeding Time to Market with Production-Ready Edge AI Solutions: From Wake Word Detection to Face Recognition,” a Presentation from Microchip Technology

Nick De Rosa, Kannan Srinivasagam, Edge AI Marketing Manager at Microchip Technology presents “Speeding Time to Market with Production-Ready Edge AI Solutions: From Wake Word Detection to Face Recognition” at the May 2026 Embedded Vision Summit. Design teams are moving from edge AI evaluation to deployment and need production-ready, system-level…

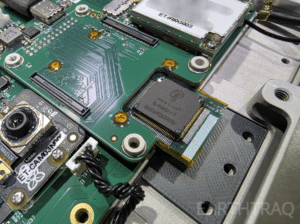

Mentium Technologies’ Luna-R1 AI Chip Selected for ET-01 Constellation Mission, First Multi-Satellite Deployment of Mentium Hardware

Launching on SpaceX Transporter-17 to Enable Autonomous Intelligence Across LEO Satellite Networks Goleta, CA — May 28, 2026 — Mentium Technologies today announced that its next-generation AI processing chip, Luna-R1, has been selected for deployment on the ET-01 mission, a constellation of satellites operating in Low Earth Orbit (LEO). The constellation is being developed by EarthTraq, previously operating in

Axelera AI and Andes Technology Partner to Power Next-Generation “Europa” AI Platform with High-Performance RISC-V AX65 Cores

EINDHOVEN, Netherlands & HSINCHU, Taiwan — June 1, 2026 — Axelera AI, the leading provider of high-performance, ultra-efficient edge AI solutions, and Andes Technology (TWSE: 6533), a premier supplier of high-efficiency 32/64-bit RISC-V processor cores, today announced a strategic partnership. Axelera AI has integrated the AndesCore™ AX65 Out-of-Order RISC-V processor into its newly unveiled Europa AI Processing Unit (AIPU) to provide

MicroIP and BrainChip Announce Strategic Ecosystem Partnership to Deliver Advanced Edge AI Hardware and Software

LAGUNA HILLS, Calif. and HSINCHU CITY, TAIWAN – June 2, 2026 – BrainChip Inc., a leading provider of ultra-low-power, neuromorphic AI semiconductor intellectual property, today announced a strategic eco-system partnership agreement with MicroIP, an expert in hardware and software products, machine learning (ML) applications, models and ASIC designs. Under the terms of the agreement, the two companies

Introducing the Qualcomm Dragonwing IQ10 RRD: A full-stack robotics reference design

A robotics reference design that brings integrated hardware, software and AI tools into one deployment-ready system Key Takeaways: The Dragonwing IQ10 Robotics Reference Design (RRD) aims to help teams move from prototype to production by combining compute, sensing, networking and software in a single deployment-ready system. The platform is designed to deliver up to

Introducing Infineon’s Live Lab

This blog post was originally published at Infineon’s website. It is reprinted here with the permission of Infineon. You have just decided to evaluate one of Infineon’s PSOC™ family of MCUs, be it PSOC™ Edge or PSOC™ Control. But you do not have the time to wait for the kit to arrive. What now? Well,

“Efficient Computer Vision at the Far Edge: Design and Training Under Constraints,” a Presentation from Lattice Semiconductor

Nicolas Widynski, AI Fellow at Lattice Semiconductor presents “Efficient Computer Vision at the Far Edge: Design and Training Under Constraints” at the May 2026 Embedded Vision Summit. This session explores practical strategies for deploying computer vision AI on far-edge devices under strict resource constraints. While highlighting FPGA-specific strengths, such as…

GlobalFoundries completes acquisition of Synopsys’ Processor IP Solutions Business, delivering a holistic technology platform for Physical AI

Combines GF’s Physical AI portfolio with MIPS’ RISC-V and software-to-silicon expertise to accelerate custom, software-first products for automotive, industrial and agentic edge platforms MALTA, N.Y., June 2, 2026 – GlobalFoundries (Nasdaq: GFS) (GF) today announced the completion of its acquisition of Synopsys’ ARC Processor IP Solutions business. Combined with MIPS, by GF, the acquisition

“Why Your Next AI Accelerator Should Be an FPGA,” a Presentation from Efinix

Mark Oliver, VP of Marketing and Business Development at Efinix presents “Why Your Next AI Accelerator Should Be an FPGA” at the May 2026 Embedded Vision Summit. Edge AI system developers often assume that AI workloads require a GPU or NPU. But when cost, latency, complex I/O or tight power…

Running BitNet on Qualcomm Hexagon with custom 1.58 kernels

This blog post was originally published at ENERZAi’s website. It is reprinted here with the permission of ENERZAi. Today, we are excited to share a milestone that our team has been working toward for some time. ENERZAi has successfully deployed BitNet (b1.58) 2B on the Qualcomm QCS6490 Hexagon NPU via QNN! If that sentence felt

GSOC Solutions and Andes Technology Announce Strategic Partnership to Expand RISC-V CPU Options for Configurable SoC and Chiplet Platforms

SAN JOSE, Calif. – May 26, 2026 – GSOC Solutions, a premier ASIC development center located at Tel-Aviv, Israel, and Andes Technology (TWSE: 6533), a leading supplier of high-performance, low-power RISC-V processor cores, announced a strategic partnership. The collaboration will integrate Andes’ full range of RISC-V CPU IP into GSOC’s Ocean™ platform, a configurable SoC

“HiFi iQ: Enabling Voice AI and Immersive Audio for Smart Home, Mobile and Automotive,” a Presentation from Cadence

Amol Borkar, Group Director of Product Management and Marketing, Tensilica DSPs, Silicon Solutions Group (SSG) at Cadence presents “HiFi iQ: Enabling Voice AI and Immersive Audio for Smart Home, Mobile and Automotive” at the May 2026 Embedded Vision Summit. Voice is quickly becoming the primary interface for consumer and enterprise devices,…

Why Vision LLMs Force A Rethink Of Edge AI Hardware

This blog post was originally published at Expedera’s website. It is reprinted here with the permission of Expedera. As vision-centric large language models move on-device, performance measured in raw TOPS is no longer enough. Architectures need to be built around real workloads, memory behavior, and sustained utilization, especially at the edge. Vision LLMs are changing

Gemma 4 on Arm: Accessible, Immediate, Optimized On-device AI to Accelerate the Mobile App Experience

Gemma 4 on Arm brings fast, privacy-preserving, power-efficient AI directly onto Android devices, helping developers deliver richer real-time app experiences to billions of users without relying on the cloud. This blog post was originally published at Arm’s website. It is reprinted here with the permission of Arm. Real-time assistance, seamless communication, and greater personalization are now baseline expectations for billions of smartphone

Building High-Performance Data Paths: New Evaluation Platforms for AI and Vision Systems

Bringing AI and vision designs to life starts with reliable data movement. Learn how our latest evaluation kits help engineers prototype imaging and networking paths early, reduce design risk and move from concept to working system faster. Microchip released two evaluation platforms designed to help developers prototype and benchmark data-intensive systems much earlier in

“Accelerating Deep Learning Models on AMD Adaptive SoCs with the AMD Vitis AI Workflow,” a Presentation from AMD

Thomas Zerbs, Technical Marketing Engineer, Adaptive and Embedded Computing Group at AMD presents “Accelerating Deep Learning Models on AMD Adaptive SoCs with the AMD Vitis AI Workflow” at the May 2026 Embedded Vision Summit. In this presentation, we provide a practical end-to-end overview of the AMD Vitis™ AI workflow for…

Samsung Foundry and Cadence: Accelerating Chiplet Solutions for Physical AI

This blog post was originally published at Samsung Semiconductor’s website. It is reprinted here with the permission of Samsung Semiconductor. Artificial Intelligence (AI) is rapidly expanding beyond digital environments and into the physical world. AI-driven systems that give machines the ability to perceive, comprehend, learn from, and respond to real-time environments are quickly moving from

Analog Devices to Acquire Empower Semiconductor, Expanding its Next-Generation High-Density Power Portfolio for the AI Era

Key Takeaways: Addresses a critical challenge in AI – delivering high-density, energy-efficient compute as power and thermal demands limit system scale Further advances ADI’s position as a leading strategic, system-level grid-to-core power partner for hyperscalers and AI silicon developers Expands ADI’s total addressable market in AI compute power delivery with Integrated Voltage Regulator (IVR) and

The Software Gap Holding AI Back: What Semiconductor Leaders Can Learn From the EV Transition

This blog post was originally published at HTEC’s website. It is reprinted here with the permission of HTEC. The story of EV adoption contains a warning that semiconductor companies would be wise to take seriously. When the automotive industry made the leap from combustion engines to electric drivetrains, it discovered that a transformative technology is not enough on its own. The

Intel Core Ultra Series 3: The New Standard for Edge AI Robotics Compute

By integrating a CPU, GPU, and NPU, Intel Core Ultra Series 3 delivers edge AI power across global use cases—from hospitality and manufacturing to healthcare and education. At 2 a.m., an emergency room nurse orders a latte at a quiet hospital coffee stand. There is no human behind the counter. Instead, a sleek robotic arm