Dear Colleague,

The Edge AI and Vision Alliance is now accepting applications for the 2021 Edge AI and Vision Product of the Year Awards competition. The Edge AI and Vision Product of the Year Awards (an expansion of previous years’ Vision Product of the Year Awards) celebrate the innovation of the industry’s leading companies that are developing and enabling the next generation of edge AI and computer vision products. Winning a Product of the Year award recognizes your leadership in edge AI and computer vision as evaluated by independent industry experts. For more information and to enter, please see the program page.

The holidays are coming fast! In that spirit, we’re delighted to offer you 30% off on two essential resources that will keep you competitive in computer vision and edge AI in 2021:

- 2020 Embedded Vision Summit (On-Demand Edition)

If you want to learn about the hottest topics in practical computer vision and visual AI, straight from the mouths of true experts, you’ll want to check out the Embedded Vision Summit (On-Demand Edition). Get the content and know-how you need to advance your products and career in edge AI, computer vision and sensing. With the 2020 Embedded Vision Summit (On-Demand Edition) you can:

- Gain access to all 75+ talks about the most current topics in the industry

- Watch Over-the-Shoulder Tutorials from NVIDIA, Arrow Electronics, Avnet and LAON PEOPLE

- Learn from panel discussions about two of the hottest topics: opportunities and challenges in edge AI, and the future of image sensors

Register today and get 30% off the normal $149 (+ tax) price using promo code: 20ODSHOLIDAY (promotion expires 1/3/2021)

- Deep Learning for Computer Vision with TensorFlow 2.0 and Keras

In this course, learn to develop CV and edge AI applications using the leading framework for neural network training, design and evaluation.

- 8 practical, knowledge-filled lectures by a Google Developer Expert in TensorFlow

- 6 hands-on labs hosted on Google Colab, where you’ll get to build your own neural networks and see how they work

- As many office-hour sessions and as much email support from our expert instructor as you need to get all your questions answered

Register today and get 30% off the normal $299 (+ tax) price using promo code: TFHOLIDAY30 (promotion expires 1/3/2021)

Brian Dipert

Editor-In-Chief, Edge AI and Vision Alliance

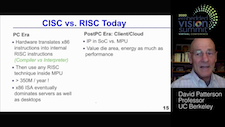

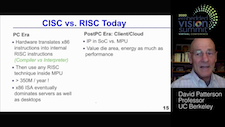

A New Golden Age for Computer Architecture: Processor Innovation to Enable Ubiquitous AI

Paradoxically, processors today are both a key enabler of and a painful obstacle to the widespread use of AI applications. Despite big recent advances in machine learning (ML) processors, many people creating ML algorithms and applications still need much better processors to make their ideas practical, affordable and scalable. What will it take to bring processors to the next level, so that ML-based solutions can be deployed widely? Uniquely qualified to answer these questions is 2020 Embedded Vision Summit keynote speaker David Patterson, UC Berkeley professor of the graduate school, a Google distinguished engineer and the RISC-V Foundation Vice-Chair. In this presentation, Patterson shares his perspective on the past, present, and future of processor design, highlighting key challenges, lessons learned, and the emergence of machine learning as a key driver of processor innovation. Using lessons learned from an earlier revolution in processor architecture, the RISC revolution, Patterson explains why today, the most promising direction in processor design is domain-specific architectures (DSAs) — processors that are optimized for specific types of workloads. To illustrate the concepts and advantages of DSAs, Patterson examines Google’s Tensor Processing Unit (TPU), one of the earliest DSAs to be widely deployed for machine learning applications.

Also see Patterson’s extended Q&A session, “Perspectives On the Past, Present, and Future of Processor Design,” held at the 2020 Embedded Vision Summit with Jeff Bier, founder of the Edge AI and Vision Alliance.

The Future of Image Sensors

Image sensors, coupled with artificial intelligence, are increasingly being used as the “eyes” of machines. This is creating tremendous new opportunities for image sensors — while also radically transforming what system and application developers need from image sensors. In this panel discussion moderated by Shung Chieh, Co-founder and Vice President of Engineering at Solidspac3, a diverse set of experts–Sandor Barna, Vice President of Hardware at Aurora; Boyd Fowler, Chief Technology Officer at OmniVision; Sundar Ramamurthy, Group Vice President and General Manager at Applied Materials; and Ryad Benosman, Professor at the University of Pittsburgh Medical Center, Carnegie Mellon University and Sorbonne Universitas–share their perspectives on the future of image sensors. The panel explores trends such as integrating processors on the same die with sensors; ultra-low-power sensors; and new image sensing approaches, including event-based and neuromorphic sensing, new techniques for optical depth sensing, and hyperspectral imaging.

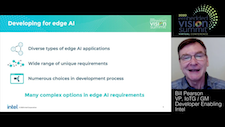

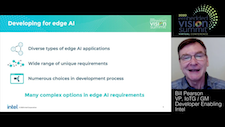

Streamline, Simplify, and Solve for the Edge of the Future

Today, edge AI is changing our world — whether it’s smart lab instruments enabling faster blood tests, autonomous drones inspecting wind generators, or AI coaches motivating us to exercise better. But developing AI-enabled edge devices is tough. Due to the diversity of edge AI applications, there’s no “one size fits all” solution; developers must navigate among numerous choices in algorithms, sensors, processors and development tools. And, developers must deliver highly efficient systems to meet the demanding cost, power consumption and size requirements of edge devices. In this general session talk from the 2020 Embedded Vision Summit, Bill Pearson, Vice President of the Internet of Things Group and General Manager of Developer Enabling at Intel, identifies the most important challenges facing edge AI developers today, which center around complex design and development processes. He shares Intel’s vision for how the industry must advance in order to enable edge AI to reach its true potential — and what Intel is doing to achieve this vision.

Opportunities and Challenges in Realizing the Potential for AI at the Edge

The opportunity for edge AI solutions at scale is massive—and is expected to eclipse cloud-based approaches in the next few years. But to realize this potential, we must find ways to simplify and democratize the development and deployment of edge AI systems. What are the most critical gaps that must be filled to streamline edge AI development and deployment? What are the most promising recent innovations in this space? What should “DevOps for edge AI” look like? In this panel discussion moderated by Samir Kumar, Managing Director at Microsoft M12, you’ll hear perspectives on these and related questions from leading experts who are enabling the next generation of edge AI solutions: Pete Warden, Staff Research Engineer at Google; Stevie Bathiche, Technical Fellow at Microsoft; Steve Teig, CEO at Perceive; and Luis Ceze, Professor at the University of Washington and Co-founder and CEO of OctoML.

Intel DevCloud for the Edge (Best Developer Tool)

Intel’s DevCloud for the Edge is the 2020 Edge AI and Vision Product of the Year Award winner in the Developer Tools category. The Intel DevCloud for the Edge allows you to virtually prototype and experiment with AI workloads for computer vision on the latest Intel edge inferencing hardware, with no hardware setup required since the code executes directly within the web browser. You can test the performance of your models using the Intel Distribution of OpenVINO Toolkit and combinations of CPUs, GPUs, VPUs and FPGAs. The site also contains a series of tutorials and examples preloaded with everything needed to quickly get started, including trained models, sample data and executable code from the Intel Distribution of OpenVINO Toolkit as well as other deep learning tools. Please see here for more information on Intel and its DevCloud for the Edge.
