Andes Technology Expands Comprehensive AndeSentry Security Suite with Complete Trusted Execution Environment Support for Embedded Systems

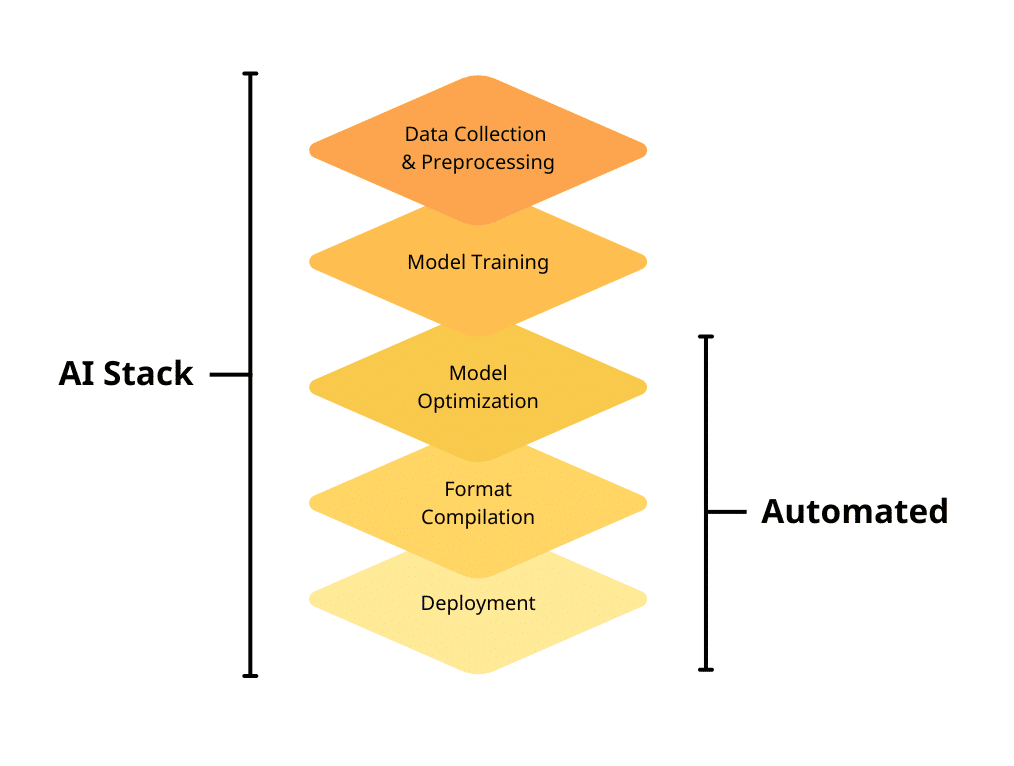

Includes IOPMP, Secure Boot, MCU-TEE for RTOS, and OP-TEE for Linux to Protect Devices from MCUs to Edge AI Processors

Hsinchu, Taiwan – October 6th, 2025 – Andes Technology Corporation, the leading supplier of high-efficiency, low-power 32/64-bit RISC-V processor cores, today announced the latest AndeSentry™ Framework with two new components, Secure Boot v1.0.1 and MCU-TEE […]