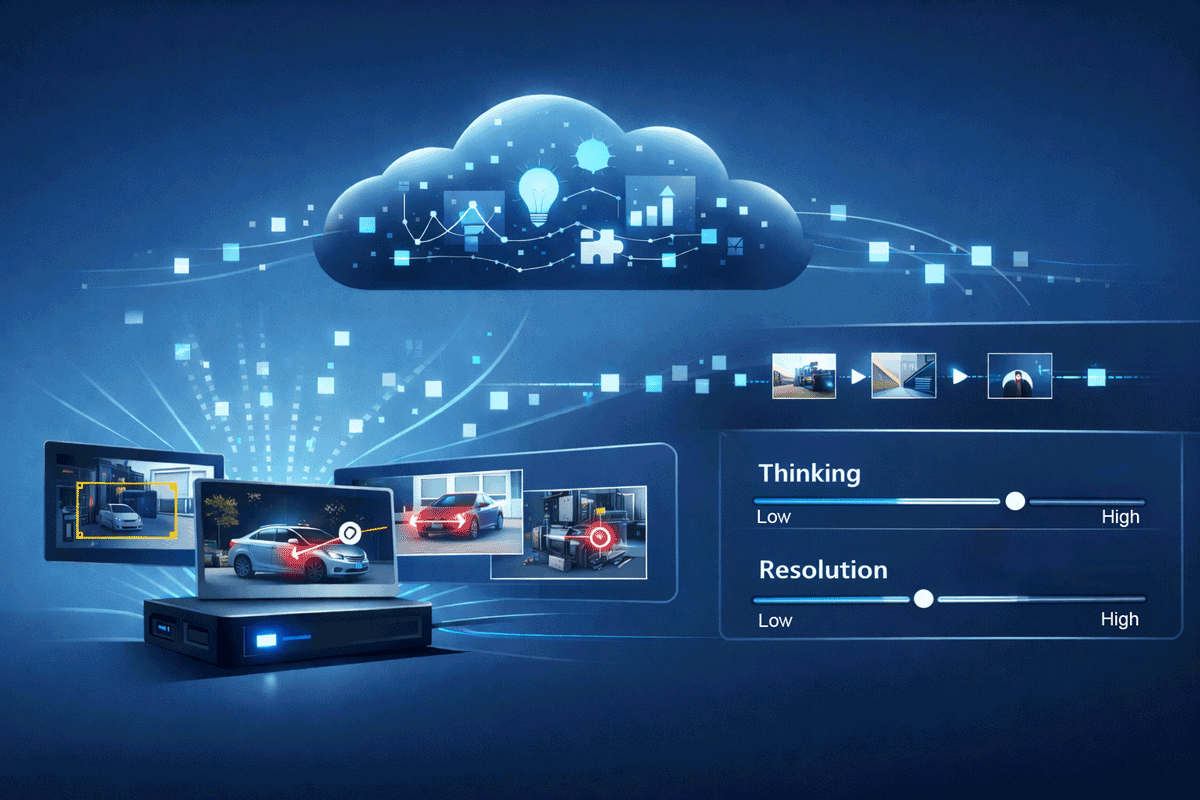

Ambarella Launches a Developer Zone to Broaden its Edge AI Ecosystem

SANTA CLARA, Calif., Jan. 6, 2026 — Ambarella, Inc. (NASDAQ: AMBA), an edge AI semiconductor company, today announced during CES the launch of its Ambarella Developer Zone (DevZone). Located at developer.ambarella.com, the DevZone is designed to help Ambarella’s growing ecosystem of partners learn, build and deploy edge AI applications on a variety of edge systems with greater speed and clarity. It […]
