Gesture Control Functions

Introducing TENN: Revolutionizing Computing with an Energy Efficient Transformer Replacement

This blog post was originally published at BrainChip’s website. It is reprinted here with the permission of BrainChip. TENN, or Temporal Event-based Neural Network, is redefining the landscape of artificial intelligence by offering a highly efficient alternative to traditional transformer models. Developed by BrainChip, this technology aims to address the substantial energy and computational demands

The Autonomous Home Has Arrived: Experience What’s Possible at CES 2024

This blog post was originally published at NXP Semiconductors’ website. It is reprinted here with the permission of NXP Semiconductors. As we enter 2024, the smart home is ready to enter a new era. Thanks to two overlapping trends — the arrival of Matter, the interoperability standard from the Connectivity Standards Alliance (CSA), and the

Future Automotive Technologies Represent a US$1.6 Trillion Opportunity in 2034

For more information, visit https://www.idtechex.com/en/research-report/future-automotive-technologies-2024-2034-applications-megatrends-forecasts/979. The automotive megatrends that will define the future of vehicles: autonomous driving, mobility as a service, electric vehicles, connected cars, software-defined vehicles, everything-as-a-service, and more. Autonomous driving technologies and vehicle electrification are megatrends that are reshaping the automotive industry. In addition to these, connected and software-defined vehicles have recently

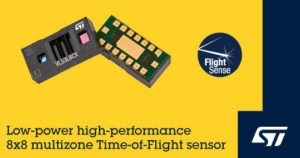

Next-generation Multizone Time-of-flight Sensor from STMicroelectronics Boosts Ranging Performance and Power Saving

Target applications include human-presence sensing, gesture recognition, robotics, and other industrial uses. Dec 14, 2023 – Geneva, Switzerland – STMicroelectronics’ VL53L8CX, the latest-generation 8×8 multizone time-of-flight (ToF) ranging sensor, delivers a range of improvements including greater ambient-light immunity, lower power consumption, and enhanced optics. ST’s direct-ToF sensors combine a 940nm vertical cavity surface emitting laser

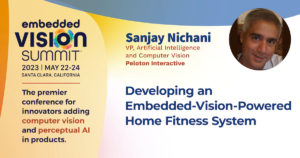

“Developing an Embedded Vision AI-powered Fitness System,” a Presentation from Peloton Interactive

Sanjay Nichani, Vice President for Artificial Intelligence and Computer Vision at Peloton Interactive, presents the “Developing an Embedded Vision AI-powered Fitness System” tutorial at the May 2023 Embedded Vision Summit. The Guide is Peloton’s first strength-training product that runs on a physical device and also the first that uses AI technology. It turns any TV

DeGirum Demonstration of Streaming Edge AI Development and Deployment

Konstantin Kudryavtsev, Vice President of Software Development at DeGirum, demonstrates the company’s latest edge AI and vision technologies and products at the September 2023 Edge AI and Vision Alliance Forum. Specifically, Kudryavtsev demonstrates streaming edge AI development and deployment using the company’s JavaScript and Python SDKs and its cloud platform. On the software front, DeGirum

DeGirum Demonstration of Running Multiple Object Detection Models in Parallel on DeGirum ORCA

Shashi Chilappagari, Co-Founder and Chief Architect of DeGirum, demonstrates the company’s latest edge AI and vision technologies and products at the 2023 Embedded Vision Summit. Specifically, Chilappagari demonstrates DeGirum’s hardware (ORCA) and software (PySDK). Chilappagari demonstrates a use case in which three YOLOv5-based object detection models (hand detection, face detection, and person detection) are running

BrainChip Demonstration of Vision and Edge Learning Solutions for Event- and Frame-based Cameras

Nikunj Kotecha, Solutions Architect at BrainChip, demonstrates the company’s latest edge AI and vision technologies and products at the 2023 Embedded Vision Summit. Specifically, Kotecha demonstrates BrainChip’s brain-inspired Akida platform. With the Akida platform, AI applications are free from the tethers of the cloud and can learn independently. Whether recognizing faces in conventional webcam images

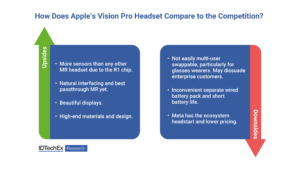

What Apple’s Vision Pro Mixed Reality Headset Does Differently

On June 5th, Apple ended years of speculation and finally announced a mixed reality (MR) headset. The US$3499 Vision Pro headset confirmed some expectations and confounded others. IDTechEx has been tracking the mixed, augmented and virtual reality markets since 2015, with reports on headsets and accessories and optics available now and a report on displays

DeGirum Demonstration of Machine Learning Model Multiplexing on ORCA

Shashi Chilappagari, Co-Founder and Chief Architect at DeGirum, demonstrates the company’s latest edge AI and vision technologies and products at the March 2023 Edge AI and Vision Innovation Forum. Specifically, Chilappagari demonstrates machine learning model multiplexing on the company’s ORCA AI hardware accelerator. Developing real-world edge AI applications often requires using multiple machine learning (ML)

CEVA Introduces UWB Radar Platform for Automotive Child Presence Detection to Meet Emerging Safety Specifications

RivieraWaves UWB Radar delivers robust sensing of object movements and breathing micro-movements for a wide suite of applications, including automotive child presence detection and gesture control, cot infant monitoring, and power-saving presence detection in laptops, TVs and smart buildings. ROCKVILLE, MD, March 09, 2023 – CEVA, Inc. (NASDAQ: CEVA), the leading licensor of wireless connectivity and

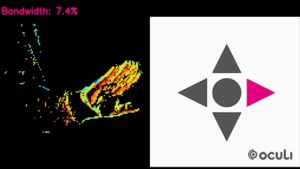

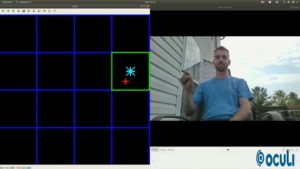

Oculi Demonstration of Its Sensing and Processing Unit

Chad Howard, Lead Applications Engineer at Oculi, demonstrates the company’s latest edge AI and vision technologies and products at the September 2022 Edge AI and Vision Innovation Forum. Specifically, Howard demonstrates the company’s Sensing and Processing Unit (SPU). The SPU is capable of performing tasks at the pixel level and at the edge—data doesn’t need

Luxonis Demonstration of Landmark Detection via Handtracking

Erik Kokalj, Director of Applications Engineering at Luxonis, demonstrates the company’s latest edge AI and vision technologies and products at the 2022 Embedded Vision Summit. Specifically, Kokalj demonstrates specialized camera technology from Luxonis, which melds on-device artificial intelligence and computer vision with stereo depth. Kokalj shows how the camera captures real-time 3D coordinates of his

“Combining Ultra-low-power Proximity Sensing and Ranging to Enable New Applications,” a Presentation from STMicroelectronics

Armita Abadian, Senior Technical Marketing Manager for Imaging in the Americas at STMicroelectronics, presents the “Combining Ultra-low-power Proximity Sensing and Ranging to Enable New Applications” tutorial at the May 2022 Embedded Vision Summit. Time-of-flight (ToF) sensors are widely used to provide depth maps, which enable machines to understand their surroundings in three dimensions for applications

May 2022 Embedded Vision Summit Slides

The Embedded Vision Summit was held on May 16-19, 2022 in Santa Clara, California, as an educational forum for product creators interested in incorporating visual intelligence into electronic systems and software. The presentations delivered at the Summit are listed below. All of the slides from these presentations are included in…

STMicroelectronics Reveals Affordable and Turnkey STGesture Recognition for Touchless Control in Diverse Applications

Dedicated FlightSense™ software package enables low-power, low-cost gesture sensing. Full privacy, thanks to camera-free solution leveraging ToF multi-zone ranging sensor. 25 Apr 2022 | Geneva | STMicroelectronics (NYSE: STM), a global semiconductor leader serving customers across the spectrum of electronics applications, has launched a solution for touchless gesture-based controls in simple, cost-conscious consumer and industrial

Oculi’s IntelliPixel Technology Enables Real-time Gesture Control for Interactive Displays and XR

This video was originally published at Oculi’s YouTube channel. It is reprinted here with the permission of Oculi. Users have been longing to experience the new touchless interactive technology on their devices. Whether it’s hand tracking, hand posture, face detection or gaze detection, they all require real-time performance while operating with limited resources. OCULI SPU™

Oculi Enables Near-zero Lag Performance with an Embedded Solution for Gesture Control

Immersive extended reality (XR) experiences let users seamlessly interact with virtual environments. These experiences require real-time gesture control and eye tracking while running in resource-constrained environments such as on head-mounted displays (HMDs) and smart glasses. These capabilities are typically implemented using computer vision technology, with imaging sensors that generate lots of data to be moved