Vision Algorithms for Embedded Vision

Most computer vision algorithms were developed on general-purpose computer systems with software written in a high-level language. Some of the pixel-processing operations (e.g., spatial filtering) have changed very little in the decades since they were first implemented on mainframes. With today’s broader embedded vision implementations, existing high-level algorithms may not fit within the system constraints, requiring innovation to achieve the desired results.
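As a concrete illustration of the spatial filtering mentioned above, the following minimal Python sketch applies a 3x3 mean (box) filter to a small grayscale image. The function name `box_filter_3x3`, the list-of-rows image representation, and the choice to copy border pixels unchanged are assumptions made for illustration; they do not come from any particular library.

```python
def box_filter_3x3(image):
    """Return a 3x3 mean-filtered copy of a grayscale image.

    `image` is a list of rows of integer pixel values (hypothetical format).
    Border pixels are copied unchanged; that border policy is an assumption.
    """
    height = len(image)
    width = len(image[0]) if height else 0
    out = [row[:] for row in image]  # start from a copy so borders are kept
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            total = 0
            # Sum the pixel and its eight neighbors.
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    total += image[y + dy][x + dx]
            out[y][x] = total // 9  # integer mean of the 3x3 window
    return out


if __name__ == "__main__":
    noisy = [
        [10, 10, 10, 10],
        [10, 100, 10, 10],
        [10, 10, 10, 10],
    ]
    for row in box_filter_3x3(noisy):
        print(row)
```

Every output pixel depends only on a fixed local window of input pixels, which is one reason operations like this have stayed stable for decades and parallelize readily.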

Some of this innovation may involve replacing a general-purpose algorithm with a hardware-optimized equivalent. With such a broad range of processors for embedded vision, algorithm analysis will likely focus on ways to maximize pixel-level processing within system constraints.
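One common form such a hardware-optimized replacement can take is swapping floating-point arithmetic for fixed-point integer arithmetic. The sketch below, offered as an illustrative assumption rather than a method described in this section, converts RGB to grayscale two ways: with the familiar BT.601 floating-point luma weights, and with 8-bit integer weights (77, 150, 29, which sum to 256) followed by a right shift. The function names are hypothetical.

```python
def gray_float(r, g, b):
    """General-purpose version: BT.601 luma weights in floating point."""
    return round(0.299 * r + 0.587 * g + 0.114 * b)


def gray_fixed(r, g, b):
    """Fixed-point version: weights scaled by 256, then shifted right by 8.

    Uses only integer multiplies, adds, and a shift. The result is
    truncated rather than rounded, so it can differ slightly from
    gray_float.
    """
    return (77 * r + 150 * g + 29 * b) >> 8


if __name__ == "__main__":
    for rgb in [(255, 255, 255), (255, 0, 0), (12, 200, 90)]:
        print(rgb, gray_float(*rgb), gray_fixed(*rgb))
```

The integer version needs only multiplies, adds, and a shift, which tends to map well onto DSPs and FPGA fabric; the cost is a result that may differ from the rounded floating-point value by one gray level.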

This section refers to both general-purpose operations (e.g., edge detection) and hardware-optimized versions (e.g., parallel adaptive filtering in an FPGA). Many sources exist for general-purpose algorithms. The Embedded Vision Alliance is one of the best industry resources for learning about algorithms that map to specific hardware, since Alliance Members will share this information directly with the vision community.

General-purpose computer vision algorithms

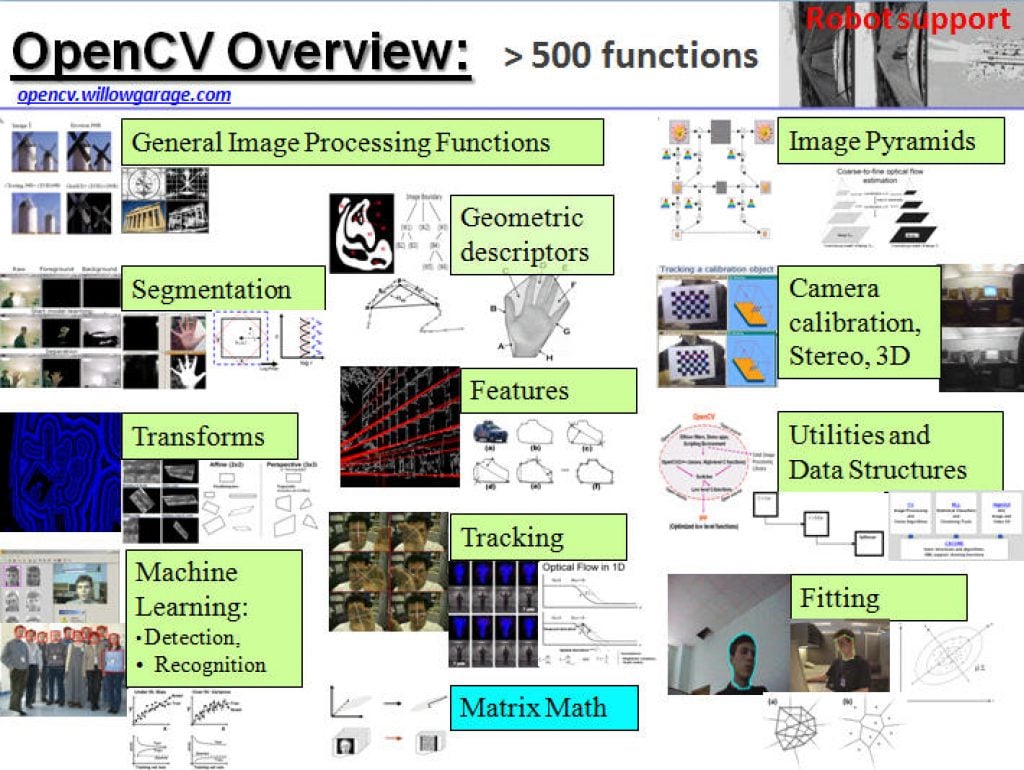

One of the most popular sources of computer vision algorithms is the OpenCV Library. OpenCV is open source and written primarily in C++, with interfaces for other languages such as Python. For more information, see the Alliance’s interview with OpenCV Foundation President and CEO Gary Bradski, along with other OpenCV-related materials on the Alliance website.

Hardware-optimized computer vision algorithms

Several programmable device vendors have created optimized versions of off-the-shelf computer vision libraries. NVIDIA, for example, works closely with the OpenCV community and has created algorithms that are accelerated by GPGPUs. MathWorks provides MATLAB functions/objects and Simulink blocks for many computer vision algorithms within its Vision System Toolbox, while also allowing vendors to create their own libraries of functions that are optimized for a specific programmable architecture. National Instruments offers its LabVIEW Vision module library. Xilinx likewise provides customers with an optimized computer vision library in the form of Plug and Play IP cores for creating hardware-accelerated vision algorithms in an FPGA.

Other vision libraries

- Halcon

- Matrox Imaging Library (MIL)

- Cognex VisionPro

- VXL

- CImg

- Filters

“Efficient Computer Vision at the Far Edge: Design and Training Under Constraints,” a Presentation from Lattice Semiconductor

Nicolas Widynski, AI Fellow at Lattice Semiconductor, presents “Efficient Computer Vision at the Far Edge: Design and Training Under Constraints” at the May 2026 Embedded Vision Summit. This session explores practical strategies for deploying computer vision AI on far-edge devices under strict resource constraints. While highlighting FPGA-specific strengths, such as…

Running BitNet on Qualcomm Hexagon with custom 1.58 kernels

This blog post was originally published at ENERZAi’s website. It is reprinted here with the permission of ENERZAi. Today, we are excited to share a milestone that our team has been working toward for some time. ENERZAi has successfully deployed BitNet (b1.58) 2B on the Qualcomm QCS6490 Hexagon NPU via QNN! If that sentence felt

Why Vision LLMs Force A Rethink Of Edge AI Hardware

This blog post was originally published at Expedera’s website. It is reprinted here with the permission of Expedera. As vision-centric large language models move on-device, performance measured in raw TOPS is no longer enough. Architectures need to be built around real workloads, memory behavior, and sustained utilization, especially at the edge. Vision LLMs are changing

“What We Learned Porting to OpenCV 5 with Claude Code,” a Presentation from Boston.AI

Mark Antonelli, CTO at Boston.AI, presents “What We Learned Porting to OpenCV 5 with Claude Code” at the May 2026 Embedded Vision Summit. OpenCV 5 introduces significant architectural changes to improve vision performance and better utilize modern hardware. In addition to support for new features like vision-language models, there are…

Gemma 4 on Arm: Accessible, Immediate, Optimized On-device AI to Accelerate the Mobile App Experience

Gemma 4 on Arm brings fast, privacy-preserving, power-efficient AI directly onto Android devices, helping developers deliver richer real-time app experiences to billions of users without relying on the cloud. This blog post was originally published at Arm’s website. It is reprinted here with the permission of Arm. Real-time assistance, seamless communication, and greater personalization are now baseline expectations for billions of smartphone

“Edge-First Coding Agents: Trustworthy Agentic Development for Real Devices,” a Presentation from Ambarella

Pietro Antonio Cicalese, Senior Technical Marketing Engineer at Ambarella, presents “Edge-First Coding Agents: Trustworthy Agentic Development for Real Devices” at the May 2026 Embedded Vision Summit. Coding agents are usually built as cloud-first abstractions. But for developing trustworthy, production-ready edge systems, we’ve found that coding agents should be designed from…

How to Install OpenClaw and Hermes Agent on Qualcomm Arduino Boards, Rubik Pi 3 and Snapdragon PCs

This blog post was originally published at Qualcomm’s website. It is reprinted here with the permission of Qualcomm. A Step-by-Step Guide with Arduino UNO Q, Rubik Pi 3, and PCs with Snapdragon. Introduction: Welcome, developers and tech enthusiasts! This blog post will guide you through running OpenClaw and Hermes Agent on Qualcomm Technologies’ platforms,

ModelCat Selected for 2026 Amazon Devices Climate Tech Accelerator

Company will collaborate with Amazon and Plug and Play Tech Center to evaluate edge AI deployment pathways that can help reduce device energy consumption and carbon impact Sunnyvale, California — May 20, 2026 — ModelCat today announced that it has been selected to participate in the 2026 Amazon Devices Climate Tech Accelerator, a program developed

Upcoming Webinar on Building Your First Real-World AI Tool

On May 21, 2026, at 1:00 pm EDT (10:00 am PDT), Boston.AI will deliver a webinar, “What It Takes to Build Your First Real-World AI Tool.” From the event page: Most AI talks focus on what’s possible. This one shows how to actually use AI in product development, even if you’re just getting started. In

Synetic Debuts LYNX Computer Vision SDK at the 2026 Embedded Vision Summit

New SDK helps teams add real-time vision capabilities to robotics, industrial automation, security and edge AI systems with faster deployment, flexible integration and continuous model improvement REDMOND, Wash. — May 11, 2026 — Synetic, Inc., a provider of computer vision and synthetic data solutions for real-world AI systems, today announced the debut of LYNX, a

Everything Is Going to Be Driven by Algorithms

This blog post was originally published at Ambarella’s website. It is reprinted here with the permission of Ambarella. A security camera on a warehouse loading dock captures 86,400 seconds of video every day. A fleet telematics recorder on a long-haul truck accumulates gigabytes of road footage between fuel stops. A surgical robot’s stereo cameras generate

Airy3D Announces Support for MediaTek Genio SoCs for Edge 3D Vision Applications

Montreal, Canada – May 11, 2026 – Airy3D today announced that its DepthIQ™ SDK is supported on the MediaTek Genio Series of System-on-Chips, enabling compact and cost-efficient passive 3D vision solutions for embedded AI vision applications across robotics, industrial, retail, and smart devices. Airy3D’s DepthIQ technology enables simultaneous capture of high-quality 2D images and depth

Face Super Resolution for Better Video Experiences

This blog post was originally published at Visidon’s website. It is reprinted here with the permission of Visidon. Video has become the primary medium for communication — from hybrid meetings to live events and social media. At the same time, expectations have risen. Faces need to look sharp, expressive, and natural — even when captured from

On-Demand Webinar on Deploying Computer Vision and LLMs at the Edge

Check out this recent webinar from Boston.AI, “Reality Check: Deploying Computer Vision and LLMs at the Edge.” From the event page: AI workloads are moving closer to where data is generated — but how close can we really get? This webinar from ICS and Boston AI explores the evolving landscape of deploying advanced AI models,

Case Study: How an Enterprise Tech Team Went from Dozens to 2,000+ Fine-Tuning Configurations

This blog post was originally published in expanded form at RapidFire AI’s website. It is reprinted here with the permission of RapidFire AI. The Use Case: An AI-forward team at a Fortune 500 enterprise tech company builds intelligent autocomplete for enterprise form data entry: predicting what a user will select next across product dimensions, pricing fields,

Nota AI Wins Grand Prize at NVIDIA Nemotron Hackathon, Proving MoE Quantization Prowess with Synthetic Data Technology

Took 1st place in Track C and Grand Prize among all 20 competing teams with synthetic data generation technology specialized for MoE quantization Built a dataset using an agent based on Nemotron 3 Super120B, presenting a data-centric rather than algorithm-centric optimization approach SEOUL, South Korea, April 24, 2026 /PRNewswire/ — Nota AI, a leading AI model compression and optimization company,