D3 Embedded Showcases Camera/Radar Fusion, ADAS Cameras, Driver Monitoring, and LWIR Solutions at CES

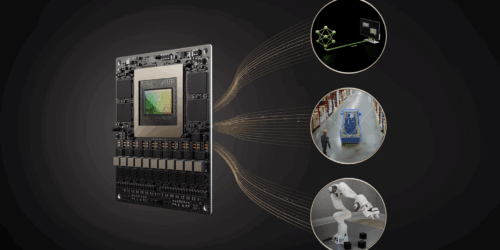

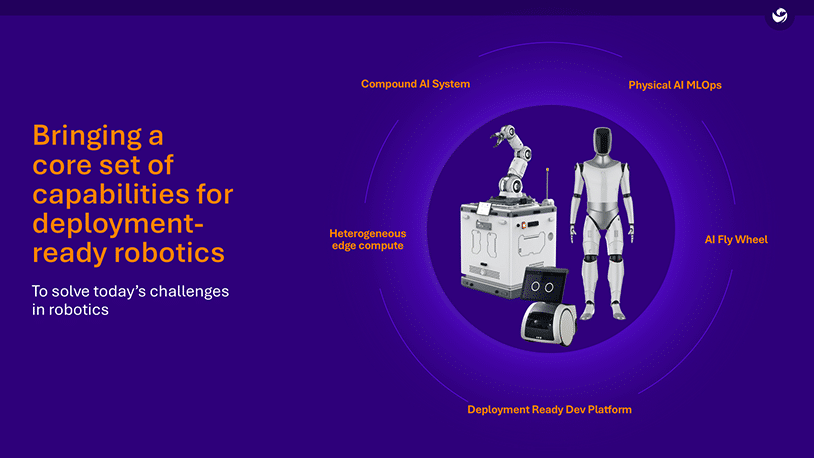

Las Vegas, NV, January 7, 2026 – D3 Embedded is showcasing a suite of technology solutions at CES 2026 in partnership with fellow Edge AI and Vision Alliance Members HTEC, STMicroelectronics and Texas Instruments. The solutions cover driver and in-cabin monitoring, ADAS, surveillance, targeting and human tracking, and will be viewable at different locations within […]