Using Xilinx FPGAs to Solve Endoscope System Architecture Challenges

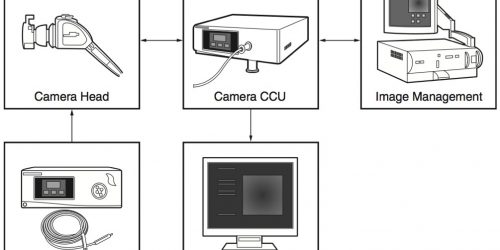

By Jon Alexander, Technical Marketing Manager for ISM (Industrial, Scientific, Medical) Markets, Xilinx Corporation

Image enhancement functions (noise reduction, edge enhancement, dynamic range correction, digital zoom, scaling, etc.) are key elements of many embedded vision designs, improving the ability of downstream algorithms to automatically extract meaning from the image. Interface flexibility and […]
