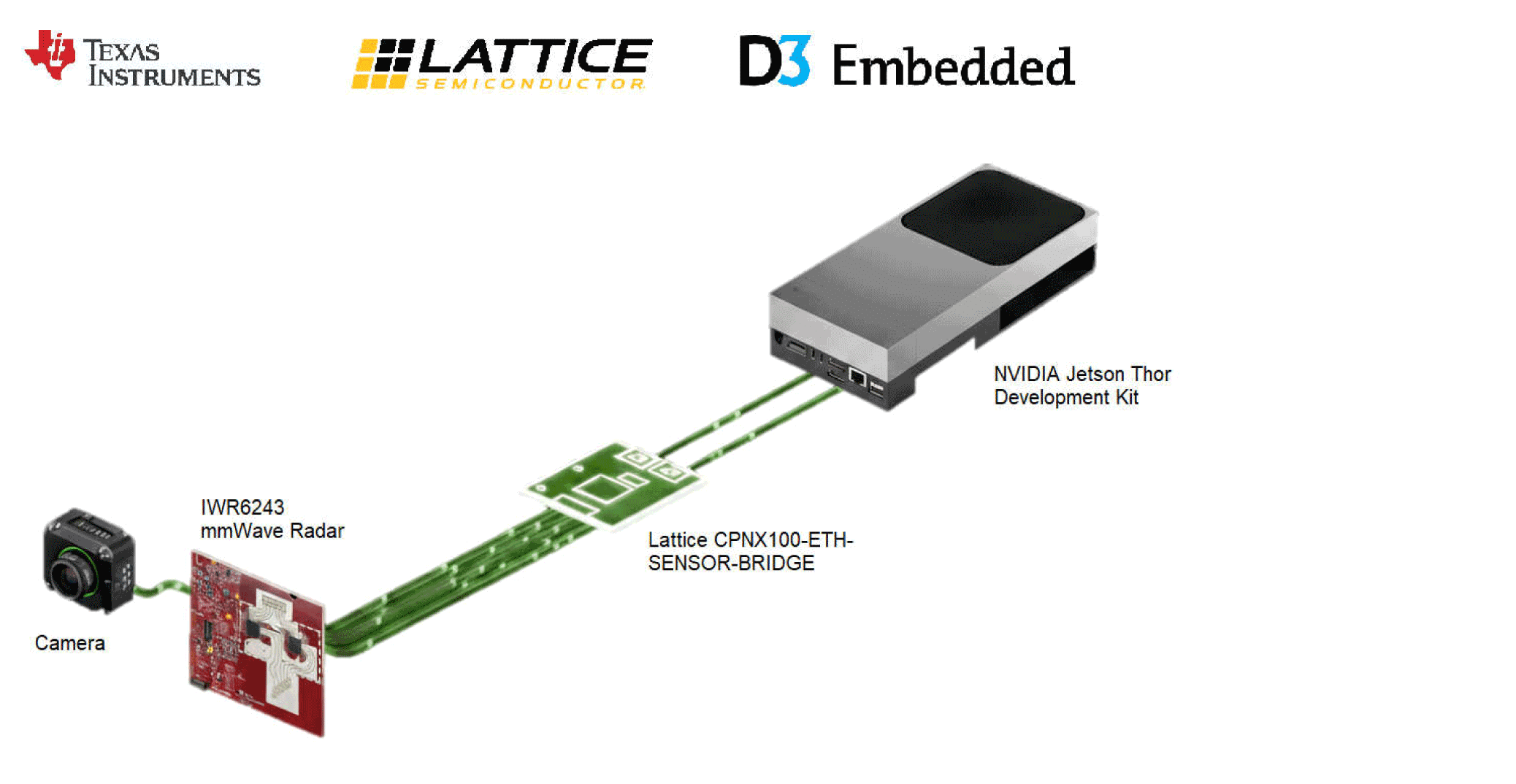

Texas Instruments, D3 Embedded, Lattice and NVIDIA Show a Practical Radar-Camera Fusion Stack for Robotics

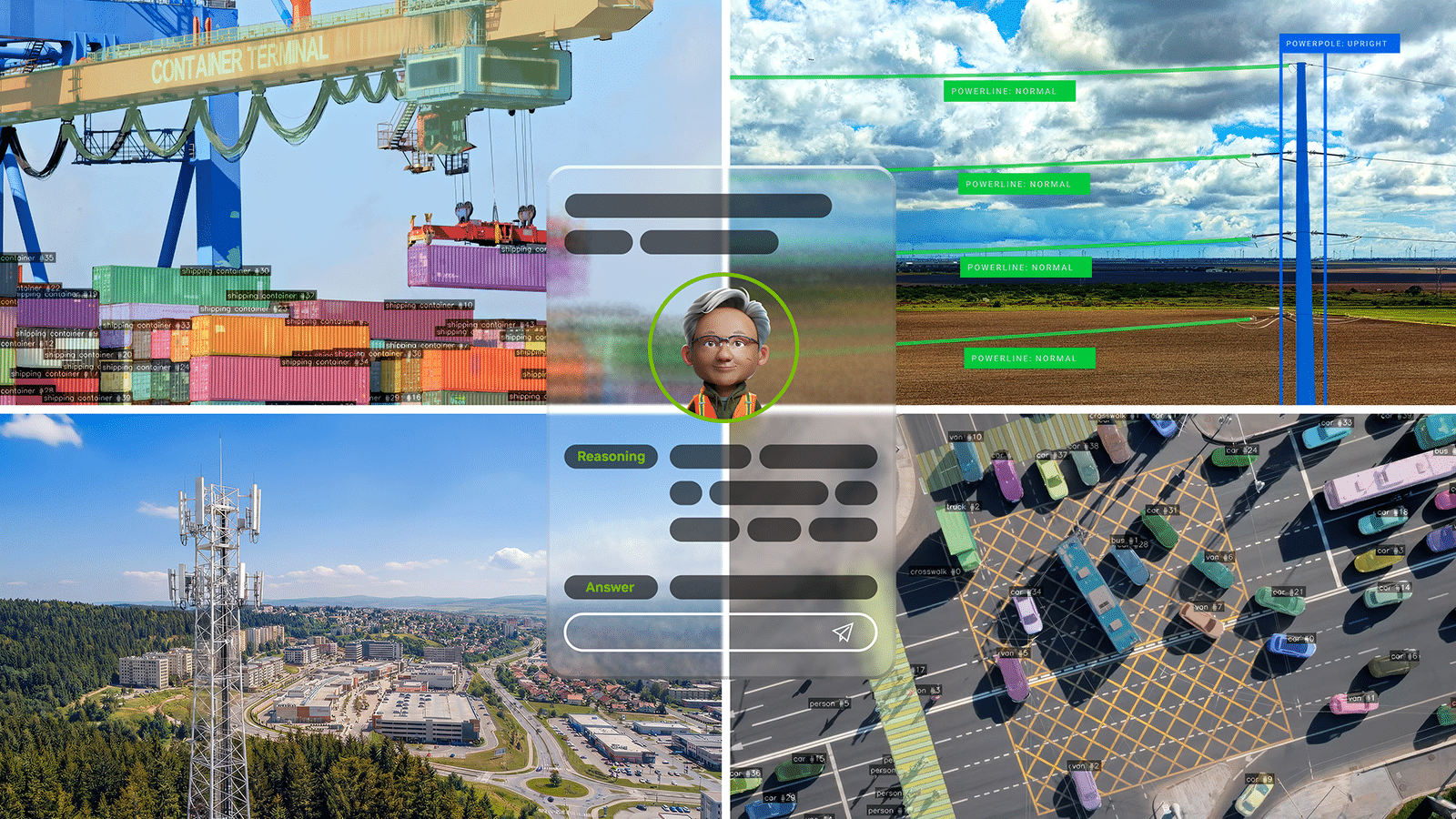

TI’s new application brief and companion demo outline how mmWave radar, camera input, FPGA-based sensor bridging and NVIDIA Holoscan can be combined into a low-latency perception pipeline for humanoids and other autonomous machines.

Texas Instruments, D3 Embedded, Lattice Semiconductor and NVIDIA are outlining a concrete radar-camera fusion stack for robotics rather than just talking […]