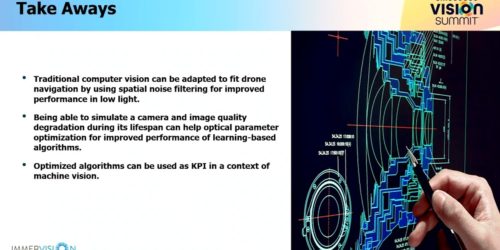

“Next-generation Computer Vision Methods for Automated Navigation of Unmanned Aircraft,” a Presentation from Immervision

Julie Buquet, Applied Researcher for Imaging and AI at Immervision, presents the “Next-generation Computer Vision Methods for Automated Navigation of Unmanned Aircraft” tutorial at the May 2023 Embedded Vision Summit. Unmanned aircraft systems (UASs) need to perform accurate autonomous navigation using sense-and-avoid algorithms under varying illumination conditions. This requires robust algorithms able to perform consistently, […]