Restar Framos Demo: Sony IMX927 105 MP Global Shutter Image Sensor

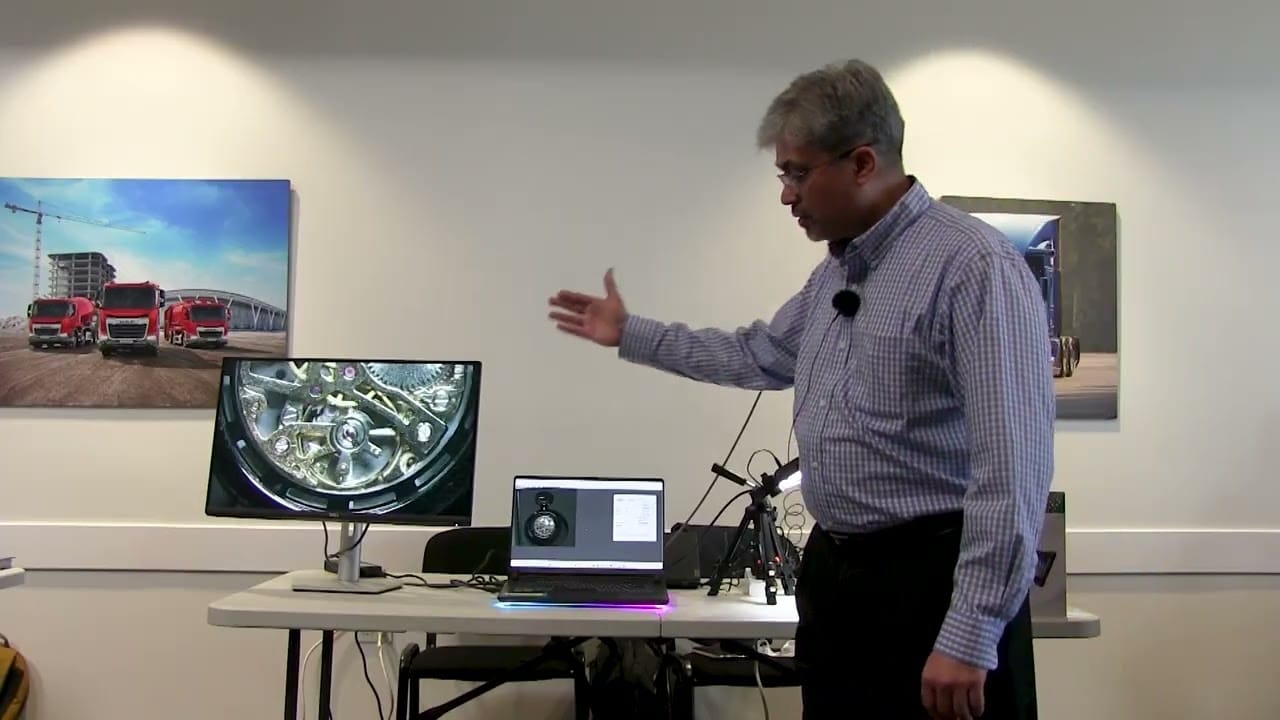

Prashant Mehta from Restar Framos presents the Sony IMX927, a high-performance 105-megapixel global shutter image sensor. The demonstration highlights the sensor’s extreme resolution and speed, showing its ability to capture live, detailed images of a watch’s internal mechanics. By displaying a 1:1 pixel representation on a monitor, the demo illustrates how the sensor provides […]
