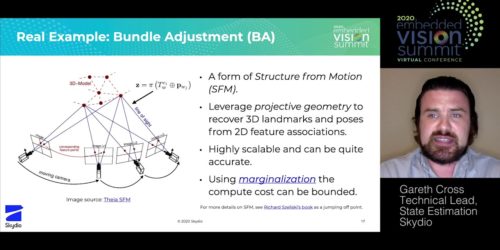

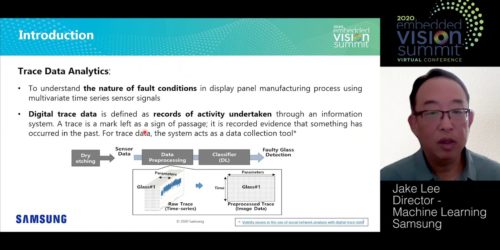

“Tackling Extreme Visual Conditions for Autonomous UAVs In the Wild,” a Presentation from Skydio

Hayk Martiros, Head of Autonomy at Skydio, presents the “Tackling Extreme Visual Conditions for Autonomous UAVs In the Wild” tutorial at the September 2020 Embedded Vision Summit. Skydio ships autonomous robots that are flown at scale in complex, unknown environments every day to capture incredible video, automate dangerous inspections and save the lives of first responders. […]